Circuit Breaker Pattern: Stopping Cascading Failures in Distributed Systems

Table of Contents

- How Cascading Failures Actually Happen

- Circuit Breaker Mechanics: The Three States

- Configuration Deep Dive: Thresholds and Windows

- Fallback Strategies: Failing Gracefully

- Implementation: Resilience4j for Java/Kotlin

- Implementation: Opossum for Node.js

- Service Mesh Level: Istio Outlier Detection

- Bulkhead Pattern: Isolating Thread Pools

- Combining Retry + Circuit Breaker Correctly

- Monitoring Circuit Breaker State

- Frequently Asked Questions

- Key Takeaway

How Cascading Failures Actually Happen

The threat model is specific and predictable:

    Time 0:  100 users/sec hitting /api/recommendations
             RecommendationService is healthy - avg 50ms response
    Time 1m: RecommendationService DB becomes slow (disk I/O saturation)
             RecommendationService now takes 30 seconds to respond
    Time 2m: API server creates a new thread per pending request
             100 users/sec x 30 seconds lag = 3,000 concurrent threads waiting
    Time 3m: API server reaches max thread pool (500 threads)
             API server now queues ALL requests - login fails, checkout fails
    Time 4m: Users cannot log in - the entire site is down
             Root cause: a slow RECOMMENDATION widget

This is a cascading failure caused by resource exhaustion: threads consumed by the slow service cause all other operations to fail.
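The arithmetic in the timeline is an application of Little's Law (requests in flight = arrival rate x latency). The sketch below only reproduces those numbers; the class and method names are made up for illustration, and it assumes every request blocks one thread for the full latency.

```java
/** Back-of-the-envelope numbers for the timeline (Little's Law: in flight = rate x latency). */
final class ThreadExhaustionEstimate {

    /** Average number of requests in flight, i.e. threads blocked on the slow dependency. */
    static double requestsInFlight(double requestsPerSecond, double latencySeconds) {
        return requestsPerSecond * latencySeconds;
    }

    /** Seconds until a pool of the given size is fully occupied once every call starts hanging. */
    static double secondsUntilPoolExhausted(int poolSize, double requestsPerSecond) {
        return poolSize / requestsPerSecond;
    }

    public static void main(String[] args) {
        System.out.println("Healthy (50 ms): " + requestsInFlight(100, 0.050) + " threads busy");
        System.out.println("Degraded (30 s): " + requestsInFlight(100, 30) + " threads wanted");
        System.out.println("500-thread pool full after " + secondsUntilPoolExhausted(500, 100) + " s");
    }
}
```

At 50 ms the service keeps roughly 5 threads busy; at 30 seconds it wants 3,000, so a 500-thread pool fills within about 5 seconds of the slowdown.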

Circuit Breaker Mechanics: The Three States

CLOSED State (Normal Operation)

- All calls pass through to the downstream service

- The circuit breaker records success/failure of each call

- Calculates failure rate using a sliding window (count-based or time-based)

- Transition to OPEN when failure rate crosses threshold

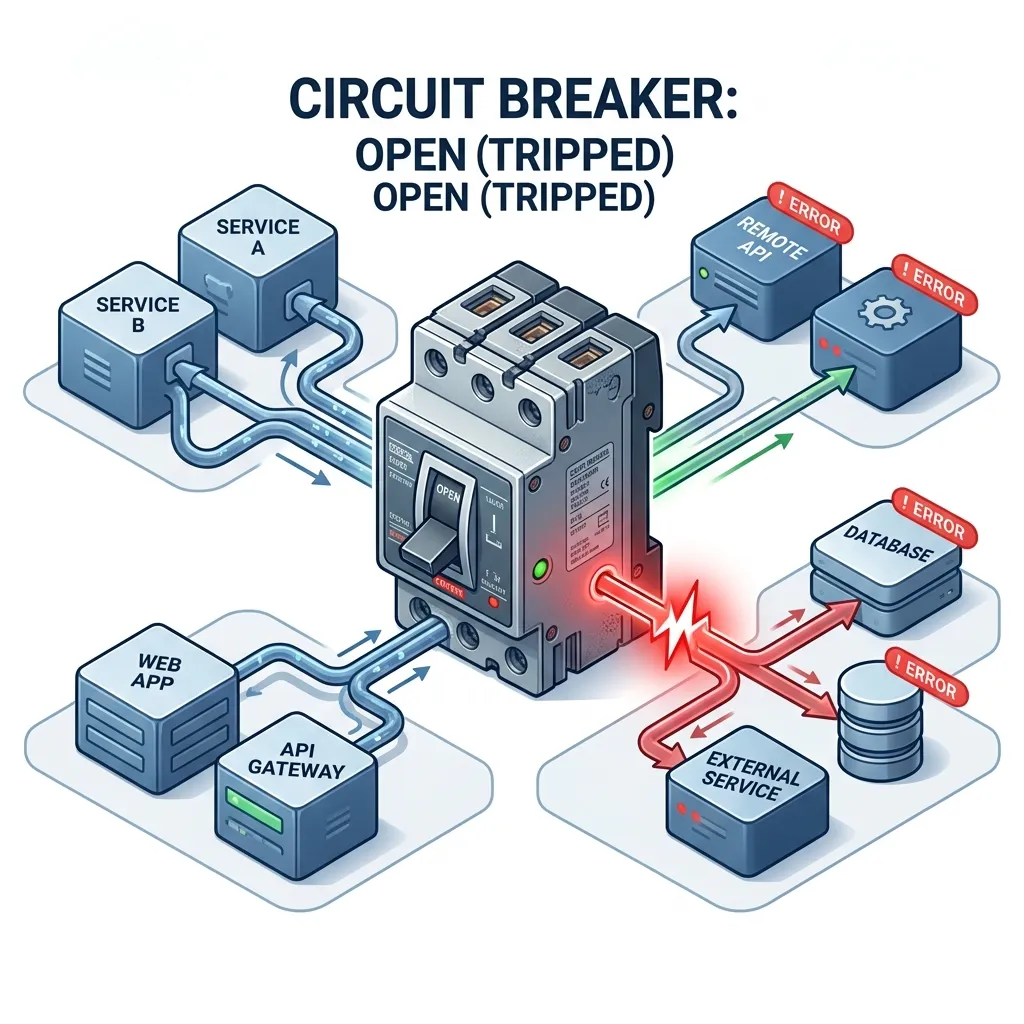

OPEN State (Protection Mode)

- All calls are blocked immediately - no network call is made

- Returns a predefined fallback response instantly

- The service gets complete relief - no requests, CPU/DB can recover

- After a configurable wait duration, moves to HALF-OPEN

HALF-OPEN State (Testing Recovery)

- Allows a small number of trial requests through

- If trial requests succeed: circuit closes (normal operation resumes)

- If trial requests fail: circuit re-opens and the wait starts over (some implementations lengthen the wait after each failed trial)

- Prevents flooding a recovering service
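The three states can be sketched as a small state machine. This is an illustration in plain Java, not how Resilience4j is implemented: the class name, the injectable clock, and the use of consecutive failures (instead of a sliding-window failure rate) are simplifying assumptions, and the class is not thread-safe.

```java
import java.time.Duration;
import java.util.function.LongSupplier;

/** Minimal three-state breaker for illustration; not thread-safe, names are hypothetical. */
final class SimpleCircuitBreaker {
    enum State { CLOSED, OPEN, HALF_OPEN }

    private final int failureThreshold;   // consecutive failures that open the circuit
    private final int trialCalls;         // successful trial calls needed to close it again
    private final long openWaitNanos;     // time to stay OPEN before allowing trial calls
    private final LongSupplier nanoClock; // injectable clock, e.g. System::nanoTime

    private State state = State.CLOSED;
    private int consecutiveFailures;
    private int trialSuccesses;
    private long openedAtNanos;

    SimpleCircuitBreaker(int failureThreshold, int trialCalls, Duration openWait, LongSupplier nanoClock) {
        this.failureThreshold = failureThreshold;
        this.trialCalls = trialCalls;
        this.openWaitNanos = openWait.toNanos();
        this.nanoClock = nanoClock;
    }

    /** True if a downstream call may be made now; moves OPEN -> HALF_OPEN once the wait has elapsed. */
    boolean tryAcquirePermission() {
        if (state == State.OPEN && nanoClock.getAsLong() - openedAtNanos >= openWaitNanos) {
            state = State.HALF_OPEN;
            trialSuccesses = 0;
        }
        return state != State.OPEN;
    }

    void onSuccess() {
        if (state == State.HALF_OPEN) {
            if (++trialSuccesses >= trialCalls) {
                state = State.CLOSED; // enough trial calls succeeded: resume normal operation
                consecutiveFailures = 0;
            }
        } else if (state == State.CLOSED) {
            consecutiveFailures = 0;
        }
    }

    void onFailure() {
        boolean trialFailed = state == State.HALF_OPEN;
        boolean thresholdHit = state == State.CLOSED && ++consecutiveFailures >= failureThreshold;
        if (trialFailed || thresholdHit) {
            state = State.OPEN; // block calls and restart the wait
            openedAtNanos = nanoClock.getAsLong();
        }
    }

    State state() {
        return state;
    }
}
```

A caller would check `tryAcquirePermission()` before each downstream call, return the fallback when it is false, and report the outcome through `onSuccess()` or `onFailure()`.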

Configuration Deep Dive: Thresholds and Windows

Two conditions can trip a circuit breaker:

- Failure Rate - Too many errors (5xx, connection refused, timeout)

- Slow Call Rate - Too many calls exceeding a slow call duration threshold (e.g., > 3 seconds)
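To make the two rates concrete, here is a sketch of a count-based window that tracks the last N calls in a ring buffer. It is an illustration only: the class and method names are hypothetical, and Resilience4j's internal bookkeeping is more elaborate than this.

```java
import java.time.Duration;

/** Count-based sliding window sketch: remembers the outcome of the last N calls. */
final class CountBasedWindow {
    private final boolean[] failed;
    private final boolean[] slow;
    private final Duration slowCallThreshold;
    private int next;      // slot that the next recorded call overwrites
    private int recorded;  // number of filled slots, capped at the window size

    CountBasedWindow(int size, Duration slowCallThreshold) {
        this.failed = new boolean[size];
        this.slow = new boolean[size];
        this.slowCallThreshold = slowCallThreshold;
    }

    void record(boolean success, Duration elapsed) {
        failed[next] = !success;
        slow[next] = elapsed.compareTo(slowCallThreshold) > 0;
        next = (next + 1) % failed.length; // oldest entry is evicted once the window is full
        recorded = Math.min(recorded + 1, failed.length);
    }

    /** Percentage of failed calls in the window, or -1 if nothing has been recorded. */
    double failureRate() {
        return rate(failed);
    }

    /** Percentage of calls slower than the threshold, or -1 if nothing has been recorded. */
    double slowCallRate() {
        return rate(slow);
    }

    private double rate(boolean[] flags) {
        if (recorded == 0) {
            return -1;
        }
        int hits = 0;
        for (int i = 0; i < recorded; i++) {
            if (flags[i]) {
                hits++;
            }
        }
        return 100.0 * hits / recorded;
    }

    /** Trip only after minimumCalls have been seen and either rate reaches its threshold. */
    boolean shouldTrip(int minimumCalls, double failureRateThreshold, double slowCallRateThreshold) {
        if (recorded < minimumCalls) {
            return false;
        }
        return failureRate() >= failureRateThreshold || slowCallRate() >= slowCallRateThreshold;
    }
}
```

The minimum-calls guard matters: without it, a single failed call right after startup would read as a 100% failure rate.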

    # Resilience4j configuration (application.yml):
    resilience4j:
      circuitbreaker:
        instances:
          recommendationService:
            registerHealthIndicator: true
            # Sliding window: count-based (last N calls) or time-based (last N seconds):
            slidingWindowType: COUNT_BASED
            slidingWindowSize: 20 # Evaluate based on last 20 calls
            # Trip when 50%+ of calls fail:
            failureRateThreshold: 50
            # Also trip when 60%+ of calls take > 3 seconds:
            slowCallRateThreshold: 60
            slowCallDurationThreshold: 3000ms
            # Minimum calls before evaluating failure rate:
            minimumNumberOfCalls: 5
            # How long to stay OPEN before testing:
            waitDurationInOpenState: 30s
            # How many trial calls in HALF-OPEN state:
            permittedNumberOfCallsInHalfOpenState: 5
            # Which exceptions count as failures:
            recordExceptions:
              - java.io.IOException
              - java.util.concurrent.TimeoutException
            # Which exceptions do NOT trip the circuit:
            ignoreExceptions:
              - com.myapp.exceptions.BusinessRuleException # Not a service fault

Fallback Strategies: Failing Gracefully

A circuit breaker without a good fallback just replaces one failure mode with another. Design fallbacks that provide real value:

| Fallback Type | Description | Example |

|---|---|---|

| Static response | Return a hardcoded default | Empty recommendations list |

| Cached response | Return last known good data from Redis | Show 1-hour-old recommendations |

| Degraded mode | Return reduced functionality | Show top-10 all-time bestsellers |

| Error with context | Explicit UI message | "Recommendations temporarily unavailable" |

| Alternative service | Call a simpler fallback service | Basic category-based suggestions |

Never return confusing errors or silent failures - users should always know if a feature is degraded.
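Several rows of the table can be chained: try live data, then the cache, then a static default, and tell the caller which one was used. The sketch below is one way to express that in plain Java. All names are hypothetical, and the result carries a `degraded` flag so the UI can label stale or generic content instead of failing silently.

```java
import java.util.List;
import java.util.Optional;
import java.util.function.Supplier;

/** Fallback chain sketch: live data, then cached data, then a static default. */
final class RecommendationFallbacks {

    /** Carries the data plus where it came from, so the UI can label degraded results. */
    static final class Result {
        final List<String> items;
        final String source;    // "live", "cache" or "static"
        final boolean degraded;

        Result(List<String> items, String source, boolean degraded) {
            this.items = items;
            this.source = source;
            this.degraded = degraded;
        }
    }

    static Result fetch(Supplier<List<String>> live,
                        Supplier<Optional<List<String>>> cache,
                        List<String> staticDefault) {
        try {
            return new Result(live.get(), "live", false);
        } catch (RuntimeException liveFailure) {
            // Live call failed or was rejected by an open circuit: try last known good data.
        }
        try {
            Optional<List<String>> cached = cache.get();
            if (cached.isPresent()) {
                return new Result(cached.get(), "cache", true);
            }
        } catch (RuntimeException cacheFailure) {
            // The cache is unavailable as well: fall through to the static default.
        }
        return new Result(staticDefault, "static", true);
    }
}
```

The ordering is a design choice: cached personal data is usually closer to what the user expects than a generic list, so it is tried first.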

Implementation: Resilience4j for Java/Kotlin

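    import io.github.resilience4j.circuitbreaker.CallNotPermittedException;
    import io.github.resilience4j.circuitbreaker.annotation.CircuitBreaker;
    import java.time.Duration;
    import java.util.List;
    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;
    import org.springframework.stereotype.Service;
    import org.springframework.web.reactive.function.client.WebClient;

    @Service
    public class RecommendationClient {
        private static final Logger log = LoggerFactory.getLogger(RecommendationClient.class);

        private final WebClient webClient;
        // Application-specific collaborators (their types are not shown in this article):
        private final ProductRepository productRepository;
        private final RedisCache redisCache;

        public RecommendationClient(WebClient webClient, ProductRepository productRepository, RedisCache redisCache) {
            this.webClient = webClient;
            this.productRepository = productRepository;
            this.redisCache = redisCache;
        }

        @CircuitBreaker(name = "recommendationService", fallbackMethod = "getStaticRecommendations")
        public List<Product> getPersonalizedRecommendations(String userId) {
            return webClient.get()
                .uri("/recommendations/{userId}", userId)
                .retrieve()
                .bodyToFlux(Product.class)
                .collectList()
                .timeout(Duration.ofSeconds(3)) // Combine with timeout!
                .block();
        }

        // Fallback when the circuit is OPEN (the call was not permitted)
        public List<Product> getStaticRecommendations(String userId, CallNotPermittedException ex) {
            log.warn("Circuit OPEN for recommendations. User: {}", userId);
            return productRepository.findTop10Bestsellers(); // Degraded fallback
        }

        // Fallback for any other failure, including the 3-second timeout
        // (block() may rethrow the timeout wrapped in a RuntimeException, so match on Exception)
        public List<Product> getStaticRecommendations(String userId, Exception ex) {
            log.warn("Recommendation call failed or timed out. Returning cached. User: {}", userId);
            return redisCache.getOrDefault("top10", List.of()); // Cached fallback
        }
    }

Bulkhead Pattern: Isolating Thread Pools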

The Bulkhead pattern (named after ship watertight compartments) runs different services in separate thread pools, so one slow service can't exhaust all threads:
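The isolation idea can first be sketched with plain JDK executors: a dedicated, bounded pool for one dependency, which rejects work instead of queueing it without limit. The class name, pool sizes, and fallback handling here are illustrative assumptions, not Resilience4j's implementation, and the queue capacity must be at least 1.

```java
import java.util.List;
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.RejectedExecutionException;
import java.util.concurrent.ThreadPoolExecutor;
import java.util.concurrent.TimeUnit;
import java.util.function.Supplier;

/** Thread-pool bulkhead sketch: calls to one dependency get their own bounded pool. */
final class RecommendationBulkhead {
    private final ThreadPoolExecutor pool;

    RecommendationBulkhead(int maxThreads, int queueCapacity) {
        this.pool = new ThreadPoolExecutor(
                maxThreads, maxThreads,
                0L, TimeUnit.MILLISECONDS,
                new ArrayBlockingQueue<>(queueCapacity),  // bounded queue, never grows
                new ThreadPoolExecutor.AbortPolicy());    // reject when threads and queue are full
    }

    /** Runs the call on the dedicated pool; answers with the fallback at once if the pool is saturated. */
    CompletableFuture<List<String>> submit(Supplier<List<String>> call, List<String> fallback) {
        try {
            return CompletableFuture.supplyAsync(call, pool);
        } catch (RejectedExecutionException saturated) {
            // No thread and no queue slot left: fail fast instead of tying up the caller.
            return CompletableFuture.completedFuture(fallback);
        }
    }

    void shutdown() {
        pool.shutdownNow();
    }
}
```

Because the slow calls can only occupy this pool, request-handling threads for login or checkout are never consumed by them. Resilience4j expresses the same isolation declaratively: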

    @Bulkhead(
        name = "recommendationService",
        type = Bulkhead.Type.THREADPOOL,
        fallbackMethod = "getStaticRecommendations" // the fallback must also return CompletableFuture<List<Product>>
    )
    public CompletableFuture<List<Product>> getRecommendationsAsync(String userId) {
        return CompletableFuture.supplyAsync(
            () -> webClient.get().uri("/recommendations/{userId}", userId)...
        );
    }

    # Configuration: max 10 threads for the recommendation service.
    # Only 10 threads can wait for recommendations - chat, login, checkout are unaffected
    resilience4j.thread-pool-bulkhead.instances.recommendationService.maxThreadPoolSize: 10
    resilience4j.thread-pool-bulkhead.instances.recommendationService.coreThreadPoolSize: 5

Combining Retry + Circuit Breaker Correctly

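A critical mistake: putting Retry outside Circuit Breaker. The breaker then counts every retry attempt as a separate call instead of one result per logical request, and once the circuit is OPEN each retry is rejected immediately, so the extra attempts are wasted. Until the circuit opens, those retries also send more requests to a failing service, worsening the cascade.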
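The effect of the nesting order can be shown with two plain Java decorators. This is a sketch under simplifying assumptions: `recordingFailures` is a hypothetical stand-in for the breaker's bookkeeping (it only counts failures), and `retrying` retries immediately without backoff.

```java
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Supplier;

/** Shows how many failures a breaker observes depending on which decorator is outermost. */
final class DecoratorOrderDemo {

    /** Stand-in for a circuit breaker's bookkeeping: counts every failure it observes. */
    static <T> Supplier<T> recordingFailures(Supplier<T> call, AtomicInteger failuresSeen) {
        return () -> {
            try {
                return call.get();
            } catch (RuntimeException e) {
                failuresSeen.incrementAndGet();
                throw e;
            }
        };
    }

    /** Retries up to maxAttempts times (no backoff) and rethrows the last failure. */
    static <T> Supplier<T> retrying(Supplier<T> call, int maxAttempts) {
        if (maxAttempts < 1) {
            throw new IllegalArgumentException("maxAttempts must be at least 1");
        }
        return () -> {
            RuntimeException last = null;
            for (int attempt = 1; attempt <= maxAttempts; attempt++) {
                try {
                    return call.get();
                } catch (RuntimeException e) {
                    last = e;
                }
            }
            throw last;
        };
    }

    /** Runs one logical request against an always-failing service and reports what the breaker saw. */
    static int failuresRecorded(boolean breakerOutermost) {
        AtomicInteger seen = new AtomicInteger();
        Supplier<String> failing = () -> {
            throw new IllegalStateException("downstream unavailable");
        };
        Supplier<String> decorated;
        if (breakerOutermost) {
            decorated = recordingFailures(retrying(failing, 3), seen); // breaker wraps retry
        } else {
            decorated = retrying(recordingFailures(failing, seen), 3); // retry wraps breaker
        }
        try {
            decorated.get();
        } catch (RuntimeException expected) {
            // The request fails either way; only the breaker's view differs.
        }
        return seen.get();
    }
}
```

With the breaker outermost, one failed request is recorded as one failure; with retry outermost, the same request is recorded as three. In Resilience4j's annotation style the two arrangements look like this: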

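    // Note: Resilience4j's Spring aspects do not nest by the order the annotations are written.
    // Per the Resilience4j Spring Boot docs, the default order is Retry( CircuitBreaker( method ) ).

    // ❌ WRONG: Retry wraps Circuit Breaker (the default aspect order)
    // If the CB is OPEN, Retry still makes its 3 attempts and each one is rejected
    // immediately (not wrong, but wasteful)
    @Retry(name = "recSvc")
    @CircuitBreaker(name = "recSvc")
    public Product getRecommendation(String userId) { ... }

    // ✅ CORRECT: Circuit Breaker wraps Retry
    // Same annotations, plus configuration that sets resilience4j.retry.retryAspectOrder
    // to a greater value than resilience4j.circuitbreaker.circuitBreakerAspectOrder
    // Retry tries 3 times -> CB records the aggregated result -> trips after threshold
    @CircuitBreaker(name = "recSvc")
    @Retry(name = "recSvc")
    public Product getRecommendation(String userId) { ... }

    // Even better: use separate names, with Retry for transient network errors only
    // and the CB for detecting genuinely failing services

Monitoring Circuit Breaker State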

Expose circuit breaker metrics to your observability stack:

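    # Spring Boot Actuator exposes CB health:
    management:
      endpoints.web.exposure.include: health,metrics,prometheus,circuitbreakerevents
      health.circuitbreakers.enabled: true

    # Prometheus metrics exposed (labels and value encoding vary by Resilience4j version):
    # resilience4j_circuitbreaker_state{name="recommendationService",state="open"} 1.0
    #   Micrometer-based versions publish one gauge per state; 1 marks the current state
    #   (some older versions published a single gauge: 0 = CLOSED, 1 = OPEN, 2 = HALF_OPEN)
    # resilience4j_circuitbreaker_failure_rate{name="recommendationService"} 65.0
    #   Failure rate as a percentage

Alert when: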

- Any circuit enters OPEN state -> team notification (service is DOWN)

- Circuit stays OPEN > 5 minutes -> page the on-call engineer
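The two rules reduce to a small decision function. In practice this logic would normally live in the alerting system (for example as alert rules over the exported metrics); the Java sketch below, with hypothetical names, only pins down the intended behavior.

```java
import java.time.Duration;

/** Escalation sketch for the two alert rules: notify on OPEN, page after 5 minutes in OPEN. */
final class CircuitAlertPolicy {
    enum State { CLOSED, OPEN, HALF_OPEN }
    enum Action { NONE, NOTIFY_TEAM, PAGE_ON_CALL }

    private static final Duration PAGE_AFTER = Duration.ofMinutes(5);

    /** Decides what to do given the circuit's current state and how long it has been in it. */
    static Action evaluate(State state, Duration timeInState) {
        if (state != State.OPEN) {
            return Action.NONE;
        }
        return timeInState.compareTo(PAGE_AFTER) > 0 ? Action.PAGE_ON_CALL : Action.NOTIFY_TEAM;
    }
}
```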

Frequently Asked Questions

When should I lower the failure threshold vs lengthen the wait duration?

Failure threshold controls how sensitive the circuit is - lower it (30%) for critical payment services, keep it higher (60%) for non-critical recommendation engines. Wait duration controls how long the downstream gets to recover - lengthen it (60s) for services needing database recovery; keep it shorter (10s) for services with transient network issues.

Can I use Circuit Breaker at the API Gateway level instead of per-service?

Yes - this is the service mesh approach. Istio's DestinationRule with outlierDetection implements circuit breaking at the network layer, between any two services, without any code changes. The trade-off: it only detects HTTP 5xx errors and TCP connection failures, not application-level errors like malformed responses. Application-level circuit breakers (Resilience4j) can detect any custom failure condition.

Key Takeaway

The Circuit Breaker pattern is the difference between a system that fails completely and one that fails gracefully. Combined with the Bulkhead pattern (thread pool isolation) and well-designed fallbacks, it transforms a distributed system from a "house of cards" into a resilient architecture where partial failures remain partial. The 30 minutes spent configuring Resilience4j or Istio outlier detection is insurance against the 4am incident where a slow recommendation service brings down your entire checkout flow.
