COBOL vs Java: Mainframe Modernization Comparison (2026)

When enterprises discuss mainframe modernization, the COBOL vs Java debate sits at the centre of almost every architecture decision. The question is rarely purely technical — it involves cost, risk, regulatory compliance, institutional knowledge, and the often-underestimated value of code that has been running correctly for 30 years. This guide gives you a clear-eyed technical and business comparison so you can make an informed decision rather than one driven by hype.

What you'll learn in this guide:

- Why COBOL continues to outperform Java on CPU-bound mainframe batch workloads

- The real costs and timelines of COBOL-to-Java migration projects (and why most fail to complete)

- Practical modernization strategies: API wrapping, strangler-fig pattern, and selective rewrite

- How to decide whether COBOL or Java is the right choice for new development in your organisation


The Scale of the Problem

Before comparing languages, it helps to understand why the decision matters so much. There are an estimated 800 billion lines of COBOL still running in production globally. The financial services industry alone processes roughly $3 trillion in daily transactions through COBOL systems. The US Social Security Administration, major airline reservation systems, most retail banking cores, and virtually every insurance back office run on COBOL.

This is not legacy code waiting to be replaced — it is mission-critical infrastructure that Java has not replaced despite 30 years of effort. Understanding why requires looking at what each language actually does well.

Language Design and Purpose

COBOL (Common Business-Oriented Language) was designed in 1959 specifically for business data processing. Its core strengths are:

- Fixed-point decimal arithmetic: the COMP-3 packed-decimal format maps directly to z/Architecture decimal instructions, so financial calculations are fast in hardware and represent decimal fractions exactly, which binary floating-point cannot do

- Self-documenting syntax — COBOL reads almost like English prose, making audit and compliance review tractable for non-programmers

- Record-oriented I/O: sequential (QSAM) and VSAM (KSDS, ESDS, RRDS) file handling is built into the language, not bolted on via libraries

- Batch throughput — COBOL batch jobs routinely process hundreds of millions of records per hour on z/OS
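To make the fixed-point argument concrete, here is a minimal Java sketch of why binary floating-point is a poor fit for money. The class and method names are illustrative only. Adding 0.10 ten times with `double` does not produce exactly 1.0, while decimal arithmetic (what a COBOL fixed-point field gives you by default) does.

```java
import java.math.BigDecimal;

final class DecimalVsBinary {
    // Adds 0.10 repeatedly using binary floating point (double).
    static double sumAsDouble(int times) {
        double total = 0.0;
        for (int i = 0; i < times; i++) {
            total += 0.10;
        }
        return total;
    }

    // Adds 0.10 repeatedly using decimal arithmetic, as a fixed-point field would.
    static BigDecimal sumAsDecimal(int times) {
        BigDecimal step = new BigDecimal("0.10");
        BigDecimal total = BigDecimal.ZERO;
        for (int i = 0; i < times; i++) {
            total = total.add(step);
        }
        return total;
    }

    public static void main(String[] args) {
        System.out.println(sumAsDouble(10) == 1.0);                           // false
        System.out.println(sumAsDecimal(10).compareTo(BigDecimal.ONE) == 0);  // true
    }
}
```

In Java you have to opt in to decimal arithmetic with `BigDecimal`; in COBOL it is the default for numeric fields with an implied decimal point.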

Java was designed in 1995 for write-once-run-anywhere portability. Its core strengths are:

- Object-oriented design — encapsulation, inheritance, and polymorphism support modern software engineering practices

- Ecosystem — Maven, Spring, JUnit, and thousands of open-source libraries accelerate development

- Developer availability — far more Java developers exist in the market than COBOL specialists

- Cloud-native tooling — Kubernetes, Docker, and CI/CD pipelines integrate naturally with Java

These are different tools built for different eras. The question is whether the differences matter for your specific workload.

Performance Comparison

Batch Processing

For CPU-intensive batch workloads (sorting, aggregating, and transforming large record files), COBOL typically wins on z/OS. IBM Enterprise COBOL compiles to highly optimized native machine code. COMP-3 arithmetic maps to the hardware decimal instructions AP, SP, MP, and DP (add, subtract, multiply, and divide decimal), which is typically much faster than Java's BigDecimal class for the same operations.
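For readers who have not seen packed decimal before, the following Java sketch shows the byte layout that COMP-3 uses: two decimal digits per byte, with the final half-byte holding the sign (0xC for positive, 0xD for negative). The `PackedDecimal` class is a hypothetical helper written for this article, not part of any IBM or JDK API, and it handles only the unscaled integer value; the implied decimal point of a `V` in the PICTURE clause is tracked separately by the program.

```java
final class PackedDecimal {
    // Packs a signed integer into COMP-3 bytes for a field of the given digit count,
    // e.g. digits = 5 corresponds to PIC S9(5) COMP-3 and yields 3 bytes.
    // Assumes 1 <= digits <= 18.
    static byte[] pack(long value, int digits) {
        long magnitude = Math.abs(value);
        long limit = 1;
        for (int i = 0; i < digits; i++) {
            limit *= 10;
        }
        if (magnitude >= limit) {
            throw new ArithmeticException("value does not fit in " + digits + " digits");
        }
        int length = digits / 2 + 1;
        byte[] out = new byte[length];
        int signNibble = value < 0 ? 0x0D : 0x0C;
        // Last byte: lowest digit in the high nibble, sign in the low nibble.
        out[length - 1] = (byte) (((magnitude % 10) << 4) | signNibble);
        magnitude /= 10;
        // Remaining bytes, right to left: two digits per byte.
        for (int i = length - 2; i >= 0; i--) {
            int low = (int) (magnitude % 10);
            magnitude /= 10;
            int high = (int) (magnitude % 10);
            magnitude /= 10;
            out[i] = (byte) ((high << 4) | low);
        }
        return out;
    }

    // Reverses pack(): reads the digit nibbles, then applies the sign nibble.
    static long unpack(byte[] packed) {
        long magnitude = 0;
        for (int i = 0; i < packed.length - 1; i++) {
            magnitude = magnitude * 100 + ((packed[i] >> 4) & 0x0F) * 10 + (packed[i] & 0x0F);
        }
        int last = packed[packed.length - 1];
        magnitude = magnitude * 10 + ((last >> 4) & 0x0F);
        return (last & 0x0F) == 0x0D ? -magnitude : magnitude;
    }
}
```

For example, `pack(12345, 5)` produces the three bytes `0x12 0x34 0x5C`. On z/OS the decimal instructions operate on this representation directly, whereas a Java program has to convert it into an object before doing any arithmetic.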

As a rough illustration rather than a formal benchmark: a COBOL program reading 10 million VSAM records, applying interest calculations with COMP-3 arithmetic, and writing output typically completes in under 2 minutes. An equivalent Java program using BigDecimal for precision-safe arithmetic on the same hardware often takes 4–8 minutes without careful JVM tuning. Actual results depend heavily on record size, I/O configuration, and JVM settings.
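The per-record work in the Java version of such a job might look like the sketch below. It is a simplified assumption of what an interest calculation involves (simple daily interest on a 365-day year, rounded to cents), not a reconstruction of any particular production program, and the `InterestCalc` name is made up for illustration.

```java
import java.math.BigDecimal;
import java.math.RoundingMode;

final class InterestCalc {
    private static final BigDecimal DAYS_IN_YEAR = new BigDecimal("365");

    // Simple daily interest, rounded half-up to two decimal places.
    // Every call allocates intermediate BigDecimal objects, which is part of
    // the overhead compared with in-place packed-decimal arithmetic.
    static BigDecimal dailyInterest(BigDecimal balance, BigDecimal annualRate) {
        return balance.multiply(annualRate)
                .divide(DAYS_IN_YEAR, 2, RoundingMode.HALF_UP);
    }

    public static void main(String[] args) {
        System.out.println(dailyInterest(new BigDecimal("10000.00"), new BigDecimal("0.0365"))); // 1.00
    }
}
```

The rounding mode matters: `RoundingMode.HALF_UP` rounds ties away from zero, which is the behaviour to match when the original COBOL statement uses the default `ROUNDED` phrase. A mismatch here is a common source of one-cent differences between old and new systems.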

Online Transaction Processing

For short-duration CICS transactions — the kind that respond to a terminal or API call in milliseconds — the gap narrows. COBOL CICS programs have near-zero startup overhead. Java programs in Liberty or WebSphere Application Server also perform well once the JVM is warmed up, but cold-start latency is measurable.

For high-volume OLTP (tens of thousands of transactions per second), COBOL remains competitive because CICS is extraordinarily well-tuned for z/OS workloads.

Web Services and API Layer

Here Java wins clearly. Exposing business logic as REST APIs, integrating with microservices, and building event-driven architectures is natural in Java and awkward in COBOL. Modern architectures often layer a Java API gateway in front of COBOL business logic — a pattern IBM actively supports with z/OS Connect.

Cost Comparison

Cost analysis must cover multiple dimensions:

| Dimension | COBOL | Java |
|---|---|---|
| Development labour | High (specialist scarcity) | Moderate (large talent pool) |
| Runtime compute | Low (z/OS CPU efficiency) | Moderate to high |
| Migration/rewrite cost | Low (keep running) | Very high (3–10 year projects) |
| Risk of migration | N/A | High (regression, data integrity) |
| Tooling/licensing | IBM compiler + tools | Generally open-source |
| Operational expertise | Mainframe admins | DevOps/cloud engineers |

The key insight is that keeping COBOL running is cheap; migrating away from COBOL is expensive. The Gartner estimate for a typical bank's COBOL-to-Java migration is $300–$500 million over 5–7 years, with a high probability of cost overrun.
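A quick break-even calculation shows why the migration cost dominates the decision. The sketch below divides a one-off migration cost by the annual saving it is expected to produce. The annual run costs in the example are hypothetical placeholders chosen for round numbers; only the $300 million migration figure comes from the estimate above.

```java
import java.util.OptionalDouble;

final class MigrationBreakEven {
    // Years until cumulative savings repay the migration cost.
    // Returns empty when the target platform is not cheaper to run,
    // in which case the migration never pays back on cost alone.
    static OptionalDouble breakEvenYears(double migrationCost,
                                         double annualLegacyCost,
                                         double annualTargetCost) {
        double annualSaving = annualLegacyCost - annualTargetCost;
        if (annualSaving <= 0) {
            return OptionalDouble.empty();
        }
        return OptionalDouble.of(migrationCost / annualSaving);
    }

    public static void main(String[] args) {
        // Hypothetical: $50M/year to run the mainframe, $20M/year after migration.
        System.out.println(breakEvenYears(300_000_000, 50_000_000, 20_000_000)); // OptionalDouble[10.0]
    }
}
```

Even with a generous assumed saving of $30 million per year, the payback period is ten years after the migration finishes, and that ignores overruns and the cost of running both systems in parallel during the project.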

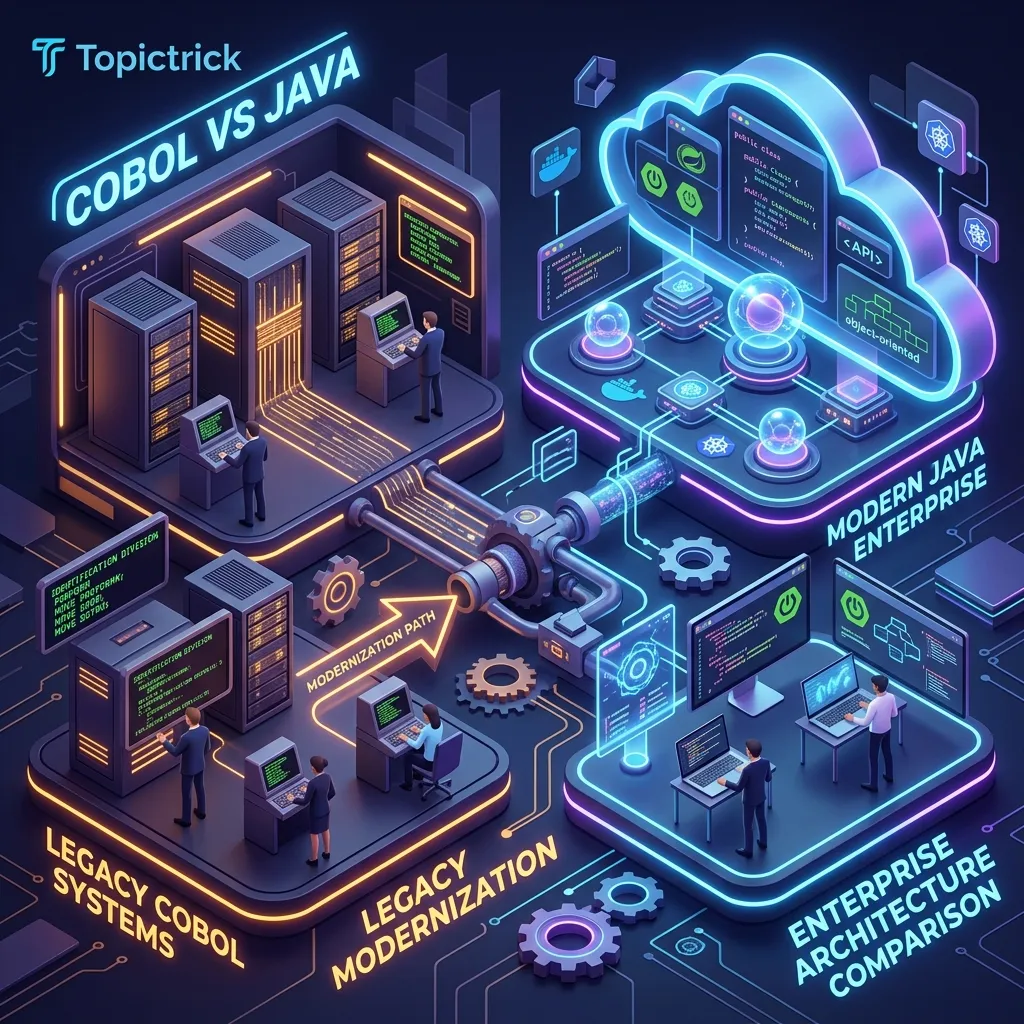

Modernization Strategies

Most successful modernization projects do not attempt a full rewrite. They use one of these patterns:

1. Encapsulation (API Wrapping)

Expose COBOL programs as REST or SOAP services using IBM z/OS Connect EE or Micro Focus COBOL Server. Java microservices call the COBOL API. The COBOL code is unchanged; you gain a modern interface without migration risk. This is the most common pattern in banking.
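From the Java side, a wrapped COBOL program looks like any other REST service. The sketch below uses the JDK's built-in `java.net.http` client (Java 11 or later). The host name, the `/accounts/{id}/balance` path, and the `CobolApiClient` class are all assumptions made for illustration; the real paths depend on how the service is defined in your API layer.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.time.Duration;

final class CobolApiClient {
    private final String baseUrl;
    private final HttpClient http = HttpClient.newHttpClient();

    CobolApiClient(String baseUrl) {
        this.baseUrl = baseUrl;
    }

    // Builds the GET request for a hypothetical balance-inquiry endpoint.
    HttpRequest balanceRequest(String accountId) {
        if (!accountId.matches("[A-Za-z0-9]+")) {
            throw new IllegalArgumentException("account id must be alphanumeric");
        }
        return HttpRequest.newBuilder(URI.create(baseUrl + "/accounts/" + accountId + "/balance"))
                .header("Accept", "application/json")
                .timeout(Duration.ofSeconds(5))
                .GET()
                .build();
    }

    // Sends the request and returns the raw JSON body produced by the API layer.
    String fetchBalanceJson(String accountId) throws IOException, InterruptedException {
        HttpResponse<String> response =
                http.send(balanceRequest(accountId), HttpResponse.BodyHandlers.ofString());
        if (response.statusCode() != 200) {
            throw new IllegalStateException("unexpected status " + response.statusCode());
        }
        return response.body();
    }
}
```

The calling microservice never sees a copybook or a CICS region: it sends an account identifier and receives JSON, which is what makes this pattern low-risk for the COBOL side.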

2. Strangler Fig

New functionality is written in Java. Existing COBOL modules are replaced one at a time as they require significant changes. Over 5–10 years the proportion of Java grows while COBOL shrinks. The system never has a big-bang cutover.
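The core of a strangler-fig setup is a routing layer that decides, per transaction, whether the new or the legacy implementation handles the call. A minimal sketch, assuming string payloads and a hypothetical `StranglerRouter` class (real routers usually sit in an API gateway or in the CICS front end):

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.UnaryOperator;

final class StranglerRouter {
    private final UnaryOperator<String> legacy;
    private final Map<String, UnaryOperator<String>> migrated = new ConcurrentHashMap<>();

    // The legacy handler stands in for a call into the existing COBOL system.
    StranglerRouter(UnaryOperator<String> legacy) {
        this.legacy = legacy;
    }

    // Registers a Java implementation for one transaction code.
    void migrate(String transactionCode, UnaryOperator<String> handler) {
        migrated.put(transactionCode, handler);
    }

    // Sends a transaction code back to the legacy path, e.g. after a defect is found.
    void rollback(String transactionCode) {
        migrated.remove(transactionCode);
    }

    // Routes to the migrated handler if one exists, otherwise to legacy.
    String handle(String transactionCode, String payload) {
        return migrated.getOrDefault(transactionCode, legacy).apply(payload);
    }
}
```

Usage is one line per migrated function, for example `router.migrate("BALINQ", payload -> newBalanceService(payload))`, where `newBalanceService` is whatever Java code replaces that module. Because anything unregistered falls through to COBOL, the cutover granularity is a single transaction code, and `rollback` restores the old path without a deployment.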

3. Selective Rewrite

Only the modules with the highest change velocity (e.g., customer-facing digital features) are rewritten in Java. Stable batch engines processing payroll or interest calculations stay in COBOL indefinitely because they work correctly and require no changes.

4. Full Rewrite

Some organizations attempt to replace everything at once. The success rate is low, and the cautionary examples are well documented: TSB Bank's 2018 migration disaster, the Commonwealth Bank of Australia's core banking replacement (eventually completed, but widely reported to have run well over its original budget and schedule), and multiple airline reservation system rewrites that were quietly abandoned. The root cause is almost always underestimating the business logic encoded in COBOL that no living person fully understands.

Skills and Hiring

The talent gap is real and often cited as the primary driver of modernization. The average COBOL developer is commonly reported to be over 55 years old, and most university curricula dropped COBOL in the 1990s. This creates genuine succession risk.

However, the talent gap argument cuts both ways. COBOL developers command premium salaries precisely because of their scarcity — $75,000 to $200,000+ per year in financial services. And the shortage of experienced COBOL developers makes migrations harder, not easier, because institutional knowledge walks out the door during the project.

IBM has responded by adding COBOL support to VS Code through its Z Open Editor extension, extending IBM Enterprise COBOL with modern features such as JSON and XML parsing and generation, and partnering with universities to teach COBOL. GnuCOBOL provides a free compiler for learning. The talent pipeline is thin but not zero.

Interoperability: The Hybrid Reality

The real-world answer to "COBOL vs Java" is increasingly "COBOL and Java." Modern mainframe architectures look like this:

- Java handles REST APIs, user interfaces, cloud integration, and new feature development

- COBOL handles the transaction engine, batch processing, and any logic where COMP-3 precision and z/OS performance matter

- IBM z/OS Connect or CICS Web Services provides the bridge
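Whatever product provides the bridge, its job is to translate between COBOL's fixed-width records and Java's typed objects. The sketch below shows that translation for a hypothetical two-field layout: `ACCOUNT-ID PIC X(10)` followed by `BALANCE PIC 9(7)V99`, assumed to be unsigned and already converted from EBCDIC to text. The layout and the `AccountRecord` class are invented for illustration; in practice this mapping is usually generated from the copybook rather than written by hand.

```java
import java.math.BigDecimal;
import java.util.Locale;

final class AccountRecord {
    final String accountId;
    final BigDecimal balance;

    AccountRecord(String accountId, BigDecimal balance) {
        this.accountId = accountId;
        this.balance = balance;
    }

    // Parses a 19-character record: 10 characters of id, then 9 digits
    // with an implied decimal point before the last two.
    static AccountRecord parse(String line) {
        if (line.length() != 19) {
            throw new IllegalArgumentException("expected 19 characters, got " + line.length());
        }
        String id = line.substring(0, 10).trim();
        BigDecimal balance = new BigDecimal(line.substring(10, 19)).movePointLeft(2);
        return new AccountRecord(id, balance);
    }

    // Formats back to the fixed-width layout: id left-justified and space-padded,
    // balance as zero-padded digits with the decimal point removed.
    String format() {
        long cents = balance.movePointRight(2).longValueExact();
        return String.format(Locale.ROOT, "%-10s%09d", accountId, cents);
    }
}
```

The implied decimal point is the detail that most often goes wrong: the record carries only digits, so both sides must agree on the scale from the copybook definition.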

This is not a compromise — it is a deliberate architecture that uses each language where it performs best. Organizations like Goldman Sachs, JPMorgan, and Citibank run millions of lines of both COBOL and Java in production simultaneously.

When to Choose COBOL (Keep or Write New)

- Your workload involves large-scale batch processing with decimal arithmetic

- You operate under strict financial regulators who audit your calculation logic

- You are writing new CICS transaction code that integrates with existing COBOL programs

- Your team has COBOL expertise and the system is stable

- You are learning mainframe development for a career in banking or insurance

When to Choose Java (New Development or Greenfield)

- You are building a new microservice, API layer, or customer-facing application

- Your team is Java-native and has no mainframe background

- The workload does not require z/OS-specific performance characteristics

- You are targeting cloud deployment (AWS, Azure, GCP)

Conclusion

COBOL and Java are not direct competitors for the same use cases — they are complementary tools that modern enterprises increasingly run side by side. COBOL wins on batch throughput, decimal precision, and total cost of ownership for existing systems. Java wins on ecosystem, developer availability, and cloud integration.

The decision to migrate COBOL to Java should be driven by a rigorous cost/risk analysis, not by a belief that older means inferior. The $3 trillion that flows through COBOL systems every day is the market's verdict on its reliability. If you are entering the mainframe field, learning COBOL is a career advantage — not a compromise.

For a comprehensive foundation in COBOL programming, the COBOL Mastery course covers everything from your first program through production mainframe patterns.

Frequently Asked Questions

Q: What are the main technical reasons COBOL has not been replaced by Java in banking?

COBOL's longevity in banking is driven by three factors. First, mainframe COBOL batch throughput — processing millions of records per hour using VSAM and DB2 — has no Java equivalent at comparable cost per transaction on commodity hardware. Second, COBOL's fixed-point decimal arithmetic (COMP-3) avoids floating-point rounding errors that are unacceptable in financial calculations; Java's BigDecimal achieves the same correctness but at significantly higher CPU cost. Third, the embedded business logic in existing COBOL systems represents decades of encoded regulatory and financial rules — rewriting it faithfully is harder and riskier than maintaining it.

Q: What is the "strangler fig" pattern and how does it apply to COBOL modernisation?

The strangler fig pattern incrementally replaces a legacy system by routing specific transactions to new implementations while the original system continues handling everything else. In COBOL modernisation, this typically means exposing COBOL programs as APIs via z/OS Connect or wrapping them with Java facades, allowing new channels (mobile apps, web portals) to call existing COBOL logic without full rewriting. Over time, individual COBOL programs are replaced function by function. This avoids the big-bang rewrite risk while progressively modernising the system and allowing teams to build Java expertise on lower-risk components first.

Q: When is rewriting COBOL to Java actually the right decision?

Rewriting makes sense when: the COBOL system is genuinely at end of life (hardware or OS no longer supported), the business domain has changed so fundamentally that the existing code is a liability rather than an asset, the organisation is willing to invest substantial budget (often hundreds of millions for large banks) and accept multi-year timelines, and there is institutional knowledge available to validate the rewrite against the original behaviour. Most rewrite decisions that go wrong do so because they underestimate the business logic complexity embedded in the COBOL and overestimate the speed at which it can be faithfully reproduced.
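Validating a rewrite against the original behaviour usually means replaying recorded inputs through the new code and comparing the results with what the COBOL system produced. A minimal sketch of such a parity check follows, with a hypothetical `ParityCheck` helper and string inputs and outputs standing in for real records:

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.Objects;
import java.util.function.Function;

final class ParityCheck {
    // Replays each recorded input through the candidate implementation and
    // returns the inputs whose output differs from the recorded legacy output.
    static <I, O> List<I> mismatches(Map<I, O> recordedLegacyOutputs, Function<I, O> candidate) {
        List<I> failures = new ArrayList<>();
        for (Map.Entry<I, O> entry : recordedLegacyOutputs.entrySet()) {
            O actual = candidate.apply(entry.getKey());
            if (!Objects.equals(entry.getValue(), actual)) {
                failures.add(entry.getKey());
            }
        }
        return failures;
    }
}
```

An empty result over a large, representative sample of production inputs is evidence that the rewrite is faithful; a non-empty one gives you the exact cases to investigate. The hard part is not the comparison but obtaining recordings that cover the rare paths, such as year-end processing or rules that apply only to very old accounts.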
