Project: Building a High-Performance In-Memory Key-Value Store (TopicKV) - Phase 2 Capstone

Project: Building a High-Performance In-Memory Key-Value Store (TopicKV) - Phase 2 Capstone

Table of Contents

- Architecture: Sharded Store Design

- Core Data Model: Entry with TTL

- Sharded Storage for Scalability

- Thread-Safe GET with std::optional

- Thread-Safe SET with RAII

- TTL Expiry and Lazy Deletion

- LRU Eviction Policy

- Command-Line REPL with std::format

- Statistics and Metrics

- Performance Benchmarks

- Extension Challenges

- Phase 2 Reflection

Architecture: Sharded Store Design

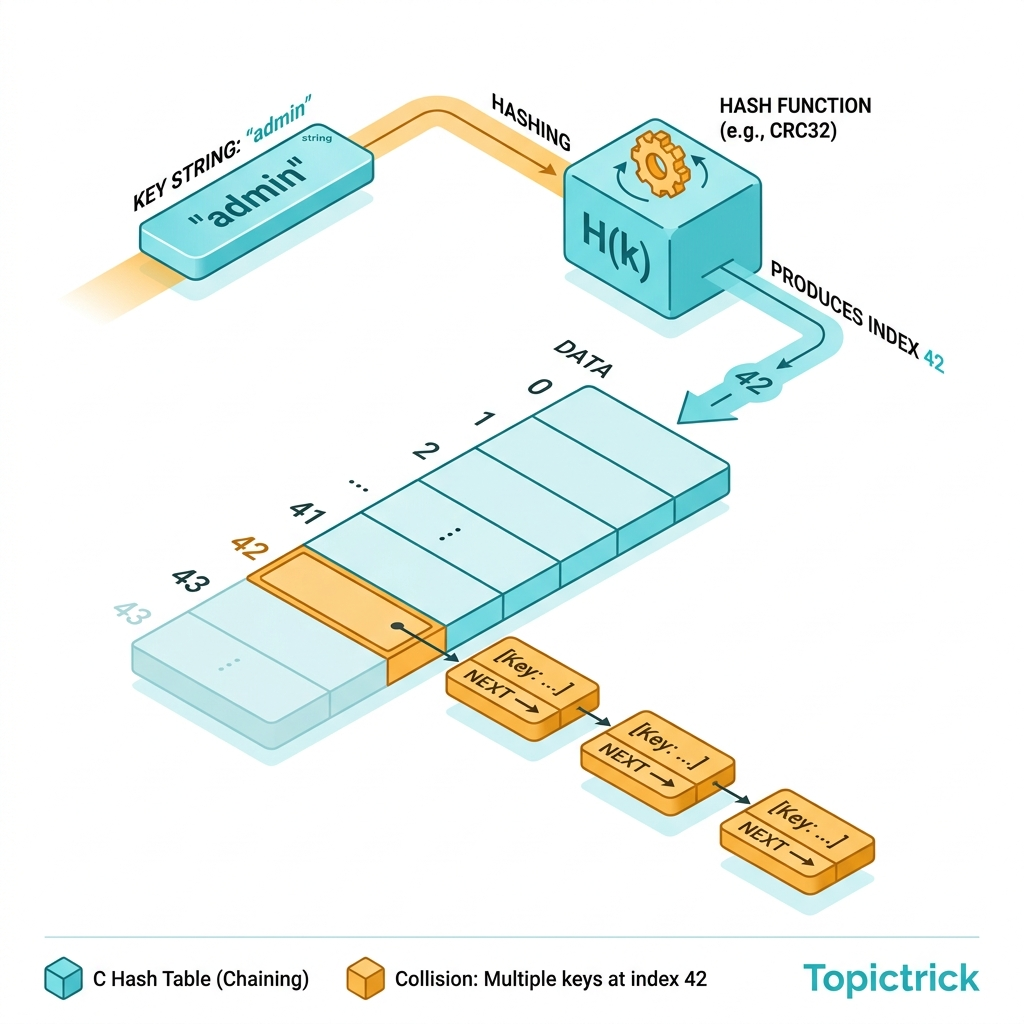

To avoid a single global lock bottlenecking all operations, TopicKV uses sharding - 16 independent sub-maps each with their own mutex:

Core Data Model: Entry with TTL

// include/topickv.hpp

#pragma once

#include <string>

#include <string_view>

#include <optional>

#include <memory>

#include <chrono>

#include <unordered_map>

#include <shared_mutex>

#include <array>

#include <atomic>

using Clock = std::chrono::steady_clock;

using TimePoint = std::chrono::time_point<Clock>;

using Duration = std::chrono::milliseconds;

// Internal entry - owns the value and tracks TTL:

struct Entry {

std::shared_ptr<std::string> value; // Shared ownership for snapshot reads

std::optional<TimePoint> expires_at; // nullopt = no expiry

TimePoint last_access; // For LRU tracking

explicit Entry(std::string val, std::optional<Duration> ttl = std::nullopt)

: value(std::make_shared<std::string>(std::move(val)))

, last_access(Clock::now())

{

if (ttl) expires_at = Clock::now() + *ttl;

}

bool is_expired() const {

if (!expires_at) return false;

return Clock::now() >= *expires_at;

}

void touch() { last_access = Clock::now(); }

};Sharded Storage for Scalability

class TopicKV {

static constexpr size_t SHARD_COUNT = 16;

static constexpr size_t MAX_ENTRIES = 10'000; // Per shard

struct Shard {

mutable std::shared_mutex mtx;

std::unordered_map<std::string, Entry> data;

};

std::array<Shard, SHARD_COUNT> shards_;

std::atomic<size_t> total_gets_{0};

std::atomic<size_t> total_sets_{0};

std::atomic<size_t> cache_hits_{0};

std::atomic<size_t> cache_misses_{0};

// Determine which shard owns a key:

Shard& shard_for(std::string_view key) {

size_t h = std::hash<std::string_view>{}(key);

return shards_[h % SHARD_COUNT];

}

const Shard& shard_for(std::string_view key) const {

size_t h = std::hash<std::string_view>{}(key);

return shards_[h % SHARD_COUNT];

}Thread-Safe GET with std::optional

public:

// GET: returns shared_ptr for snapshot safety (value stays valid even if key deleted)

std::optional<std::shared_ptr<std::string>> get(std::string_view key) {

auto& shard = shard_for(key);

std::shared_lock lock(shard.mtx); // Multiple concurrent readers OK

total_gets_.fetch_add(1, std::memory_order_relaxed);

auto it = shard.data.find(std::string(key));

if (it == shard.data.end()) {

cache_misses_.fetch_add(1, std::memory_order_relaxed);

return std::nullopt; // Key not found

}

// Lazy TTL check:

if (it->second.is_expired()) {

// Can't erase under shared_lock - caller should call del() or GC handles it

cache_misses_.fetch_add(1, std::memory_order_relaxed);

return std::nullopt; // Pretend it's gone

}

it->second.touch(); // Mark LRU access time

cache_hits_.fetch_add(1, std::memory_order_relaxed);

return it->second.value; // Return shared_ptr - safe even after shard unlock

}

// GET with fallback value:

std::string get_or(std::string_view key, std::string_view fallback) {

auto result = get(key);

return result ? **result : std::string(fallback);

}Thread-Safe SET with RAII

// SET: insert or update, with optional TTL

void set(std::string_view key, std::string value,

std::optional<Duration> ttl = std::nullopt) {

auto& shard = shard_for(key);

std::unique_lock lock(shard.mtx); // Exclusive write access

total_sets_.fetch_add(1, std::memory_order_relaxed);

// Evict if at capacity:

if (shard.data.size() >= MAX_ENTRIES) {

evict_lru(shard);

}

// Insert or update:

auto [it, inserted] = shard.data.try_emplace(

std::string(key),

std::move(value),

ttl

);

if (!inserted) {

// Key exists - update in-place:

it->second = Entry(std::move(value), ttl);

}

}

// DEL: remove a key:

bool del(std::string_view key) {

auto& shard = shard_for(key);

std::unique_lock lock(shard.mtx);

return shard.data.erase(std::string(key)) > 0;

}

// MSET: set multiple at once (atomically within each shard):

void mset(std::initializer_list<std::pair<std::string_view, std::string>> entries) {

for (auto& [key, value] : entries) set(key, value);

}TTL Expiry and Lazy Deletion

// Garbage collect all expired entries (call periodically):

size_t gc() {

size_t removed = 0;

for (auto& shard : shards_) {

std::unique_lock lock(shard.mtx);

auto it = shard.data.begin();

while (it != shard.data.end()) {

if (it->second.is_expired()) {

it = shard.data.erase(it);

removed++;

} else {

++it;

}

}

}

return removed;

}LRU Eviction Policy

private:

// Evict the least-recently-used entry from a shard (must hold unique_lock):

static void evict_lru(Shard& shard) {

if (shard.data.empty()) return;

// Find the entry with the oldest last_access:

auto oldest = std::ranges::min_element(shard.data, {},

[](const auto& entry) { return entry.second.last_access; });

if (oldest != shard.data.end()) {

shard.data.erase(oldest);

}

}Command-Line REPL with std::format

// src/repl.cpp

#include <print>

#include <string>

#include <sstream>

#include "topickv.hpp"

void run_repl(TopicKV& store) {

std::println("TopicKV v1.0 - type HELP for commands");

std::string line;

while (std::print("> "), std::getline(std::cin, line)) {

std::istringstream iss(line);

std::string cmd;

iss >> cmd;

if (cmd == "SET") {

std::string key, val;

iss >> key >> val;

store.set(key, val);

std::println("OK");

} else if (cmd == "SETEX") { // SET with TTL

std::string key, val;

int ms;

iss >> key >> ms >> val;

store.set(key, val, std::chrono::milliseconds(ms));

std::println("OK (expires in {}ms)", ms);

} else if (cmd == "GET") {

std::string key;

iss >> key;

auto result = store.get(key);

result ? std::println("{}", **result) : std::println("(nil)");

} else if (cmd == "DEL") {

std::string key;

iss >> key;

std::println("{}", store.del(key) ? "1" : "0");

} else if (cmd == "GC") {

std::println("Removed {} expired entries", store.gc());

} else if (cmd == "STATS") {

auto [gets, sets, hits, misses] = store.stats();

std::println("GETs: {} | SETs: {} | Hits: {} | Misses: {} | Rate: {:.1f}%",

gets, sets, hits, misses,

gets > 0 ? 100.0 * hits / gets : 0.0);

} else if (cmd == "QUIT" || cmd == "EXIT") {

break;

} else {

std::println("Unknown command. Commands: SET SETEX GET DEL GC STATS QUIT");

}

}

}Extension Challenges

- Persistence: Serialize the entire store to a binary file on SIGTERM (use

std::ofstream+std::bit_castfor POD types) - Pub/Sub: Add a

SUBSCRIBE key/PUBLISH key msgcommand usingstd::condition_variableand astd::deque<std::string>channel - Transactions: Implement

MULTI/EXECby buffering commands in astd::vector<Command>and applying atomically - Network interface: Wrap the store in a TCP server using

std::jthread(from Module 14) - one thread per connection using the REPL dispatch logic

Phase 2 Reflection

| Phase 2 Concept | Used In TopicKV |

|---|---|

std::unordered_map (Module 10) | Core key-value storage per shard |

std::shared_ptr (Module 3) | Entry value - snapshot safety |

std::shared_mutex (Module 14) | Concurrent reader/writer safety |

std::optional (Module 6) | GET return type - explicit "not found" |

std::chrono | TTL timestamps, expiry comparison |

| RAII locks (Module 8) | shared_lock / unique_lock auto-release |

std::atomic (Module 14) | Lock-free statistics counters |

std::ranges::min_element (Module 15) | LRU eviction algorithm |

Proceed to Phase 3: Inheritance & Composition ->

Frequently Asked Questions

Q: What C++ data structures are best suited for building an in-memory key-value store?

std::unordered_map<Key, Value> provides O(1) average-case lookup and is the natural choice for a hash-based key-value store. For ordered range queries, std::map (red-black tree, O(log n)) is better. For a concurrent store, wrap with std::shared_mutex for read-heavy workloads or use a sharded approach (multiple maps each with their own lock) to reduce contention. For extremely high throughput, consider lock-free hash maps from libraries like libcuckoo or Junction.

Q: How do you implement expiry (TTL) for keys in a C++ in-memory store?

Store the expiry timestamp alongside each value - std::chrono::steady_clock::time_point. On every read, check whether the current time exceeds the stored expiry and return "not found" if so (lazy expiration). For active cleanup, maintain a priority queue (std::priority_queue) sorted by expiry time and run a background thread that periodically pops and deletes expired keys. This two-pronged approach (lazy + active) balances memory usage and read latency.

Q: How does an in-memory datastore project demonstrate important C++ concepts?

It exercises RAII for resource management (automatic cleanup of allocated entries), templates and type erasure for storing heterogeneous value types, concurrency primitives (std::mutex, std::shared_mutex, std::condition_variable) for thread safety, move semantics for zero-copy value insertion, and std::chrono for TTL management. It also naturally motivates benchmarking with std::chrono::high_resolution_clock and profiling to understand cache behaviour - making it an excellent capstone project for systems-level C++ skills.

Part of the C++ Mastery Course - 30 modules from modern C++ basics to expert systems engineering.