Go Goroutines Explained: Concurrency for Beginners

Go Goroutines: The Hardware-Mirror of Concurrency

A goroutine is a lightweight, concurrent function managed by the Go runtime rather than the operating system. Starting a goroutine requires only the `go` keyword before a function call. A new goroutine begins with approximately 2KB of stack memory, compared to 1MB or more reserved for a typical OS thread. Go applications routinely run tens of thousands of goroutines simultaneously, making Go well suited to highly concurrent servers, pipelines, and microservices.

Concurrency 1: Goroutines & The Scheduler

Concurrency is Go's "killer feature." Other languages make you wrestle with complex thread pools, heavy per-thread memory overhead, or a single-threaded event loop. Go makes high-performance concurrent programming as easy as typing two letters: `go`.

In this module, we will explore the Goroutine: a lightweight execution thread managed entirely by the Go runtime rather than the operating system.


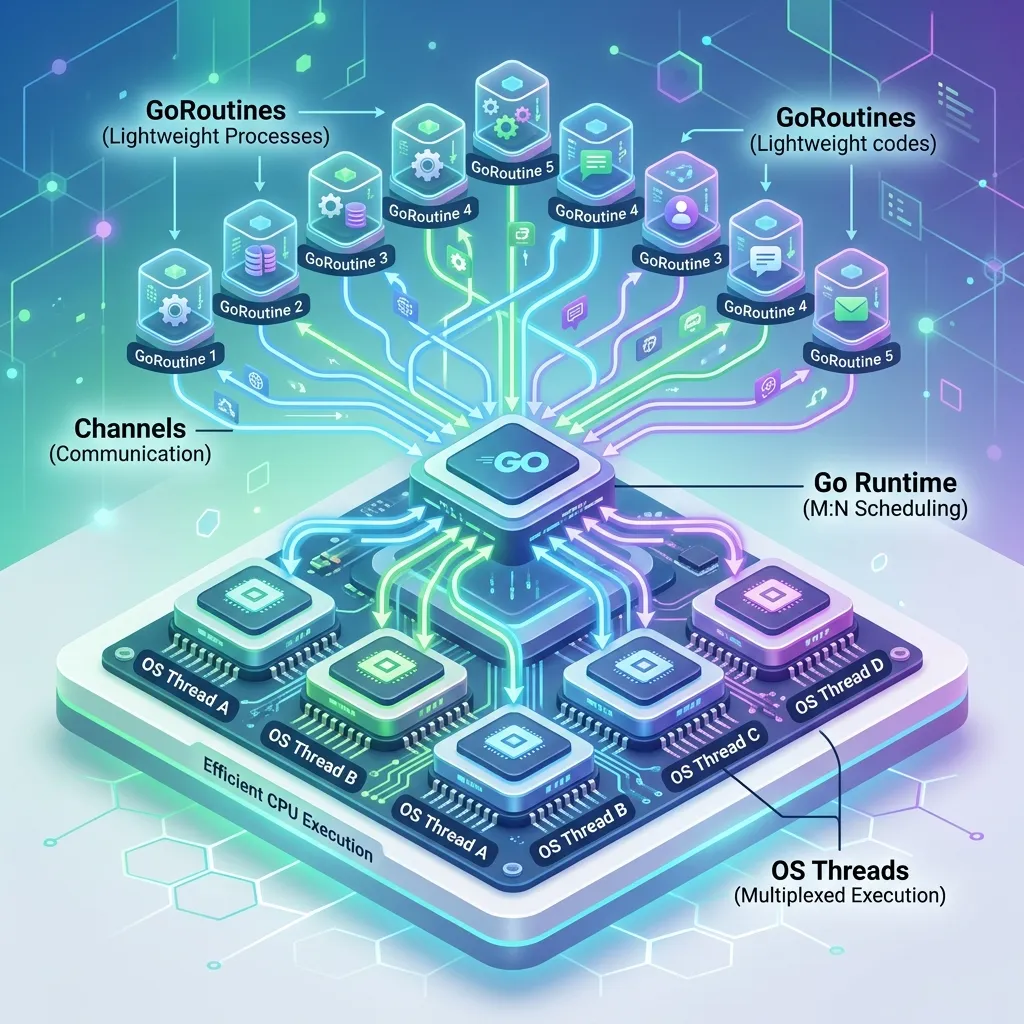

## 1. The Scheduler Mirror: M:P:G Physics

To understand Goroutines, you must understand how the Go Runtime maps code to silicon. This is done through the M:P:G Model.

### The Flow Physics

- **G (Goroutine)**: This is your code (the function you ran with `go`). It holds its own stack and its saved execution state, such as the instruction pointer.

- **M (Machine)**: This is an OS thread managed by the kernel. It is the actual worker that executes code on a CPU core.

- **P (Processor)**: This is a virtual resource (a "context") that represents the right to execute Go code. Each P keeps a local run queue of Gs, and an M must hold a P to run Go code. The number of Ps equals `GOMAXPROCS`, which defaults to the number of logical CPU cores.

**The Work-Stealing Mirror**: Suppose one Processor (P) runs out of Goroutines (G) in its local queue. It first checks the global run queue, then "steals" half of the runnable work from another Processor's queue. This keeps every CPU core busy as long as there is runnable work anywhere in the program, maximizing throughput across the execution mirror.
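You can inspect these numbers on your own machine by asking the runtime. Below is a minimal sketch. `schedulerInfo` is an illustrative helper. `runtime.NumCPU`, `runtime.GOMAXPROCS`, and `runtime.NumGoroutine` are standard library functions. The values printed depend on your hardware and environment.

```go
package main

import (
	"fmt"
	"runtime"
)

// schedulerInfo returns the numbers that shape the M:P:G model on this machine.
func schedulerInfo() (cpus, procs, goroutines int) {
	cpus = runtime.NumCPU()             // logical CPUs usable by this process
	procs = runtime.GOMAXPROCS(0)       // current number of Ps; an argument of 0 only queries it
	goroutines = runtime.NumGoroutine() // Gs that currently exist
	return cpus, procs, goroutines
}

func main() {
	cpus, procs, gs := schedulerInfo()
	fmt.Printf("CPUs: %d, Ps (GOMAXPROCS): %d, goroutines: %d\n", cpus, procs, gs)
}
```

On an unconfigured machine the first two numbers usually match. If they differ, something (such as the `GOMAXPROCS` environment variable) has changed the number of Ps.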

## 2. What is a Goroutine?

A Goroutine is a function executing concurrently with other Goroutines in the same address space. They are incredibly "cheap": a typical Goroutine starts with only 2KB of stack space, which the runtime grows and shrinks as needed. You can run hundreds of thousands of them on a single laptop, provided each one is mostly idle and holds little data of its own.

### Launching Your First Goroutine

To start a Goroutine, simply prefix a function call with the keyword `go`.

```go
package main

import (
	"fmt"
	"time"
)

func sayHello() {
	fmt.Println("Hello from a Goroutine!")
}

func main() {
	// This starts a new Goroutine running sayHello
	go sayHello()

	// The main function continues immediately, without waiting
	fmt.Println("Hello from Main!")

	// We need a small sleep here to see the output because
	// if main exits, all other goroutines are terminated instantly.
	// A sleep only makes the output likely, not guaranteed; real code
	// waits with a sync.WaitGroup or a channel instead.
	time.Sleep(100 * time.Millisecond)
}
```

---

## 3. The Stack Mirror: 2KB to ~1GB

Goroutines are tiny because they use **Contiguous Stacks** that grow and shrink dynamically.

### The Allocation Physics

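- **The Starting Point**: Every Goroutine starts with a 2KB stack. That is why the stacks of roughly 500,000 Goroutines fit into 1GB of RAM (1 GiB / 2 KiB = 524,288), before counting the runtime's small per-goroutine bookkeeping.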

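- **The Growth Mirror**: When a Goroutine runs out of space, the Go runtime allocates a new stack block twice the size. It "mirrors" (copies) the existing frames into the new block and updates every pointer that referred to the old stack. The garbage collector can later shrink a stack that is mostly unused. By default a Goroutine stack can grow to about 1GB on 64-bit systems, and exceeding that limit is a fatal "stack overflow" error.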

- **The OS Thread Contrast**: Traditional OS threads reserve a fixed stack upfront, typically 1MB to 8MB depending on the operating system. This is "wasteful silicon": address space and memory set aside just in case the thread needs it.
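Deep recursion inside a Goroutine is a quick way to watch contiguous stacks grow. In this sketch, `depth` and `depthInGoroutine` are illustrative helpers, not library functions. The code recurses 100,000 levels, which needs far more than the initial 2KB. The runtime copies the stack to larger blocks as the calls pile up, and no stack size has to be configured up front.

```go
package main

import "fmt"

// depth recurses n levels. Go does not eliminate tail calls, and the
// addition after the recursive call keeps every frame live, so the stack grows.
func depth(n int) int {
	if n == 0 {
		return 0
	}
	return 1 + depth(n-1)
}

// depthInGoroutine runs depth on a fresh goroutine and waits for its result.
func depthInGoroutine(n int) int {
	result := make(chan int)
	go func() { result <- depth(n) }()
	return <-result
}

func main() {
	// 100,000 frames need far more than the goroutine's initial stack;
	// the runtime grows (copies) the stack transparently.
	fmt.Println(depthInGoroutine(100_000)) // prints 100000
}
```

Unbounded recursion would still eventually hit the maximum stack size and crash the program. Growth is automatic, not infinite.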

---

## 4. The Magic: The Go Scheduler

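The Go runtime includes its own scheduler that employs an **M:N scheduling** technique: it multiplexes $M$ Goroutines onto $N$ OS threads, where $M$ is usually far larger than $N$. (This $M$ is just a count of Goroutines, not the M, or Machine, of the M:P:G model.)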

The scheduler uses a strategy called "Work Stealing" to minimize the time any CPU core sits idle while others have a backlog. This efficient, low-overhead scheduling is one reason Go is widely used for cloud-native infrastructure such as Docker, Kubernetes, and Terraform.

### The Fork-Join Model

When you use the `go` keyword, you are "forking" a new branch of execution. However, the `go` keyword doesn't provide a way to "join" or wait for that execution to finish. To do that safely, we need synchronization tools like Channels or WaitGroups (which we will cover in the next modules).
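Channels get a full treatment in the next module, but a minimal fork-join sketch shows the shape now. The `go` statements fork the work, and receiving from a channel is the join point. `sumRange` and `forkJoinSum` are illustrative helpers chosen for this example.

```go
package main

import "fmt"

// sumRange adds the integers in [lo, hi).
func sumRange(lo, hi int) int {
	total := 0
	for i := lo; i < hi; i++ {
		total += i
	}
	return total
}

// forkJoinSum splits [0, n) into two halves, sums each half on its own
// goroutine (fork), then receives both partial sums (join).
func forkJoinSum(n int) int {
	results := make(chan int, 2) // buffered so neither goroutine blocks on send
	mid := n / 2

	go func() { results <- sumRange(0, mid) }() // fork #1
	go func() { results <- sumRange(mid, n) }() // fork #2

	return <-results + <-results // join: wait for both halves
}

func main() {
	fmt.Println(forkJoinSum(1000)) // prints 499500
}
```

The two receives make `forkJoinSum` wait until both goroutines have finished. That waiting is exactly the "join" that the bare `go` keyword cannot provide.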

<DataGrid

title="The Power of Goroutines"

items={[

{ title: "Lightweight", example: "~2KB stack", description: "Unlike OS threads, which typically reserve 1MB or more of stack, Goroutines allow for massive density on minimal hardware." },

{ title: "Fast Context Switches", example: "User-space scheduling", description: "Because the Go runtime schedules Goroutines in user space, switching between them avoids a kernel transition and is typically much cheaper than an OS thread context switch." },

{ title: "Dynamic Stacks", example: "Self-governing memory", description: "Goroutine stacks grow and shrink as needed, so deep call chains rarely overflow (the default limit is about 1GB on 64-bit systems) while idle Goroutines stay small." }

]}

/>

<ComparisonTable

labelA="OS Threads"

labelB="Go Goroutines"

data={[

{ feature: "Memory Cost", toolA: "High (~1MB)", toolB: "Low (~2KB)" },

{ feature: "Creation Time", toolA: "Slow (System call)", toolB: "Fast (Runtime allocation)" },

{ feature: "Management", toolA: "Kernel/OS Scheduler", toolB: "Go Runtime Scheduler" },

{ feature: "Quantity", toolA: "Hundreds or Thousands", toolB: "Millions" }

]}

/>

---

## Goroutines in Practice: HTTP Server Example

The standard library's `net/http` server starts a new goroutine for each incoming connection and runs your handlers on it, so requests are served concurrently without any extra code. A Go server handling 10,000 simultaneous connections therefore runs roughly 10,000 goroutines. With one OS thread per connection, that would be impractical.
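You can observe this with the standard `net/http/httptest` package. In this sketch the handler sleeps to simulate slow I/O and records how many requests are in flight at once. The 50ms delay, the request count, and the `peakConcurrency` helper are assumptions for illustration. `atomic.Int64` requires Go 1.19 or later. If handlers ran one at a time the peak would stay at 1. With a goroutine per connection it should be well above 1, though the exact figure varies.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
	"sync"
	"sync/atomic"
	"time"
)

// peakConcurrency fires n simultaneous requests at a test server and returns
// the largest number of handler invocations that were running at once.
func peakConcurrency(n int) int64 {
	var inFlight, peak atomic.Int64

	handler := http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		cur := inFlight.Add(1)
		defer inFlight.Add(-1)
		// Record a new peak with a compare-and-swap loop.
		for {
			old := peak.Load()
			if cur <= old || peak.CompareAndSwap(old, cur) {
				break
			}
		}
		time.Sleep(50 * time.Millisecond) // simulate slow I/O
		fmt.Fprintln(w, "ok")
	})

	srv := httptest.NewServer(handler)
	defer srv.Close()

	var wg sync.WaitGroup
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			resp, err := http.Get(srv.URL)
			if err != nil {
				return
			}
			resp.Body.Close()
		}()
	}
	wg.Wait()
	return peak.Load()
}

func main() {
	fmt.Println("peak concurrent handlers:", peakConcurrency(20))
}
```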

Here is a simple demonstration of launching goroutines to process tasks concurrently:

```go
package main

import (
	"fmt"
	"sync"
	"time"
)

func processOrder(orderID int, wg *sync.WaitGroup) {
	defer wg.Done()
	fmt.Printf("Processing order %d...\n", orderID)
	time.Sleep(100 * time.Millisecond) // Simulate work
	fmt.Printf("Order %d complete.\n", orderID)
}

func main() {
	var wg sync.WaitGroup

	// Process 100 orders concurrently
	for i := 1; i <= 100; i++ {
		wg.Add(1)
		go processOrder(i, &wg)
	}

	wg.Wait() // Block until all orders are processed
	fmt.Println("All orders processed.")
}
```

Without goroutines, processing 100 orders sequentially at 100ms each would take 10 seconds. With goroutines, all 100 complete in a little over 100ms, because the simulated work is waiting rather than computing. The order of the printed lines varies from run to run.

## Detecting Race Conditions

Go ships with a built-in race detector. Run your code or tests with the `-race` flag to catch data races during development:

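```sh
go run -race main.go
go test -race ./...
```

The race detector instruments your code to detect when two goroutines access the same memory location concurrently, with at least one write, without proper synchronisation. It only reports races that actually occur during the run, so it works best alongside good test coverage. It adds significant CPU and memory overhead, so use it during testing and development, not in production binaries.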

A common race condition looks like this:

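```go
package main

import "fmt"

func main() {
	// RACE CONDITION: two goroutines writing to the same variable
	counter := 0
	go func() { counter++ }()
	go func() { counter++ }()

	// Final value of counter is unpredictable: main may print 0, 1, or 2,
	// and nothing even waits for the goroutines to finish.
	fmt.Println(counter)
}
```

The fix is to use either a `sync.Mutex` to protect the shared variable or a channel to coordinate access.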
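Here is a minimal corrected sketch using the `sync.Mutex` option, plus a `sync.WaitGroup` so the caller actually waits for every writer (the racy version never waits). `incrementConcurrently` is an illustrative helper name.

```go
package main

import (
	"fmt"
	"sync"
)

// incrementConcurrently starts n goroutines that each add 1 to a shared
// counter. The mutex serialises the writes, so the result is always n.
func incrementConcurrently(n int) int {
	var (
		mu      sync.Mutex
		wg      sync.WaitGroup
		counter int
	)
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			mu.Lock()
			counter++
			mu.Unlock()
		}()
	}
	wg.Wait() // every increment happens before Wait returns
	return counter
}

func main() {
	fmt.Println(incrementConcurrently(2)) // prints 2
}
```

Running this with `go run -race` should report no race. For a lone counter, `sync/atomic` (for example `atomic.Int64`) is a lighter alternative. The mutex version generalises to larger shared structures.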

## Anonymous Goroutines

A very common Go pattern is launching an anonymous function as a goroutine. This is useful for fire-and-forget background tasks:

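```go
go func() {
	// This runs concurrently
	// (sendWelcomeEmail and userID are assumed to be defined elsewhere)
	sendWelcomeEmail(userID)
}()
```

Be careful with closures and goroutines. Variables captured by a goroutine closure must not be mutated by the main goroutine without synchronisation. Before Go 1.22, every iteration of a `for` loop shared one loop variable, so goroutines launched in a loop had to receive it as a function argument to avoid the classic closure-over-loop-variable bug. Since Go 1.22 (in modules that declare `go 1.22` or later in `go.mod`), each iteration gets its own variable. Passing the argument still works on every version: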

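```go
package main

import (
	"fmt"
	"sync"
)

func main() {
	var wg sync.WaitGroup

	// WRONG before Go 1.22: all goroutines shared one i and might all print 10.
	// In modules declaring Go 1.22 or later, each iteration has its own i.
	for i := 0; i < 10; i++ {
		wg.Add(1)
		go func() { defer wg.Done(); fmt.Println(i) }()
	}

	// CORRECT on every Go version: each goroutine gets its own copy
	for i := 0; i < 10; i++ {
		wg.Add(1)
		go func(n int) { defer wg.Done(); fmt.Println(n) }(i)
	}

	wg.Wait() // wait so the output appears before main exits
}
```

## Why Go's Concurrency Model Matters for Production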

Goroutines are a large part of why companies such as Cloudflare, Uber, Dropbox, and Docker rely heavily on Go. A Node.js server runs its JavaScript on a single-threaded event loop, so one slow, CPU-heavy callback delays every other request. A Python server using threads pays for a full OS thread, with its own stack, per concurrent request. It also relies on the OS scheduler to switch between those threads, which adds significant overhead.

Go's goroutine model hits the sweet spot: true parallelism across multiple CPU cores (unlike Node.js) with the lightweight scheduling overhead of a green thread system (unlike OS threads). The result is servers that can handle extraordinary concurrency with predictable, low latency.

## Further Reading

This is the first module in the Go concurrency series. Continue with the module on Go channels and communication, and then the one on select statements and WaitGroups. For the broader context of what makes Go powerful, see the overview of what the Go programming language is.

## Phase 11: Concurrency Architecture Mastery Checklist

- **Verify GOMAXPROCS Alignment**: Ensure that your application uses all the CPU it is entitled to. Check that `runtime.GOMAXPROCS(0)` matches your hardware mirror: the available cores, or the CPU limit of the container you run in.

- **Audit Worker Pool Saturation**: Identify CPU-bound loops and run them on a bounded pool of roughly `runtime.NumCPU()` goroutines to avoid scheduler thrashing.

- **Implement Race Detection**: Always run `go test -race` in CI to detect unsynchronized memory access before the silicon state becomes corrupted.

- **Test Stack Pressure**: Identify deeply recursive functions that might trigger aggressive stack copying, and refactor them to use a heap-allocated slice as an explicit stack.

- **Use Goroutine Leak Detection**: Use tools like `goleak` to verify that all spawned goroutines exit cleanly when their parent context is cancelled.


## Next Steps

Goroutines are powerful, but they are dangerous if they can't communicate safely. If two Goroutines access the same variable at the same time and at least one of them writes to it, you get a race condition. That can lead to corrupted data or unpredictable crashes. In the next tutorial, we will explore Channels: the safe, elegant way for Goroutines to talk to each other.

## Common Goroutine Mistakes

### 1. Launching goroutines without a way to wait for them

`go doWork()` starts a goroutine and returns immediately. If `main()` exits, all goroutines are killed. Use a `sync.WaitGroup` to wait for goroutines to finish before the program exits.

### 2. Goroutine leaks

A goroutine blocked on a channel read, or waiting for a mutex that is never released, leaks forever. Always ensure goroutines have a way to exit: pass a `context.Context` and select on `ctx.Done()`. The Go blog on concurrency patterns covers leak-free patterns.

### 3. Race conditions on shared memory

Two goroutines accessing the same variable without synchronisation, where at least one of them writes, is a data race. Run `go test -race` or `go run -race` to detect races. Use `sync.Mutex` or channels to protect shared state.

### 4. Closing a channel from the receiver side

Only the sender should close a channel. If the receiver closes it, the sender's next send panics with "send on closed channel", and closing an already-closed channel also panics. Establish a clear ownership rule: the goroutine that creates and sends on a channel is responsible for closing it.
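Here is a minimal sketch of that ownership rule. `produce` and `consume` are illustrative names. The producer creates the channel, is its only sender, and closes it when finished. The consumer ranges until the channel is closed and never calls `close` itself.

```go
package main

import "fmt"

// produce owns the channel: it creates it, sends on it, and closes it.
func produce(n int) <-chan int {
	ch := make(chan int)
	go func() {
		defer close(ch) // only the sending side closes
		for i := 1; i <= n; i++ {
			ch <- i
		}
	}()
	return ch // receive-only: callers cannot send on it or close it
}

// consume reads until the producer closes the channel.
func consume(ch <-chan int) int {
	total := 0
	for v := range ch { // the loop ends once ch is closed and drained
		total += v
	}
	return total
}

func main() {
	fmt.Println(consume(produce(10))) // prints 55
}
```

Returning a receive-only `<-chan int` lets the compiler enforce the rule: calling `close` on a receive-only channel does not compile.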

### 5. Using goroutines for CPU-bound work without limiting parallelism

Only `GOMAXPROCS` goroutines can execute Go code at any moment, so spawning thousands of goroutines for CPU-bound tasks adds no extra parallelism. They simply compete for the same cores, adding scheduling overhead, cache pressure, and memory use. Use a worker pool pattern (a fixed number of goroutines reading from a shared job channel) to limit parallelism to `runtime.NumCPU()`.
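Here is a minimal worker-pool sketch. CPU-bound jobs are modeled by a hypothetical `square` function. A fixed number of workers (here `runtime.NumCPU()`) read from a shared jobs channel. Each result is stored at its input's index, so output order matches input order without extra locking.

```go
package main

import (
	"fmt"
	"runtime"
	"sync"
)

// square stands in for a CPU-bound task.
func square(x int) int { return x * x }

// runPool processes inputs with a fixed number of worker goroutines.
func runPool(inputs []int, workers int) []int {
	if workers < 1 {
		workers = 1 // at least one worker, or the sends below would block forever
	}
	type job struct{ idx, val int }
	jobs := make(chan job)
	results := make([]int, len(inputs)) // each index is written by exactly one worker

	var wg sync.WaitGroup
	for w := 0; w < workers; w++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for j := range jobs { // exits when jobs is closed
				results[j.idx] = square(j.val)
			}
		}()
	}

	for i, v := range inputs {
		jobs <- job{i, v}
	}
	close(jobs) // the sender closes the channel
	wg.Wait()
	return results
}

func main() {
	inputs := []int{1, 2, 3, 4, 5}
	fmt.Println(runPool(inputs, runtime.NumCPU())) // prints [1 4 9 16 25]
}
```

Goroutines writing to distinct slice elements do not race with each other. Reading `results` after `wg.Wait()` is safe because `Wait` returns only after every worker has finished.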

## Frequently Asked Questions

**How many goroutines can a Go program run simultaneously?**

Given enough memory, Go can comfortably keep millions of goroutines alive at once, although only `GOMAXPROCS` of them execute on a CPU at any instant. Each starts with a small stack (~2KB) that grows dynamically as needed. The Go runtime multiplexes goroutines onto OS threads using its M:N scheduler. See the Go runtime scheduler documentation for the mechanics.

**What is the difference between a goroutine and a thread?**

OS threads are managed by the kernel and typically have a fixed 1-8MB stack. Goroutines are managed by the Go runtime, start with ~2KB, and are much cheaper to create and context-switch. A typical Go server runs thousands of goroutines on a handful of OS threads.

**When should I use a goroutine vs a channel vs a mutex?**

Use goroutines to express concurrency. Use channels to communicate between goroutines and to transfer ownership of data. Use mutexes to protect shared state that multiple goroutines access. The Go proverb applies: "Do not communicate by sharing memory; instead, share memory by communicating."