HLASM Performance Optimisation Techniques

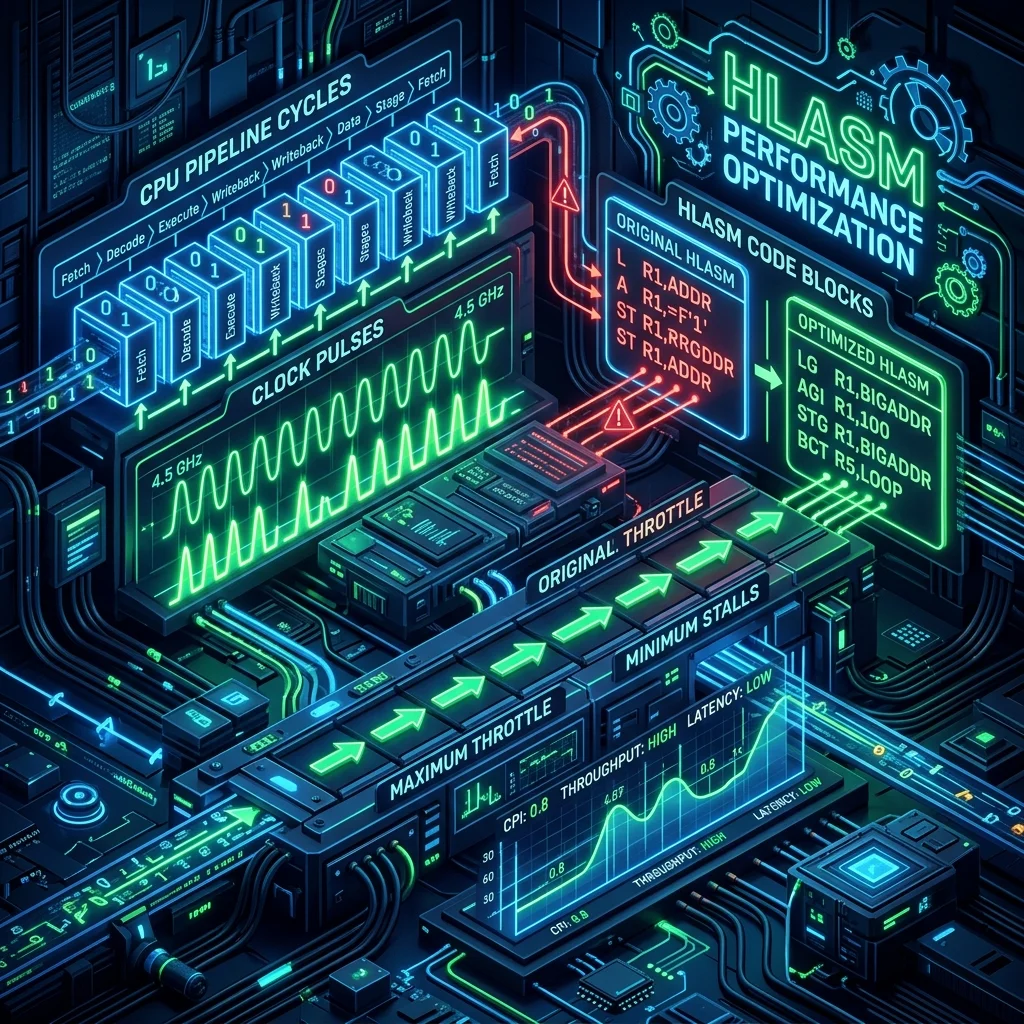

The primary reason to write HLASM over COBOL or Java is performance. This module covers the techniques that make assembler code fast: instruction selection, pipeline awareness, cache efficiency, and the EX instruction for variable-length operations.

Why HLASM Is Faster

HLASM gives you direct control over:

- Exact instruction selection (no compiler choices)

- Register allocation (no spilling to memory unnecessarily)

- Memory access patterns (cache-friendly layouts)

- Loop structure (counted loops with BCT, no hidden overhead)

- Instruction ordering (avoiding pipeline stalls)

A well-written HLASM routine for a hot code path can be 2-5x faster than equivalent optimised COBOL.

Instruction Selection

Choose the most efficient instruction for the task:

Zeroing a register:

SR 3,3 Fastest: single instruction, sets CC

XR 3,3 Also fast: XOR with self

LA 3,0 Slightly more overhead

L 3,=F'0' Avoid: extra memory access

Loading small constants:

LA 3,1 Fast: immediate, no memory

LA 3,255 Fast: fits in 12-bit displacement

L 3,=F'256' Avoid: literal fetched from memory (LA 3,256 or LHI 3,256 needs none)

Incrementing a register:

LA 3,1(3) Fast: no memory access; CC unchanged, result truncated to the address width in AMODE 24/31

A 3,=F'1' Slower: requires literal in memory

AHI 3,1 Fast alternative (Add Halfword Immediate); sets CC

Avoiding Unnecessary Memory Access

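Memory accesses (L, ST) that miss the cache can be orders of magnitude slower than register operations, and even cache hits add latency that register-to-register instructions avoid. Keep frequently-used values in registers: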

* Slow: reloading the same value in a loop

LOOP L 3,TOTAL Reload total every iteration (cache miss risk)

A 3,0(4)

ST 3,TOTAL Store back every iteration

LA 4,4(4)

BCT 5,LOOP

* Fast: keep running total in register

L 3,TOTAL Load once before loop

LOOP A 3,0(4) Accumulate in register (TOTAL is neither reloaded nor stored)

LA 4,4(4)

BCT 5,LOOP

ST 3,TOTAL Store once after loop

Cache-Friendly Data Layouts

Modern z/Architecture processors have L1, L2, and L3 caches. Accessing memory sequentially (stride-1) maximises cache hits:

* Good: sequential access (cache-friendly)

LA 4,TABLE Point to start

LOOP L 3,0(4) Load each element sequentially

* ... process ...

LA 4,4(4) Move to next (sequential)

BCT 5,LOOP

* Bad: strided access (cache-unfriendly for large strides)

LA 4,TABLE

LOOP L 3,0(4) Load every Nth element

LA 4,128(4) Jump 128 bytes (may cause cache miss)

BCT 5,LOOP

BCT vs Branch+Compare for Loops

BCT (Branch on Count) combines decrement and branch in one instruction:

* Using BCT (preferred for simple counted loops)

LA 5,100 Loop 100 times

LOOP DS 0H

* ... loop body ...

BCT 5,LOOP Decrement and branch if not zero

* Using BRCT (Branch Relative on Count - branch target needs no base register)

LA 5,100

LOOP DS 0H

* ... body ...

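BRCT 5,LOOP Relative branch - target encoded as a signed halfword offset

BRCT (Branch Relative on Count) encodes the target as an offset relative to the instruction itself, so the branch needs no base register and no base-displacement address calculation. Any speed advantage over BCT in tight loops is small and model-dependent. Both instructions decrement before testing, so a count of zero on entry wraps around instead of skipping the loop.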
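The counted-loop pattern can be put together into a small self-contained routine. The following is a minimal sketch, not a definitive implementation: the names SUMTAB, TABLE, COUNT, and TOTAL and the sample data are hypothetical, and it assumes standard z/OS linkage (R13 points to a caller save area, R15 holds the entry address). It keeps the running total in a register, walks the table sequentially, and guards against a zero count before entering the BRCT loop.

```hlasm
SUMTAB   CSECT
         STM   14,12,12(13)       Save caller registers
         LR    12,15              R15 = entry address, use as base
         USING SUMTAB,12
         LA    4,TABLE            R4 -> first fullword
         LHI   5,COUNT            R5 = loop count
         SR    3,3                R3 = running total (register only)
         LTR   5,5                Guard: a zero count would wrap
         BNP   DONE               Skip the loop for count <= 0
LOOP     A     3,0(,4)            Add next element, stride-1 access
         LA    4,4(,4)            Advance to the next fullword
         BRCT  5,LOOP             Decrement R5, loop while non-zero
DONE     ST    3,TOTAL            Single store after the loop
         LM    14,12,12(13)       Restore caller registers
         SR    15,15              Return code 0
         BR    14
TABLE    DC    F'10,20,30,40'     Hypothetical sample data
COUNT    EQU   (*-TABLE)/4        Number of fullwords in TABLE
TOTAL    DS    F                  Result (non-reentrant sketch)
         END   SUMTAB
```

With the sample data shown, TOTAL holds 100 on return. Storing into the CSECT keeps the sketch short but makes it non-reentrant; a production routine would normally store into caller-supplied or dynamically acquired storage.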

The EX Instruction

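EX reg,instruction executes a single target instruction after ORing the low-order byte of the register into the second byte of that instruction, which is the length field of an SS instruction such as MVC or CLC. The target in storage is not changed, and if the register operand is register 0 nothing is ORed in. With a template whose length field is 0, this enables variable-length operations without loops: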

* Move variable-length data (length-1 in register)

LA 3,0(5) reg 3 = reg 5, assumed to hold length - 1 (MVC moves length code + 1 bytes)

EX 3,MOVEINS Execute MVC with variable length

B SKIPMVC

MOVEINS MVC TARGET(0),SOURCE Template: length field = 0 (low byte of reg 3 is ORed in by EX)

SKIPMVC DS 0H

* Compare variable-length data

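LA 3,0(5) reg 3 = reg 5, assumed to hold length - 1

EX 3,CLCINS Execute CLC with variable length; CLC sets the CC

BE EQUAL

B NOTEQ Not equal: skip the template (NOTEQ is a hypothetical label)

CLCINS CLC FIELD1(0),FIELD2 Template: length field = 0

EX is usually the most efficient way to handle variable-length SS operations of up to 256 bytes without a loop. After the target executes, control resumes at the instruction following EX (EX+4, since EX is a 4-byte RX instruction) unless the target is a taken branch. The template should never be reached by fall-through: branch around it, or place it out of line with the constants.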
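A variable-length move with EX can be sketched as a complete routine. This is an illustrative sketch under stated assumptions: VARMOVE, SOURCE, SRCLEN, and TARGET are hypothetical names, the byte count is taken from an assembly-time constant rather than a real input, and standard z/OS linkage is assumed. It validates the count, converts it to the MVC length code, and keeps the template out of line so no branch around it is needed.

```hlasm
VARMOVE  CSECT
         STM   14,12,12(13)       Save caller registers
         LR    12,15              R15 = entry address, use as base
         USING VARMOVE,12
         LHI   3,SRCLEN           R3 = byte count (hypothetical input)
         LTR   3,3                Test the count
         BNP   EXIT               Nothing to move for count <= 0
         CHI   3,256              One MVC moves at most 256 bytes
         BH    EXIT               Longer data: use MVCL or a loop
         BCTR  3,0                R3 = count - 1 = MVC length code
         EX    3,MOVEINS          Length code ORed into the template
EXIT     LM    14,12,12(13)       EX resumes here; restore registers
         SR    15,15              Return code 0
         BR    14
MOVEINS  MVC   TARGET(0),SOURCE   Template, out of line with the data
SOURCE   DC    C'HELLO, HLASM'    Hypothetical source field
SRCLEN   EQU   *-SOURCE           Its length in bytes
TARGET   DS    CL256              Destination (non-reentrant sketch)
         END   VARMOVE
```

The range check matters because only the low byte of the register is ORed in: a count above 256 would silently move a wrong, shorter length instead of failing.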

MVCL vs MVC Loop vs EX

| Method | Best for |
|---|---|
| MVC (256 bytes max) | Fixed-length ≤ 256 bytes |
| EX with MVC template | Variable-length ≤ 256 bytes |
| MVCL | Long moves > 256 bytes |
| Loop with MVC | When pattern is needed (padding) |
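MVCL takes its operands in two even-odd register pairs, which is easy to get wrong. The sketch below shows one way to set them up; LONGMOVE, SOURCE, TARGET, BLANK, and the sizes are hypothetical, and standard z/OS linkage is assumed. Each even register holds an address and the following odd register a length, with the pad byte in the high-order byte of the source length register.

```hlasm
LONGMOVE CSECT
         STM   14,12,12(13)       Save caller registers
         LR    12,15              R15 = entry address, use as base
         USING LONGMOVE,12
         LA    2,TARGET           R2 = destination address (even reg)
         LHI   3,TGTLEN           R3 = destination length
         LA    4,SOURCE           R4 = source address (even reg)
         LHI   5,SRCLEN           R5 = source length, high byte zero
         ICM   5,B'1000',BLANK    High byte of R5 = pad character
         MVCL  2,4                Move, then pad TARGET with blanks
         LHI   15,8               Assume overlap (LHI keeps the CC)
         BO    EXIT               CC 3: destructive overlap, no move
         SR    15,15              Otherwise return code 0
EXIT     L     14,12(,13)         Restore R14
         LM    0,12,20(13)        Restore R0-R12, keep R15
         BR    14
BLANK    DC    C' '               Pad byte
SOURCE   DC    600C'A'            Hypothetical 600-byte source
SRCLEN   EQU   *-SOURCE
TARGET   DS    CL1024             Hypothetical 1024-byte destination
TGTLEN   EQU   *-TARGET
         END   LONGMOVE
```

MVCL updates all four registers as it runs, so reload them before reusing the addresses or lengths. Whether MVCL or a loop of 256-byte MVCs is faster for a given size depends on the machine, so it is worth measuring both.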

Instruction Ordering and Pipeline

IBM Z processors are pipelined and out-of-order. To maximise throughput:

- Avoid back-to-back instructions where the second depends on the result of the first

- Interleave independent operations

- Avoid load-use hazards (loading a register and immediately using it)

* Potential hazard: load then immediate use

L 3,DATA1

A 3,DATA2 Might stall waiting for L to complete

* Better: interleave independent loads

L 3,DATA1

L 4,DATA2 Load reg 4 while reg 3 load completes

AR 3,4 Now use both - less stall risk

Frequently Asked Questions

Q: How much faster is HLASM than COBOL for typical business logic?

For pure computation (loops, arithmetic, string manipulation), optimised HLASM is typically 2-5x faster than compiled COBOL. However, modern COBOL compilers (IBM COBOL for z/OS with OPT(2)) generate good machine code, and the difference is often smaller than expected. The biggest gains come from eliminating unnecessary memory accesses, tight loop optimisation, and tasks like bulk data movement where MVCL and EX provide hardware-accelerated performance that compilers can't always exploit.

Q: Should I optimise for instruction count or instruction mix?

Instruction mix matters more than count. A single MVCL that moves 4KB can beat 16 MVC instructions. A load that hits the L1 cache costs only a few cycles; a load from main memory might be 100+ cycles. Focus first on memory access patterns (locality, sequential access), then on register reuse (avoid unnecessary loads/stores), then on instruction selection. Measure before optimising: RMF (Resource Measurement Facility) reports CPU use at the system and address-space level, and an application profiler such as IBM Application Performance Analyzer for z/OS shows where within the program the time is actually spent.

Q: What is the EX instruction and when should I avoid it?

EX executes another instruction after ORing the low byte of a register into the target's second byte, which holds the length or mask field. It's well suited to variable-length SS instructions (MVC, CLC, TR). Avoid EX when: the target is a branch (executed branches perform poorly on some microarchitectures), when the length is always fixed (use MVC directly), or when the target is in a different cache line (EX requires fetching the target instruction). Newer z/Architecture machines also provide EXRL (Execute Relative Long), which locates the target by a relative offset instead of base-displacement, so addressing the template needs no base register.
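For reference, here is a minimal sketch of a variable-length compare using EXRL. VARCLC, FIELD1, FIELD2, and CMPLEN are hypothetical names, the length comes from an assembly-time constant, and the sketch assumes a machine and assembler level that support EXRL plus standard z/OS linkage. EXRL finds the template by relative offset, but the CLC operands inside the template are still base-displacement addresses, so a base register is still established.

```hlasm
VARCLC   CSECT
         STM   14,12,12(13)       Save caller registers
         LR    12,15              Base still needed for the operands
         USING VARCLC,12
         LHI   3,CMPLEN-1         R3 = length code (byte count - 1)
         EXRL  3,CLCINS           Template found by relative offset
         LHI   15,4               Assume mismatch (LHI keeps the CC)
         BNE   EXIT               CC from the executed CLC
         SR    15,15              Fields equal: return code 0
EXIT     L     14,12(,13)         Restore R14
         LM    0,12,20(13)        Restore R0-R12, keep R15
         BR    14
CLCINS   CLC   FIELD1(0),FIELD2   Template, length field 0
FIELD1   DC    C'ABCDEF'          Hypothetical data
FIELD2   DC    C'ABCDEF'
CMPLEN   EQU   L'FIELD1           Bytes to compare
         END   VARCLC
```

The routine returns 0 in R15 when the fields match and 4 otherwise. The return code is set with LHI before the branch because LHI leaves the condition code from the executed CLC intact.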

Part of HLASM Mastery Course - Module 21 of 22.