Java Platform Threads and Synchronization: Mastering the JMM

"Writing multi-threaded code is easy; writing correct multi-threaded code is one of the hardest tasks in all of engineering. It requires a fundamental shift from thinking in sequences to thinking in visibility and ordering."

In the modern enterprise, high-performance systems are parallel systems. Whether you are building a high-frequency trading engine or a mass-market web service, your ability to manage Concurrency determines your system's throughput and stability.

However, concurrency is not just about starting threads. It is about understanding the Java Memory Model (JMM), the formal set of rules that governs how threads "see" each other's writes. Without this knowledge, your application will suffer from "Ghost Bugs" (race conditions and visibility failures) that often appear only under heavy load and are very hard to reproduce in a standard debugger. This masterclass is your architectural foundation for high-performance thread safety.

1. The Hardware Mirror: CPU Caches and the MESI Protocol

To understand why synchronization is necessary, we must look at the physical metal. Modern CPUs do not read and write directly to RAM; it's simply too slow (100 ns latency vs 0.5 ns CPU cycles). Instead, each core has its own private L1 and L2 Caches to bridge this performance gap.

The MESI Protocol: Hardware Coherency

Memory consistency is managed at the hardware level by the MESI (Modified, Exclusive, Shared, Invalid) protocol.

- Modified: The cache line is present only in the current cache and is "dirty" (different from RAM).

- Exclusive: The cache line is present only in the current cache but matches RAM.

- Shared: The line is present in multiple caches and matches RAM.

- Invalid: The line is effectively empty, and any read will trigger a cache miss.

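The Visibility Problem: When Thread A updates a variable, the new value may still sit in Core 1's store buffer, or exist only as a "Modified" line in Core 1's cache. The coherency protocol ensures that a later read from Core 2 obtains the up-to-date line, but on its own it does not dictate when a buffered write becomes visible or in what order writes appear to other cores. In Java, the JIT compiler emits Memory Barriers (on x86, typically a lock-prefixed instruction) for volatile accesses and lock operations; these constrain that ordering and ensure cross-core visibility.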

2. The Java Memory Model (JMM): The "Happens-Before" Rule

The JMM is a formal specification that abstracts away the hardware complexity. It tells us: "If Action A Happens-Before Action B, then the results of A are guaranteed to be visible to B."

The Core Happens-Before Rules

To architect a safe system, you must respect these primary rules (the JMM defines further ones, for example for Thread.start() and Thread.join()):

- Program Order Rule: Within a single thread, each action Happens-Before every action that comes later in program order.

- Monitor Lock Rule: An unlock on a monitor Happens-Before every subsequent lock on that same monitor.

- Volatile Variable Rule: A write to a volatile field Happens-Before every subsequent read of that field.

- Transitivity: If A Happens-Before B, and B Happens-Before C, then A Happens-Before C.

Without a "Happens-Before" relationship, the JVM and the CPU are free to reorder your instructions for optimization (e.g., hoisting a load out of a loop). This is why a write to a plain flag such as boolean stop = false might never be seen as true by another thread: the JIT compiler may read the field once, keep the value in a register, and never reload it inside the loop.
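The stop-flag scenario can be sketched as follows. This is a minimal illustration, not a prescribed pattern: the class name StopFlagDemo and its methods are hypothetical. Declaring the flag volatile creates the Happens-Before edge between the write in requestStop() and the reads in the loop, so the worker is guaranteed to observe the change.

```java
class StopFlagDemo {
    // volatile: the write in requestStop() happens-before every later read in runLoop().
    private volatile boolean stop = false;
    private long iterations = 0; // touched only by the worker thread

    void requestStop() {
        stop = true;
    }

    void runLoop() {
        while (!stop) {   // volatile read: cannot be hoisted out of the loop
            iterations++;
        }
    }

    /** Starts a worker, asks it to stop, and reports whether it terminated within the timeout. */
    static boolean workerStopsWithin(long timeoutMillis) {
        StopFlagDemo demo = new StopFlagDemo();
        Thread worker = new Thread(demo::runLoop, "worker");
        worker.setDaemon(true); // do not keep the JVM alive if something goes wrong
        worker.start();
        demo.requestStop();
        try {
            worker.join(timeoutMillis);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return false;
        }
        return !worker.isAlive();
    }

    public static void main(String[] args) {
        System.out.println("worker stopped: " + workerStopsWithin(2_000));
    }
}
```

If the volatile modifier is removed, the JMM no longer guarantees that the loop terminates; whether it actually hangs depends on the JIT compiler and the hardware.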

3. Bytecode Forensics: The volatile Barrier

volatile is the minimal tool for thread safety. It provides two guarantees:

- Visibility: A write to a volatile field is visible to every subsequent read of that field, from any thread.

- Instruction Ordering: It prevents the compiler and the CPU from reordering memory accesses across the volatile access (using Memory Barriers like LoadLoad and StoreStore).

The Counter Ambiguity: Volatile is NOT Atomic

    public class Counter {
        private volatile int count = 0;

        public void increment() {
            count++; // CRITICAL ERROR: Read-Modify-Write is NOT atomic!
        }
    }

Even if count is volatile, two threads can read the same value (e.g., 5), increment it locally to 6, and write 6 back, losing one increment. To fix this, you must use synchronized or AtomicInteger.
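One way to apply the AtomicInteger fix is sketched below. The class AtomicCounter and the helper countWithThreads are hypothetical names used for illustration; incrementAndGet() performs the read-modify-write as a single atomic operation, so no increments are lost.

```java
import java.util.concurrent.atomic.AtomicInteger;

class AtomicCounter {
    private final AtomicInteger count = new AtomicInteger();

    void increment() {
        count.incrementAndGet(); // atomic read-modify-write (CAS-based)
    }

    int get() {
        return count.get();
    }

    /** Runs the given number of threads, each incrementing the shared counter, and returns the total. */
    static int countWithThreads(int threads, int incrementsPerThread) {
        AtomicCounter counter = new AtomicCounter();
        Thread[] workers = new Thread[threads];
        for (int i = 0; i < threads; i++) {
            workers[i] = new Thread(() -> {
                for (int j = 0; j < incrementsPerThread; j++) {
                    counter.increment();
                }
            });
            workers[i].start();
        }
        for (Thread worker : workers) {
            try {
                worker.join(); // join() makes the worker's increments visible to this thread
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException(e);
            }
        }
        return counter.get();
    }

    public static void main(String[] args) {
        System.out.println(countWithThreads(4, 10_000));
    }
}
```

With four threads performing 10,000 increments each, the total is always 40,000, whereas the volatile int version can come up short.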

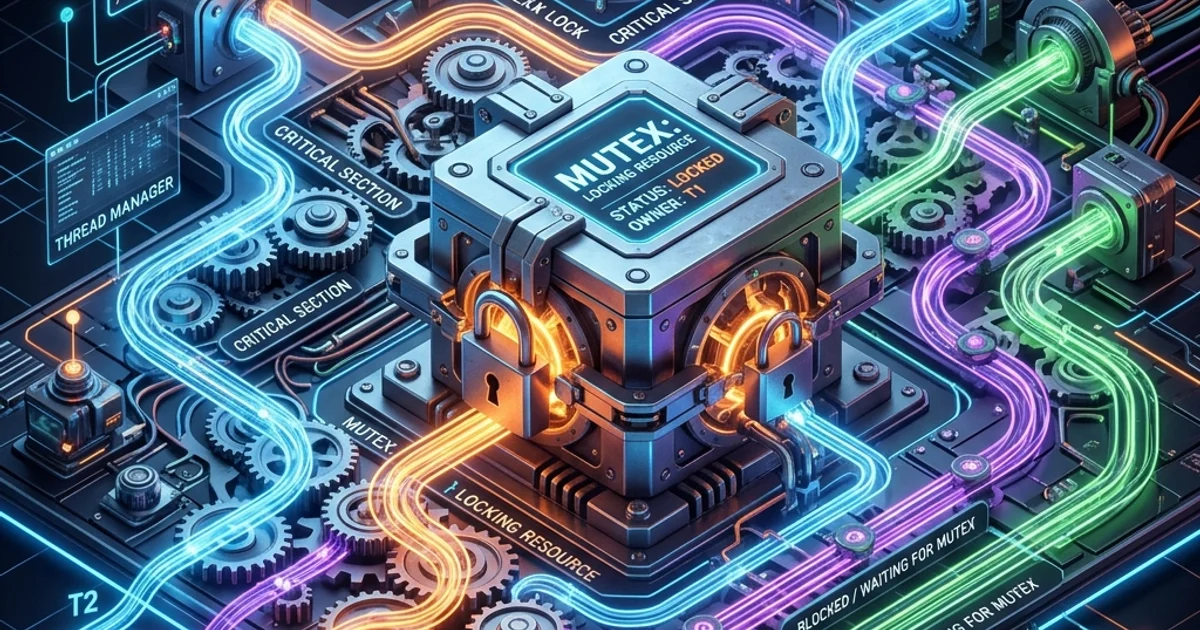

4. synchronized: The Internals of the Monitor

Under the hood, synchronized relies on an optimization chain. HotSpot uses the Mark Word in the object header to track the lock state.

The Locking Chain

- Biased Locking (legacy): The JVM recorded the owning thread's ID in the object's header, assuming only one thread would ever need this lock. Cost: Almost Zero. Biased locking was deprecated and disabled by default in JDK 15 (JEP 374), so current JDKs start at the next step.

- Lightweight Locking (CAS): As long as the lock is uncontended, a thread acquires it with a Compare-And-Swap on the Mark Word. Cost: Low (No OS Context Switch).

- Heavyweight Locking (Monitors): If contention is high, the JVM "Inflates" the lock into a full monitor. A thread that cannot acquire it may spin briefly and is then parked by the OS; it shows up in the BLOCKED state, and the resulting context switches cost thousands of CPU cycles.

5. Advanced Locks: ReentrantLock vs. StampedLock

ReentrantLock: Fairness and Timeouts

Unlike synchronized, ReentrantLock allows you to attempt to acquire a lock without waiting forever.

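    // tryLock(timeout, unit) throws InterruptedException if the thread is interrupted while waiting
    if (lock.tryLock(5, TimeUnit.SECONDS)) {
        try {
            // Critical section
        } finally {
            lock.unlock();
        }
    }

This is essential for building resilient systems: a thread that cannot get the lock in time can back off and retry instead of waiting forever, which gives you a way out of potential Deadlocks.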
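A fuller sketch of the timed-acquire pattern follows, including the InterruptedException handling that the fragment above leaves out. TimedLockDemo, tryUpdate, and timesOutWhileHeld are hypothetical names; only ReentrantLock.tryLock(long, TimeUnit), lock(), and unlock() come from the JDK.

```java
import java.util.concurrent.TimeUnit;
import java.util.concurrent.locks.ReentrantLock;

class TimedLockDemo {
    private final ReentrantLock lock = new ReentrantLock();
    private int value;

    /** Returns true if the update ran, false if the lock was not obtained within the timeout. */
    boolean tryUpdate(int newValue, long timeout, TimeUnit unit) {
        boolean acquired = false;
        try {
            acquired = lock.tryLock(timeout, unit);
            if (!acquired) {
                return false; // back off: the caller may retry, log, or fail fast
            }
            value = newValue;
            return true;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // preserve the interrupt for the caller
            return false;
        } finally {
            if (acquired) {
                lock.unlock(); // unlock only if this call actually acquired the lock
            }
        }
    }

    int value() {
        lock.lock();
        try {
            return value;
        } finally {
            lock.unlock();
        }
    }

    /** Holds the lock on the calling thread and checks that another thread gives up after 50 ms. */
    static boolean timesOutWhileHeld() {
        TimedLockDemo demo = new TimedLockDemo();
        boolean[] updated = new boolean[1];
        demo.lock.lock();
        try {
            Thread contender = new Thread(
                    () -> updated[0] = demo.tryUpdate(1, 50, TimeUnit.MILLISECONDS));
            contender.start();
            contender.join(); // join() makes updated[0] visible here
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return false;
        } finally {
            demo.lock.unlock();
        }
        return !updated[0];
    }
}
```

The contender runs on a separate thread on purpose: ReentrantLock is reentrant, so the thread that already holds the lock would acquire it again immediately.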

StampedLock: The Optimistic Champion

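For read-heavy workloads, StampedLock can significantly outperform ReentrantReadWriteLock, although the actual gain depends on contention and hardware. It allows an Optimistic Read where you read data without acquiring a lock and then check whether a writer invalidated your "Stamp" during the read. Note that StampedLock is not reentrant.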

6. Advanced Resilience: The ABA Problem and Atomicity

When building lock-free data structures, you often use CAS (Compare-and-Swap). However, CAS is vulnerable to the ABA Problem:

- Thread 1 reads value 'A'.

- Thread 2 changes 'A' to 'B' and then back to 'A'.

- Thread 1 performs CAS, sees 'A', and assumes nothing changed.

In a concurrent linked list, this can silently corrupt the structure: a node that was "removed" might have been re-added in a different position, so the CAS succeeds and installs a stale link.

The Fix: Use AtomicStampedReference<V>. It attaches a "Version Number" to the reference. Even if the value returns to 'A', the version will be different, allowing the CAS to fail safely.
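The effect of the stamp can be sketched with a deterministic, single-threaded simulation of the three steps above. The class AbaDemo and its method are hypothetical, and the two "threads" are simulated sequentially so the outcome does not depend on scheduling.

```java
import java.util.concurrent.atomic.AtomicStampedReference;

class AbaDemo {
    /** Replays the A -> B -> A sequence and returns whether the stale CAS succeeded. */
    static boolean staleCasSucceeds() {
        String a = "A";
        String b = "B";
        AtomicStampedReference<String> ref = new AtomicStampedReference<>(a, 0);

        // "Thread 1" takes a snapshot of the reference and its stamp.
        int[] stampHolder = new int[1];
        String seen = ref.get(stampHolder);
        int seenStamp = stampHolder[0];

        // "Thread 2" changes A to B and back to A, bumping the stamp each time.
        ref.compareAndSet(a, b, 0, 1);
        ref.compareAndSet(b, a, 1, 2);

        // "Thread 1" resumes: the reference matches ("A"), but the stamp is now 2, not 0.
        return ref.compareAndSet(seen, "C", seenStamp, seenStamp + 1);
    }

    public static void main(String[] args) {
        System.out.println("stale CAS succeeded: " + staleCasSucceeds());
    }
}
```

A plain AtomicReference would accept the final CAS because the reference is "A" again; the stamped version rejects it because the stamp no longer matches.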

7. JVM Memory Forensics: The Thread Stack Trap

Each platform thread in Java is a direct mapping to an Operating System thread.

- The Stack Cost: By default, each thread reserves about 1 MB of memory for its stack on typical 64-bit JVMs (tunable with -Xss).

- The OOM Risk: If you have 4,000 concurrent connections and start a new thread for each, you have reserved roughly 4 GB just for the stacks, often leading to OutOfMemoryError: unable to create new native thread.

- ThreadLocal Performance: ThreadLocal variables are stored in a map within the Thread object. Overusing them is a common cause of memory leaks in application servers (like Tomcat), because pooled threads are reused rather than destroyed: a value stays reachable for as long as its thread lives unless you call remove().
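A common defensive pattern for the ThreadLocal problem is to clear the value in a finally block before the thread goes back to the pool. The sketch below uses hypothetical names (RequestContext, handle, leftoverAfterRequest) and a single-thread pool so that both tasks are guaranteed to run on the same thread.

```java
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

class RequestContext {
    private static final ThreadLocal<String> CURRENT_USER = new ThreadLocal<>();

    /** Simulates handling one request on whatever pooled thread runs it. */
    static String handle(String user) {
        CURRENT_USER.set(user);
        try {
            return "handled:" + CURRENT_USER.get();
        } finally {
            CURRENT_USER.remove(); // without this, the value outlives the request
        }
    }

    /** Runs a request, then inspects the same pooled thread for a leftover value. */
    static String leftoverAfterRequest() {
        ExecutorService pool = Executors.newFixedThreadPool(1);
        try {
            Callable<String> request = () -> handle("alice");
            Callable<String> probe = () -> CURRENT_USER.get();
            pool.submit(request).get();
            return pool.submit(probe).get(); // null when the value was removed
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        } catch (ExecutionException e) {
            throw new IllegalStateException(e);
        } finally {
            pool.shutdown();
        }
    }
}
```

If the remove() call is deleted, the probe task sees "alice" from the previous request, which is exactly the kind of stale, leaked state described above.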

8. Case Study: High-Performance Ledger Sync

In a global ledger handling 50,000 transactions per second, we must ensure absolute accuracy with zero race conditions.

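    import java.util.concurrent.locks.StampedLock;

    public class ModernLedger {
        private final StampedLock lock = new StampedLock();
        // double keeps the example short; real ledgers usually store exact amounts
        private double balance;

        public void transaction(double amount) {
            long stamp = lock.writeLock(); // exclusive: invalidates optimistic stamps
            try {
                balance += amount;
            } finally {
                lock.unlockWrite(stamp);
            }
        }

        public double getBalance() {
            long stamp = lock.tryOptimisticRead(); // no lock is taken here
            double current = balance;
            if (!lock.validate(stamp)) {
                // A write overlapped the read: fall back to a real read lock
                stamp = lock.readLock();
                try {
                    current = balance;
                } finally {
                    lock.unlockRead(stamp);
                }
            }
            return current;
        }
    }

By utilizing StampedLock, our system can serve massive read traffic for the "Balance Dashboard" without blocking the critical "Transaction Processor" in the common case: optimistic reads take no lock at all, and a reader falls back to a real read lock only when a write overlaps its read.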

9. Parallel Design Patterns

The Producer-Consumer (BlockingQueue)

Instead of threads talking directly to each other, use a bounded BlockingQueue. This provides Backpressure: if the consumer is slow, the queue fills up and put() blocks the producer, which slows it down automatically and prevents your JVM from running out of memory under a flood of data.
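A minimal producer-consumer sketch under these assumptions: the queue is an ArrayBlockingQueue with a small capacity, and a sentinel value (a "poison pill", here -1) tells the consumer to stop. BoundedPipeline and produceAndConsume are hypothetical names.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.BlockingQueue;

class BoundedPipeline {
    private static final int POISON_PILL = -1; // sentinel that ends the consumer loop

    /** Sends 1..items through a bounded queue and returns the sum seen by the consumer. */
    static long produceAndConsume(int items, int capacity) {
        BlockingQueue<Integer> queue = new ArrayBlockingQueue<>(capacity);
        long[] sum = new long[1];

        Thread consumer = new Thread(() -> {
            try {
                while (true) {
                    int item = queue.take(); // blocks while the queue is empty
                    if (item == POISON_PILL) {
                        break;
                    }
                    sum[0] += item;
                }
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        consumer.start();

        try {
            for (int i = 1; i <= items; i++) {
                queue.put(i); // blocks while the queue is full: this is the backpressure
            }
            queue.put(POISON_PILL);
            consumer.join(); // join() makes sum[0] visible to this thread
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return sum[0];
    }

    public static void main(String[] args) {
        System.out.println(produceAndConsume(100, 4));
    }
}
```

With a capacity of 4, the producer can never be more than four items ahead of the consumer, no matter how many items are sent in total.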

The Fork-Join Framework

For CPU-intensive tasks (like complex mathematical simulations), use RecursiveTask. The Fork-Join pool relies on Work-Stealing: if one worker thread runs out of tasks, it "steals" work from the queue of a busy worker, which helps keep all of your cores busy.
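As an illustration of the Fork-Join style, the following sketch sums an array by splitting it recursively. SumTask, parallelSum, and the threshold of 1,000 elements are assumptions chosen for the example, not tuned values.

```java
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.RecursiveTask;

class SumTask extends RecursiveTask<Long> {
    private static final int THRESHOLD = 1_000; // below this size, sum sequentially

    private final long[] data;
    private final int from; // inclusive
    private final int to;   // exclusive

    SumTask(long[] data, int from, int to) {
        this.data = data;
        this.from = from;
        this.to = to;
    }

    @Override
    protected Long compute() {
        if (to - from <= THRESHOLD) {
            long sum = 0;
            for (int i = from; i < to; i++) {
                sum += data[i];
            }
            return sum;
        }
        int mid = (from + to) >>> 1;
        SumTask left = new SumTask(data, from, mid);
        SumTask right = new SumTask(data, mid, to);
        left.fork();                      // queue the left half; an idle worker may steal it
        long rightSum = right.compute();  // work on the right half in this thread
        return left.join() + rightSum;    // wait for the left half and combine
    }

    static long parallelSum(long[] data) {
        return ForkJoinPool.commonPool().invoke(new SumTask(data, 0, data.length));
    }
}
```

Forking one half and computing the other in the current thread keeps the calling worker busy instead of having it wait on two subtasks.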

Summary: From Programmer to Parallel Architect

- Prefer Final: Use final fields to leverage "Safe Publication" in the JMM.

- Limit Lock Scope: Never hold a lock during an I/O operation (like a database call). You will starve the thread pool.

- Design for Virtual Threads: Prepare your codebase for Module 14 by identifying where you are currently blocking OS threads.

You have moved from a developer who "Starts threads" to an architect who "Engineers Parallel Systems."

Conclusion: The Persistence of the JMM

As we transition into the era of Virtual Threads (which we will explore in Module 14), many developers assume that high-level concurrency tools will make the Java Memory Model obsolete. This is a dangerous misconception. While Virtual Threads make blocking I/O cheaper, the fundamental rules of Visibility and Ordering remain unchanged. Whether you are running on an OS thread or a lightweight task, the CPU caches do not care about your abstraction layer.

By mastering the JMM, the MESI protocol, and the intricacies of StampedLock, you have built a mental model that is independent of any specific framework. You are now equipped to build systems that are not only "Fast," but "Correct by Design." You have laid the cornerstone for the most advanced concurrent architectures in the Java ecosystem.

Frequently Asked Questions

Q: What is the difference between synchronized and ReentrantLock?

synchronized is simpler: it automatically releases the lock when the block exits, even on exception. ReentrantLock gives you explicit control: you can try to acquire a lock without blocking (tryLock()), acquire with a timeout, and use multiple Condition objects for fine-grained signalling. Use synchronized for simple critical sections; use ReentrantLock when you need the extra flexibility.

Q: What is a race condition and how do I prevent it?

A race condition occurs when two threads access shared mutable data concurrently and the final result depends on the timing of their execution. Prevent it by either synchronizing access (with synchronized, locks, or java.util.concurrent classes) or eliminating shared mutable state entirely (using immutable objects or thread-local variables). Where possible, prefer designs built on message passing and structured concurrency over shared mutable state; virtual threads make such designs cheaper, but they do not remove the need for synchronization when state is shared.

Q: When should I use volatile instead of synchronized?

Use volatile when you have a single shared variable that is independently read and written: it guarantees visibility (a write is visible to every subsequent read from any thread) without mutual exclusion. Use synchronized when you need an atomic compound operation (check-then-act, read-modify-write). volatile alone cannot make counter++ thread-safe because that is three operations (read, add, write); for that, use AtomicInteger.

Part of the Java Enterprise Mastery - engineering the thread.