Spring Kafka: Event-Driven Microservices at Scale

"In a massive system, you don't call; you leave a message. Kafka is the post-office for a distributed world, ensuring that every letter is delivered, recorded, and never lost."

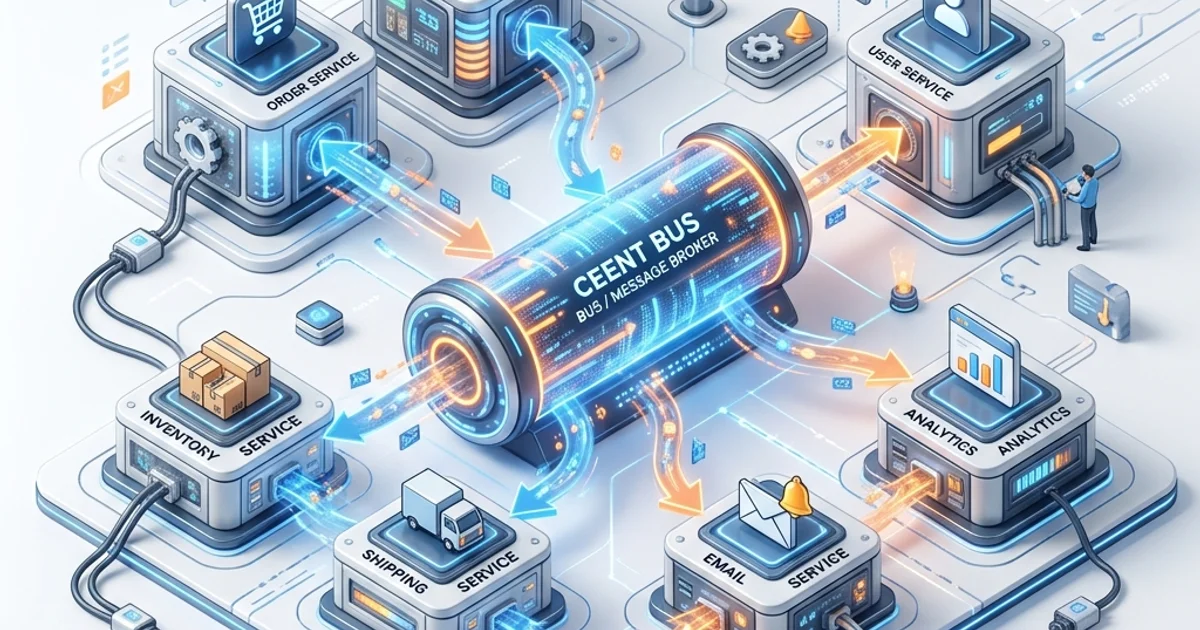

In a traditional microservices architecture, services talk to each other via synchronous REST calls. This creates a "Distributed Monolith": if one service goes down or becomes slow, the entire system grinds to a halt. Apache Kafka solves this by introducing Event-Driven Architecture (EDA). Instead of waiting for a response, a service simply publishes an event (e.g., "Order Placed") to an infinite, append-only log. Interested services "Consume" this event whenever they have the resources.

This 1,500+ word deep-dive explores the "Hardware Mirror" of Kafka, the mechanics of Exactly-Once Semantics (EOS), and resilient patterns like Dead Letter Topics (DLT) that allow systems to scale to billions of daily events.

1. The "Hardware Mirror": Why Kafka is Unbeatably Fast

To understand why Kafka can process terabytes of data while other message brokers (like RabbitMQ or ActiveMQ) struggle, we must look at how it interacts with the physical hardware.

Sequential I/O vs. Random Access

Kafka is essentially a Distributed Commit Log. It does not store data in complex B-Tree structures or hash tables. Instead, every event is appended to the end of a file.

- The Hardware Reality: Modern NVMe or SSD drives can perform sequential writes at thousands of MB/s, but random access (jumping between sectors) is significantly slower. By being "Append-Only," Kafka treats the disk like a high-speed tape drive.

The Page Cache & Zero-Copy

Kafka doesn't try to manage its own memory cache; it relies on the Operating System's Page Cache.

- Writing: When a message is sent to Kafka, it is written to the OS Page Cache and acknowledged without waiting for a disk flush. The OS eventually flushes this to the physical disk.

- Reading: When a consumer reads a message, Kafka uses the sendfile system call (Zero-Copy). This bypasses the application space entirely. The data is copied directly from the Page Cache/Disk to the Network Buffer.

- The Result: Kafka can serve data to thousands of consumers simultaneously without ever involving the JVM's Heap, effectively eliminating GC overhead and CPU cycles for data copies.
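To make the append-only write path and the zero-copy read path concrete, here is a minimal sketch using only the JDK. On the JVM, the sendfile fast path is reached through FileChannel.transferTo when the target is a socket channel. The class name LogSegmentDemo and the roundTrip helper are hypothetical, and the demo sends bytes to an in-memory channel, so it shows the API shape rather than the OS-level optimization itself.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.channels.Channels;
import java.nio.channels.FileChannel;
import java.nio.channels.WritableByteChannel;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

// Hypothetical demo class; not part of Kafka or Spring Kafka.
class LogSegmentDemo {

    // Appends one record to the end of the file: a purely sequential write.
    static void append(Path segment, String record) throws IOException {
        try (FileChannel channel = FileChannel.open(segment,
                StandardOpenOption.CREATE, StandardOpenOption.WRITE, StandardOpenOption.APPEND)) {
            ByteBuffer buffer = ByteBuffer.wrap((record + "\n").getBytes(StandardCharsets.UTF_8));
            while (buffer.hasRemaining()) {
                channel.write(buffer);
            }
        }
    }

    // Hands the file's bytes to the target channel without reading them
    // into an application-level byte[] first. transferTo may move fewer
    // bytes than requested, so we loop until the whole file is sent.
    static long transferAll(Path segment, WritableByteChannel target) throws IOException {
        try (FileChannel channel = FileChannel.open(segment, StandardOpenOption.READ)) {
            long position = 0;
            long size = channel.size();
            while (position < size) {
                position += channel.transferTo(position, size - position, target);
            }
            return position;
        }
    }

    // Writes the records to a temp file, transfers them out, returns what arrived.
    static String roundTrip(String... records) {
        try {
            Path segment = Files.createTempFile("segment-", ".log");
            try {
                for (String record : records) {
                    append(segment, record);
                }
                ByteArrayOutputStream sink = new ByteArrayOutputStream();
                transferAll(segment, Channels.newChannel(sink));
                return new String(sink.toByteArray(), StandardCharsets.UTF_8);
            } finally {
                Files.deleteIfExists(segment);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.print(roundTrip("OrderPlaced", "OrderShipped"));
    }
}
```

In a real broker the target would be the consumer's socket, which is what lets the kernel move data from the Page Cache to the NIC without a detour through the JVM heap.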

2. Producer Mechanics: Performance Tuning for 2026

Building a producer in Spring Boot is easy, but building a high-performance producer requires understanding the tradeoff between Latency and Throughput.

Batching and Linger

You should never send messages one by one. This is "Network Chatty" and kills throughput.

- batch.size: The maximum amount of data (in bytes) to collect before sending.

- linger.ms: The time to wait for more messages to arrive before sending the batch.

By setting linger.ms=5, you allow the producer to bundle 100 small messages into one network request, drastically reducing overhead.

Compression: Trading CPU for Network

By enabling compression (e.g., lz4 or zstd), you reduce the I/O load on the broker and the disk.

- Efficiency: In 2026 systems, CPU cycles are often cheaper than Network Bandwidth. Compressing at the producer and decompressing at the consumer is a "Hardware-Positive" decision.

The "Acks" Configuration

How sure do you need to be that the broker received the message?

- acks=0: Fire and forget. Zero reliability, maximum speed.

- acks=1: The Leader broker must write to its local log.

- acks=all: All in-sync replicas must acknowledge the write. This is the Gold Standard for financial data.
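The batching, compression, and acknowledgement settings from this section can be collected into one set of producer properties. The sketch below uses only java.util.Properties with the standard Kafka producer config keys; the class name ProducerTuning and the specific values (32 KB batches, 5 ms linger, zstd) are illustrative assumptions, not recommendations for every workload.

```java
import java.util.Properties;

// Hypothetical helper; the keys are standard Kafka producer configs,
// the values are example choices to be tuned per workload.
class ProducerTuning {

    static Properties highThroughputProps(String bootstrapServers) {
        Properties props = new Properties();
        props.put("bootstrap.servers", bootstrapServers);
        // Collect up to 32 KB per partition before sending a batch.
        props.put("batch.size", "32768");
        // Wait up to 5 ms for more records so batches can fill up.
        props.put("linger.ms", "5");
        // Spend producer CPU to save network and broker disk I/O.
        props.put("compression.type", "zstd");
        // Require acknowledgement from all in-sync replicas.
        props.put("acks", "all");
        return props;
    }

    public static void main(String[] args) {
        highThroughputProps("broker-1:9092,broker-2:9092")
                .forEach((key, value) -> System.out.println(key + "=" + value));
    }
}
```

The same Properties object could be passed to a Kafka producer constructor; in a Spring Boot application the equivalent keys normally live in the application configuration instead.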

3. Partitioning: The Secret to Infinite Scaling

A Topic in Kafka is divided into Partitions.

- The Parallelism Limit: One partition can only be read by one consumer thread in a group. If you have 10 partitions, you can have a maximum of 10 consumers reading simultaneously. To scale further, you must increase the partition count (e.g., to 100 or 1000).

- Key-Based Routing: By using a message key (like orderId), you ensure that all messages for that order are routed to the same partition. Since Kafka guarantees order within a partition, your logic can safely assume it will always process "OrderCreated" before "OrderCancelled."
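The idea behind key-based routing is simply "hash the key, take it modulo the partition count." The toy router below illustrates that idea with the JDK only. It is an assumption-laden stand-in: Kafka's built-in partitioner uses a murmur2 hash of the serialized key, whereas this sketch uses Arrays.hashCode, so the partition numbers will not match a real cluster. KeyRouter is a hypothetical name.

```java
import java.nio.charset.StandardCharsets;
import java.util.Arrays;

// Toy model of key-based routing; NOT Kafka's actual hash function.
class KeyRouter {

    static int partitionFor(String key, int numPartitions) {
        int hash = Arrays.hashCode(key.getBytes(StandardCharsets.UTF_8));
        // Mask the sign bit so the result is never negative.
        return (hash & 0x7fffffff) % numPartitions;
    }

    public static void main(String[] args) {
        // Every event for the same order lands on the same partition.
        System.out.println(partitionFor("order-42", 10));
        System.out.println(partitionFor("order-42", 10));
    }
}
```

One consequence worth noting: because the partition is derived from the partition count, increasing the count later changes where existing keys land, so per-key ordering only holds for events written under the same count.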

4. Consumer Mechanics: The Rebalance Protocol

Consuming messages in Kafka is a stateful operation. The consumer needs to know where it left off, which is managed via Offsets.

Offsets and Commit Strategies

When a consumer reads a message, it updates its "bookmark" (Offset) in the __consumer_offsets topic.

- Auto-Commit: The consumer commits every 5 seconds. This is dangerous; if the service crashes after processing but before committing, you get duplicate messages.

- Manual Commit: You only commit the offset after your business logic (e.g., saving to DB) has succeeded. This provides an At-Least-Once guarantee.
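Why "commit after processing" yields At-Least-Once (and therefore occasional duplicates) is easiest to see in a small simulation. The sketch below models a partition as a list and the committed offset as a plain long; it does not use the Kafka client, and the names AtLeastOnceDemo and crashAtOffset are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

// Toy model of manual offset commits; no Kafka client involved.
class AtLeastOnceDemo {

    // Processes records from committedOffset onward and returns the new
    // committed offset. A crash at crashAtOffset (use -1 for "no crash")
    // happens AFTER the business logic ran but BEFORE the commit.
    static long consume(List<String> log, long committedOffset,
                        List<String> sink, long crashAtOffset) {
        for (long offset = committedOffset; offset < log.size(); offset++) {
            sink.add(log.get((int) offset));   // business logic, e.g. a DB write
            if (offset == crashAtOffset) {
                return committedOffset;        // bookmark was not advanced
            }
            committedOffset = offset + 1;      // commit only after success
        }
        return committedOffset;
    }

    public static void main(String[] args) {
        List<String> log = List.of("a", "b", "c");
        List<String> sink = new ArrayList<>();
        long committed = consume(log, 0, sink, 1);   // crash while handling "b"
        committed = consume(log, committed, sink, -1); // restart from the bookmark
        System.out.println(sink + " committed=" + committed);
    }
}
```

After the restart, "b" is processed a second time, which is why At-Least-Once consumers are usually paired with idempotent business logic.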

The Consumer Group & Rebalance

Kafka uses a "Pull" model. Consumers join a Group.

- Self-Healing: If one consumer in a group fails, Kafka starts a Rebalance. It reassigns partitions to the remaining healthy members.

- Hardware Impact: Rebalances are expensive "Stop-the-World" events. In 2026, we prefer Static Membership (setting a group.instance.id) to avoid rebalancing during short network blips or pod restarts.
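A static-membership consumer configuration might look like the sketch below. The keys are standard Kafka consumer configs; the class name StaticMembership, the 60-second session timeout, and the idea of reusing a stable pod name as the instance id are assumptions for illustration.

```java
import java.util.Properties;

// Hypothetical helper; keys are standard Kafka consumer configs.
class StaticMembership {

    static Properties consumerProps(String groupId, String stableInstanceId) {
        Properties props = new Properties();
        props.put("group.id", groupId);
        // A stable id per instance (e.g. a StatefulSet pod name) lets the
        // group recognise a restarted member instead of treating it as new.
        props.put("group.instance.id", stableInstanceId);
        // The member's partitions are only reassigned if it stays away
        // longer than this timeout (example value, tune to restart time).
        props.put("session.timeout.ms", "60000");
        props.put("enable.auto.commit", "false");
        return props;
    }

    public static void main(String[] args) {
        System.out.println(consumerProps("inventory-service-group", "inventory-0"));
    }
}
```

The instance id must be unique within the group and stable across restarts; a random id per start would defeat the purpose.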

5. The Holy Grail: Exactly-Once Semantics (EOS)

One of the hardest problems in distributed systems is ensuring a message is processed exactly once. In Spring Kafka, we achieve this using Transactions.

The "Consume-Process-Produce" Loop

Using the @Transactional annotation on a Kafka listener allows you to:

- Read a message from Topic A.

- Perform a database update.

- Publish a result to Topic B.

- The Atomic Guarantee: If the database update fails, the offset for Topic A is not committed, and the message for Topic B is marked as "Aborted" on the broker. Consumers with isolation.level=read_committed will never see the aborted message.
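The visibility rule for read_committed consumers can be modelled in a few lines. In the toy below, the log holds data records plus commit/abort markers, and a reader only surfaces data whose transaction has a commit marker. This is a deliberately simplified sketch with hypothetical names (ReadCommittedDemo, Entry, Kind); a real broker additionally withholds everything past the first still-open transaction.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

// Toy model of transactional visibility; not the Kafka wire format.
class ReadCommittedDemo {

    enum Kind { DATA, COMMIT, ABORT }

    static final class Entry {
        final long txId;
        final Kind kind;
        final String value;   // null for COMMIT/ABORT markers

        Entry(long txId, Kind kind, String value) {
            this.txId = txId;
            this.kind = kind;
            this.value = value;
        }
    }

    // Returns only the data records that belong to committed transactions.
    static List<String> readCommitted(List<Entry> log) {
        Set<Long> committed = new HashSet<>();
        for (Entry entry : log) {
            if (entry.kind == Kind.COMMIT) {
                committed.add(entry.txId);
            }
        }
        List<String> visible = new ArrayList<>();
        for (Entry entry : log) {
            if (entry.kind == Kind.DATA && committed.contains(entry.txId)) {
                visible.add(entry.value);
            }
        }
        return visible;
    }

    public static void main(String[] args) {
        List<Entry> log = List.of(
                new Entry(1, Kind.DATA, "stock-reserved"),
                new Entry(1, Kind.COMMIT, null),
                new Entry(2, Kind.DATA, "stock-reserved-retry"),
                new Entry(2, Kind.ABORT, null));
        System.out.println(readCommitted(log));
    }
}
```

The aborted record still physically exists in the log; it is the consumer's isolation level that hides it.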

6. Schema Governance: Why JSON is a Production Risk

In a production environment, sending "Raw JSON" is a recipe for disaster. If a producer changes a field name, every consumer breaks.

Apache Avro & The Schema Registry

Avro is a binary serialization format that decouples the data from its structure.

- Space Efficiency: Avro messages are up to 60% smaller than JSON because they don't store field names in every message. This reduces Disk I/O and Network Saturation.

- Compatibility: The Schema Registry ensures that producers cannot publish "Breaking Changes." If you try to delete a required field, the registry will reject the schema.
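The space argument comes down to not repeating field names in every message. The sketch below compares a hand-built JSON string with a fixed-layout binary encoding of the same two fields. It is not Avro's actual encoding (Avro uses variable-length zig-zag integers and a schema resolved separately), and EncodingSizeDemo plus the orderId/quantity fields are hypothetical; the point is only where the bytes go.

```java
import java.io.ByteArrayOutputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;

// Toy size comparison; NOT the Avro binary format.
class EncodingSizeDemo {

    // Self-describing: field names travel with every message.
    static int jsonSize(long orderId, int quantity) {
        String json = "{\"orderId\":" + orderId + ",\"quantity\":" + quantity + "}";
        return json.getBytes(StandardCharsets.UTF_8).length;
    }

    // Schema-based: only the values are written, in an agreed order.
    static int binarySize(long orderId, int quantity) {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(bytes)) {
            out.writeLong(orderId);
            out.writeInt(quantity);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return bytes.size();
    }

    public static void main(String[] args) {
        System.out.println("json bytes:   " + jsonSize(42L, 3));
        System.out.println("binary bytes: " + binarySize(42L, 3));
    }
}
```

For this tiny record the JSON form takes 27 bytes and the fixed-layout form 12; actual savings with Avro depend on the schema and the data.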

7. Production Spring Implementation

Here is a production-grade configuration for a Spring Boot 3.x microservice using Kafka.

    spring:
      kafka:
        bootstrap-servers: broker-1:9092,broker-2:9092
        producer:
          key-serializer: org.apache.kafka.common.serialization.StringSerializer
          value-serializer: io.confluent.kafka.serializers.KafkaAvroSerializer
          acks: all
          retries: 5
          properties:
            enable.idempotence: true
            linger.ms: 5
            batch.size: 32768 # 32KB batches
        consumer:
          group-id: inventory-service-group
          auto-offset-reset: earliest
          enable-auto-commit: false
          isolation-level: read_committed
          properties:
            spring.json.trusted.packages: "*"

The Idempotent Producer

By setting enable.idempotence: true, the Kafka broker assigns the producer a Producer ID, and the producer attaches a Sequence Number to every batch it sends. If the producer sends the same batch twice due to a network glitch, the broker detects the duplicate sequence number and discards it. This is a broker-level deduplication strategy, applied per partition.
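The deduplication logic can be sketched as a toy broker that remembers the last sequence number it accepted from each producer. This models a single partition and uses only the JDK; DedupBroker and its methods are hypothetical names, and a real broker also rejects gaps in the sequence rather than accepting them.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Toy single-partition model of idempotent-producer deduplication.
class DedupBroker {

    private final Map<Long, Integer> lastSequenceByProducer = new HashMap<>();
    private final List<String> log = new ArrayList<>();

    // Returns true if the record was appended, false if it was a duplicate retry.
    boolean append(long producerId, int sequence, String value) {
        int last = lastSequenceByProducer.getOrDefault(producerId, -1);
        if (sequence <= last) {
            return false;   // already written: drop the retry silently
        }
        lastSequenceByProducer.put(producerId, sequence);
        log.add(value);
        return true;
    }

    int size() {
        return log.size();
    }

    public static void main(String[] args) {
        DedupBroker broker = new DedupBroker();
        System.out.println(broker.append(7L, 0, "OrderPlaced"));  // first attempt
        System.out.println(broker.append(7L, 0, "OrderPlaced"));  // retry after a lost ack
        System.out.println(broker.size());
    }
}
```

Note that this only protects against producer retries; a consumer that reprocesses a record after a crash still needs its own idempotency.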

8. Kafka Streams: The Lightweight Event Processor

For many developers, Kafka is just a message broker. But with Kafka Streams, it becomes a distributed computing platform.

Streams vs. Tables (The Dualism)

- KStream: A stream of every event (e.g., "User clicked button," "User clicked button again"). It is stateless by default.

- KTable: A materialized view of the latest state per key (e.g., "User's current profile").

- Hardware Impact: Kafka Streams keeps its state in a local State Store (RocksDB by default), which is optimized for SSD Sequential Writes. This allows you to perform joins and aggregations on millions of events per second with very low latency, without hitting an external database.
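The stream/table duality can be shown without the Kafka Streams library at all: replaying a stream of keyed events and keeping only the latest value per key produces the table. The sketch below is a plain-JDK illustration with a hypothetical class name (StreamTableDuality) and made-up profile events.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Toy illustration of stream/table duality; not the Kafka Streams API.
class StreamTableDuality {

    // Folds a stream of (key, value) events into "latest value per key".
    static Map<String, String> materialize(List<Map.Entry<String, String>> events) {
        Map<String, String> table = new LinkedHashMap<>();
        for (Map.Entry<String, String> event : events) {
            table.put(event.getKey(), event.getValue());   // newer event wins
        }
        return table;
    }

    public static void main(String[] args) {
        List<Map.Entry<String, String>> stream = List.of(
                Map.entry("alice", "alice@old.example"),
                Map.entry("bob", "bob@example.com"),
                Map.entry("alice", "alice@new.example"));
        // The stream has three events; the table has two rows.
        System.out.println(materialize(stream));
    }
}
```

In Kafka Streams the same fold is maintained incrementally in the state store, so the table is always up to date without replaying from the start.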

9. Observability: Monitoring the Nervous System

In a high-throughput system, "Everything is fine" until the Consumer Lag explodes.

Key Metrics to Track

- Consumer Lag: The delta between the latest message on the broker and the last message processed. If this grows, your hardware is saturated or your code is slow.

- Request Latency (JMX): How long does the broker take to acknowledge a write? High P99 latency usually indicates Disk I/O Wait or Garbage Collection (GC) pauses.

- Network Throughput: Are you saturating the 10Gbps NIC? If so, it's time to enable zstd compression or increase the batch.size.

Micrometer Integration

Spring Boot 3 exposes these metrics automatically via the /actuator/metrics/ endpoints. You should alert if lag exceeds a threshold (e.g., 100,000 messages) to prevent cascading failures.
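The lag figure itself is simple arithmetic: for each partition, subtract the group's committed offset from the log end offset, then sum. The sketch below shows that calculation and a threshold check with the JDK only; LagCalculator is a hypothetical name, and in practice the two offset maps would come from the broker or from your metrics system.

```java
import java.util.Map;

// Toy lag calculation over per-partition offsets.
class LagCalculator {

    // Sums (log end offset - committed offset) across partitions.
    static long totalLag(Map<Integer, Long> logEndOffsets,
                         Map<Integer, Long> committedOffsets) {
        long lag = 0;
        for (Map.Entry<Integer, Long> end : logEndOffsets.entrySet()) {
            // A partition with no commit yet is treated as fully unread.
            long committed = committedOffsets.getOrDefault(end.getKey(), 0L);
            lag += Math.max(0L, end.getValue() - committed);
        }
        return lag;
    }

    static boolean shouldAlert(long lag, long threshold) {
        return lag > threshold;
    }

    public static void main(String[] args) {
        long lag = totalLag(Map.of(0, 150L, 1, 90L), Map.of(0, 100L, 1, 90L));
        System.out.println("lag=" + lag + " alert=" + shouldAlert(lag, 100_000L));
    }
}
```

Alerting on the trend (lag growing over several minutes) tends to be less noisy than alerting on a single sample above the threshold.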

10. Testing Strategies: Local vs. Production Realism

Testing Kafka is notoriously difficult. You have two main choices:

EmbeddedKafka (Mock)

A lightweight, in-memory broker.

- Pros: Fast, no external dependencies.

- Cons: It doesn't behave like a real Linux-based broker. It ignores Zero-Copy and Page Cache mechanics. Use this only for simple unit tests.

Testcontainers (Real)

Spins up a real Docker container running the Confluent or Bitnami Kafka image.

- The "Hardware-Mirror" Choice: Testing against a real broker allows you to verify behavior like Rebalancing, Network Jitter, and Transactional Rollbacks. In 2026, there is no excuse for not using Testcontainers in your CI/CD pipeline.

Summary: Designing the Nervous System

- Hardware First: Optimize for Sequential I/O, Page Cache, and Zero-Copy.

- Batching is Mandatory: Use linger.ms and batch.size to maximize throughput.

- Governance over Speed: Always use a Schema Registry (Avro/Protobuf) to prevent "Poison Pill" events.

- Transactions for Integrity: Use EOS for financial or state-critical operations.

- Monitor the Lag: Rising lag is the first sign of architectural failure.

You have moved from simply "Sending messages" to "Architecting a Global, Resilient, and High-Performance Event Fabric."

Frequently Asked Questions

Q: What is the difference between Kafka topics, partitions, and consumer groups?

A topic is a logical stream of records (like a database table). Partitions are the physical shards of a topic - they allow parallel reading. A consumer group is a set of consumers that share the work of consuming a topic: each partition is assigned to exactly one consumer in the group at a time, enabling horizontal scaling. Add more partitions to increase parallelism; add more consumers to a group to process those partitions faster.

Q: How do I guarantee exactly-once semantics in Kafka with Spring?

Enable idempotent producers (spring.kafka.producer.properties.enable.idempotence=true) and use Kafka transactions (spring.kafka.producer.transaction-id-prefix). On the consumer side, process within a transaction and commit offsets atomically with your database write using the transactional outbox pattern or Kafka's own consumer-producer transactions. Note that exactly-once is expensive - evaluate whether at-least-once with idempotent consumers is sufficient for your use case.

Q: What is consumer lag and why does it matter?

Consumer lag is the difference between the latest offset written to a partition and the last offset processed by a consumer group. A growing lag means your consumers are falling behind production rate - perhaps due to slow processing, insufficient consumer instances, or a spike in traffic. Monitor lag with kafka-consumer-groups.sh --describe or tools like Burrow or Confluent Control Center. Alerting on lag is more actionable than alerting on raw message throughput.

Part of the Java Enterprise Mastery - engineering the event.