Monolith to Microservices: Migration Guide

Monolith to Microservices: Migration Guide

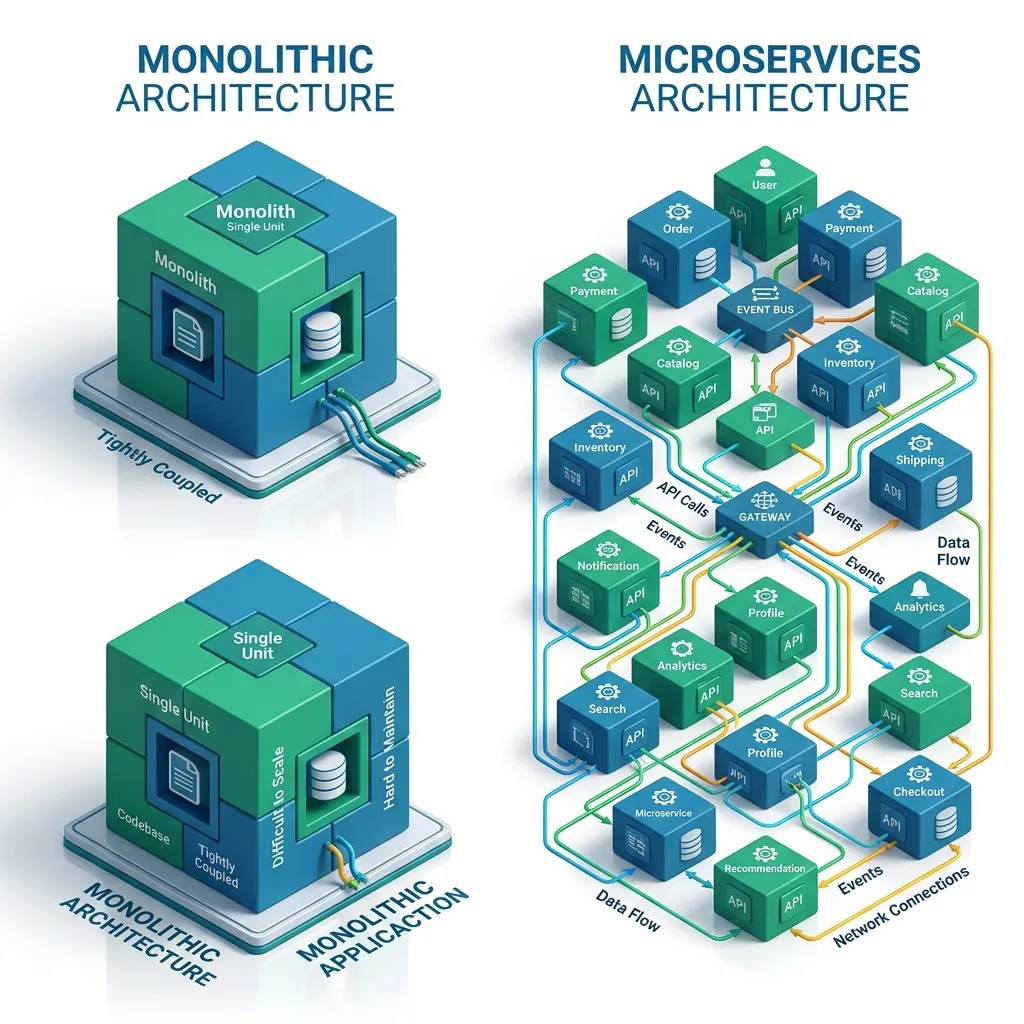

Migrating a monolith to microservices is one of the most challenging and frequently attempted engineering projects. Done wrong, it produces a distributed monolith - all the complexity of distributed systems with none of the benefits. Done well, it enables independent team scaling, faster deployments, and component-level resilience.

This guide covers the Strangler Fig pattern for incremental migration, the shared database problem and how to resolve it safely, data synchronisation patterns, identifying the right service boundaries, and the anti-patterns that derail migrations before they deliver value.

Why Migrate (and Why Not To)

Before starting, be explicit about the problem you are solving:

| Real migration trigger | Not a migration trigger |

|---|---|

| Specific teams blocked from deploying independently | "We want to be modern" |

| One component (search, video processing) needs separate scaling | Rewriting for its own sake |

| Different parts require different technology stacks | The monolith works fine |

| Reliability problems caused by coupling (one bug crashes all features) | The team is small |

The most common mistake is migrating for architectural aesthetics rather than a concrete business problem. Instagram, GitHub, and Shopify ran at scale as monoliths. The question is not "should we have microservices?" but "what specific problem will microservices solve for us?"

The Strangler Fig Pattern

The safest migration approach: extract functionality incrementally, letting the new architecture grow around the old one until the monolith is empty and can be decommissioned.

Phase 0 - Current state:

Clients -> Monolith -> Single database

Phase 1 - Add API Gateway:

Clients -> API Gateway -> Monolith (all routes)

Phase 2 - Extract first service:

Clients -> API Gateway -> Monolith (all routes except /payments)

-> Payment Service (handles /payments)

Phase 3 - Extract second service:

Clients -> API Gateway -> Monolith (remaining routes)

-> Payment Service

-> Notification Service

...repeat until monolith is emptyThe API gateway is the routing control point. Each migration step:

- Build the new service alongside the monolith (do not delete monolith code yet)

- Deploy both in production

- Route a small percentage of traffic to the new service (canary)

- Monitor for errors and performance regressions

- Route 100% traffic to the new service

- Delete the code from the monolith

If a problem emerges, flip the gateway route back to the monolith - instant rollback, zero downtime.

Identifying Service Boundaries

The most important decision in a migration is where to draw boundaries. Use Domain-Driven Design's Bounded Context as the guide: each service should represent a cohesive area of the business with its own language, data, and team.

Signs of a clean boundary:

- The data belongs to one team

- The feature can be deployed without coordinating with other teams

- The domain language is distinct (order vs. payment vs. inventory)

Signs of a bad boundary:

- The new service calls the monolith for every request

- Two services share a database table

- Deploying one service requires deploying another

- The service has no business meaning (a "database wrapper" service)Identifying boundaries in the codebase

# Find modules with high internal cohesion and low external coupling

# Look at the dependency graph of your monolith

# For a Node.js/TypeScript project, use madge

npx madge --circular src/ # Find circular dependencies (bad boundaries)

npx madge --image graph.svg src/ # Visualise the full dependency graph

# For Java, use ArchUnit to measure coupling

# For Python, use import-linterThe Shared Database Problem

The hardest part of monolith migration: the monolith and new microservices often need the same data.

Initial state (acceptable for short transition):

Monolith ------+

+---> Shared PostgreSQL database

Payment Service+

Problem: tight coupling - schema changes in the monolith can break Payment ServicePhase 1: Database View / Anti-Corruption Layer

Create a stable interface between the monolith's database and the new service:

-- Payment Service reads from a dedicated view, not raw tables

CREATE VIEW payment_service.orders AS

SELECT

id,

user_id,

total_amount,

currency,

status

FROM monolith.orders

WHERE status NOT IN ('draft', 'abandoned');

-- When monolith schema changes, only the view needs updating

-- Payment Service is insulated from monolith internalsPhase 2: Dual-Write with Events

Begin separating the databases while keeping them in sync:

// Monolith: when an order is paid, write to both databases

async function recordPayment(orderId: string, paymentId: string) {

await monolithDb.transaction(async (trx) => {

// Write to monolith database (old system of record)

await trx('payments').insert({ orderId, paymentId, status: 'completed' });

// Publish event so Payment Service database stays in sync

await trx('outbox_events').insert({

eventType: 'payment.completed',

payload: JSON.stringify({ orderId, paymentId }),

createdAt: new Date()

});

});

// Outbox pattern: a separate process polls the outbox and publishes to Kafka

}// Payment Service: consumes events and maintains its own copy

kafka.consumer.on('payment.completed', async (event) => {

const { orderId, paymentId } = JSON.parse(event.value);

await paymentServiceDb.payments.upsert({ orderId, paymentId, status: 'completed' });

});Phase 3: Migrate the Source of Truth

Once the Payment Service database is fully populated and both services use event synchronisation:

- Make the Payment Service the authoritative source for payment data

- Change the monolith to read payment data from the Payment Service API (or via events)

- Delete the payments table from the monolith database

Data Synchronisation Patterns

Change Data Capture (CDC)

CDC tracks every change in the monolith's database and publishes it as events, without modifying the monolith's application code:

# Debezium CDC connector configuration

# Reads PostgreSQL WAL (Write-Ahead Log) and publishes to Kafka

{

"name": "monolith-cdc",

"config": {

"connector.class": "io.debezium.connector.postgresql.PostgresConnector",

"database.hostname": "monolith-db",

"database.port": "5432",

"database.dbname": "monolith",

"database.server.name": "monolith",

"table.include.list": "public.orders,public.users,public.inventory",

"plugin.name": "pgoutput",

"topic.prefix": "cdc.monolith"

}

}Debezium captures every INSERT, UPDATE, DELETE from the specified tables and publishes them to Kafka topics. Microservices subscribe and maintain their own read copies.

CDC is valuable because it works without any changes to the monolith application code - essential for legacy systems where the code may be difficult to modify safely.

Service Communication During Migration

During migration, the new service often needs data that still lives in the monolith:

// Synchronous: Payment Service calls Monolith API for user data

// Acceptable short-term; creates coupling

const userResponse = await fetch(`${process.env.MONOLITH_URL}/internal/users/${userId}`);

const user = await userResponse.json();// Better: Event-driven - Payment Service maintains its own user data replica

// User data synced via CDC events, no runtime dependency on monolith

const user = await paymentServiceDb.users.findById(userId);Migration communication maturity progression:

1. Direct database access (shared database) - simple but tightly coupled

2. Internal API calls to monolith - looser coupling, runtime dependency

3. Event-driven with local data copy - independent, most resilientAnti-Patterns That Derail Migrations

The Big Bang Rewrite: Stopping all feature development to rewrite everything at once. Results in months of no value delivery, accumulated business pressure, and usually a rewrite that recreates the monolith's problems in a distributed architecture.

The Distributed Monolith: Splitting code into separate deployable units without splitting data or teams. Services still share a database, must be deployed together, and fail together. All the operational complexity of microservices with none of the benefits.

Signs you've built a distributed monolith:

- Deploying Service A requires deploying Service B

- Services share a database or schema

- A single user request calls 5 services synchronously in a chain

- Services fail in concert when one is slowWrong Boundaries: Splitting on technical layers (a "database service", a "validation service") rather than business domains. Creates chatty services with no meaningful independence.

Premature Migration of Working Features: Migrating stable, low-change code to earn architectural points. The code works; leave it in the monolith. Only extract components that have a concrete business reason for independence.

Migration Checklist

Before extracting each service, verify:

[ ] The service boundary is a clear business domain (not a technical layer)

[ ] One team will own this service end-to-end

[ ] The data schema for this service is clearly separable

[ ] The service's API contract is defined (OpenAPI or protobuf)

[ ] Monitoring and alerting are configured before routing production traffic

[ ] Circuit breakers are in place for calls to/from the monolith

[ ] The rollback plan is tested (route 100% back to monolith)

[ ] Database migration plan accounts for the shared database period

[ ] The team understands distributed transactions and has saga patterns if neededFrequently Asked Questions

Q: How long does a monolith-to-microservices migration typically take?

Significant migrations at large organisations take 2-5 years. The Strangler Fig approach means value is delivered throughout - you do not wait until migration is complete to see benefits. Each extracted service that is independently deployable provides immediate value. Plan the migration in business-justified increments, not as a single project with a completion date.

Q: Should we extract the most complex part of the monolith first or the easiest part?

Extract the easiest, most clearly bounded part first. The first extraction builds the shared infrastructure (API gateway, service deployment pipeline, monitoring, service discovery) and establishes the team's capability. The learning curve is steep for distributed systems - accumulate that experience on a low-risk extraction before touching critical paths like authentication or payments.

Q: What happens to the monolith's unit tests when a service is extracted?

The relevant tests move with the code. Integration tests that tested the monolith end-to-end now become contract tests - they verify that the new service's API honours the same contract the monolith's internal API had. Use tools like Pact for consumer-driven contract testing to catch API compatibility issues before they reach production.

Q: Is it ever correct to build a new monolith feature during migration?

Yes. If a new business requirement arrives during migration, building it in the monolith is often correct: faster to deliver, easier to change as requirements evolve, and avoids premature microservice boundaries on new code whose shape is still unclear. The migration and new feature development can proceed in parallel - the Strangler Fig does not freeze the monolith.

Key Takeaway

The Strangler Fig pattern is the only safe migration strategy for production systems: extract one service at a time, route traffic incrementally, and maintain the ability to roll back instantly. The shared database is the primary technical challenge - resolve it in three phases: shared access with a view layer, dual-write with event synchronisation, then transfer of source-of-truth ownership. Avoid the anti-patterns: no big-bang rewrites, no technical-layer boundaries, no shared databases between "independent" services. Migrate only features that have a concrete business reason for independence, and stop when the remaining monolith is stable and low-change.

Read next: Multi-Agent Architecture: The Future of AI Orchestration ->

Part of the Software Architecture Hub - engineering the split.