SQL Sorting and Pagination: ORDER BY and LIMIT

SQL Sorting and Pagination: ORDER BY and LIMIT

In your computer science education, you were likely taught about QuickSort or MergeSort as CPU-bound operations. In a database, sorting is much more complex because the data often exceeds the available RAM. If you ask a database to sort $100$ GB of financial transactions on a server with $16$ GB of RAM, the engine has to orchestrate an External Merge Sort, managing thousands of temporary files on the disk.

This 1,500+ word guide is your deep-dive into the "Presentation Layer." We will explore how to move from "Basic Sorting" to "Architectural Efficiency."

1. The Sort Engine: Internal vs. External Sorting

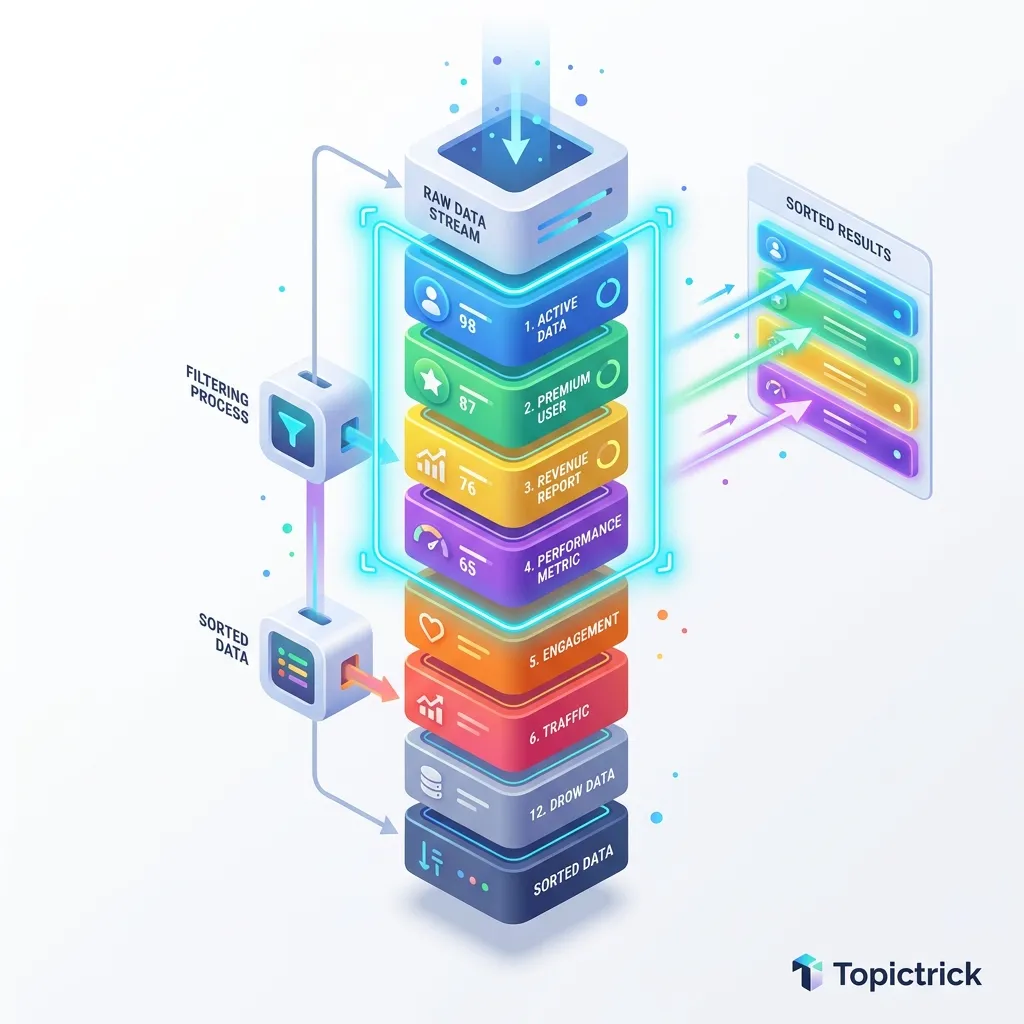

By default, an RDBMS stores rows in a "Heap"-essentially an unordered collection of pages. When you run ORDER BY, the engine must determine the physical path to reorganize those results.

The Index Advantage (No-Sort)

If you sort by a column that has a B-Tree Index, the engine doesn't "Sort" anything. It simply traverses the index pointers in order.

- The Physics: It uses Sequential Read patterns, which are highly optimized by the OS's read-ahead cache. This is the only way to sort millions of rows in milliseconds.

The RAM Sort (In-Memory)

If there is no index, the engine allocates a chunk of RAM (Postgres calls this work_mem).

- If the result set fits in this RAM: The engine perform a quicksort or heapsort in memory.

- Limit: If you have 100 users sorting data simultaneously, and

work_memis set to 64MB, you are using 6.4GB of RAM just for sorting.

The Disk Sort (External Merge)

If the data exceeds work_mem, the engine performs an External Merge Sort:

- It sorts chunks of data in RAM and writes them to the

tmpdirectory on the hard drive. - It then "Merges" these sorted files together to produce the final result.

- Performance Warning: A "Disk Sort" is $100$x to $1,000$x slower than a RAM sort. In production, this is a "System-Level failure."

The "Abbreviated Keys" Optimization

To speed up string and numeric sorts, modern Postgres uses Abbreviated Keys.

- The Mirror Physics: Dereferencing a pointer to a string on a data page is expensive for the CPU cache.

- The Optimization: The engine copies the first 8 bytes of the value (the "prefix") into a compact array in RAM. It sorts these prefixes first.

- The Result: Most comparisons are resolved by the prefix alone, avoiding the need to visit the actual data page for the majority of the sort operation.

Top-N Optimization: The Heap Sort Mirror

If you provide a LIMIT, the engine might switch from a global sort to a Top-N Heap Sort.

- The Algorithm: Instead of sorting all $1$ million rows, the engine maintains a Priority Queue (Min-Heap) of the top $N$ items.

- The Physics: As it scans the table, it only keeps a few rows in RAM. If a new row is better than the "Worst" item in the current Top-N, it replaces it.

- Why this matters: This allows the database to sort millions of rows using a tiny amount of memory, as long as your requested

LIMITis small.

2. Deterministic Sorting: The Tie-Breaker Rule

One of the most common bugs in SQL pagination is Non-Deterministic Results. If you sort by a column with duplicate values (like created_at or category), the database is free to return those rows in any order it chooses.

The Bug

- User looks at Page 1. Row A and Row B have the same timestamp. Row A appears.

- User clicks Page 2. Row A appears again because the database changed its mind about the order of duplicate timestamps during the second query. The user missed Row B entirely.

The Architect's Solution

Always include a Unique Column (usually the Primary Key) as a "Tie-Breaker" in your sort.

ORDER BY created_at DESC, id DESC;

This ensures that the order is mathematically guaranteed to be the same every time the query is run.

3. The Performance Trap: LIMIT and OFFSET

Pagination in amateur tutorials is almost always implemented like this:

SELECT * FROM transactions ORDER BY id LIMIT 20 OFFSET 1000000;

The Hardware Reality of OFFSET

To "Skip" $1,000,000$ rows, the database engine must physically read those $1,000,000$ rows, count them, and then throw them away.

- The $O(N)$ Problem: As the user scrolls deeper (to Page 5,000 or 10,000), the query takes longer and longer. Eventually, the query times out, and your database hits 100% Disk I/O.

- The Wall of Data: This is why enterprise systems (like Google Search) don't let you browse to the $10,000$th page of results.

4. Keyset Pagination (Cursor-Based): The Professional Way

To build a high-performance system like an infinite-scroll feed (TikTok, Instagram), you must use Keyset Pagination. Instead of "Skipping" rows, you ask for data immediately follows the last item you saw.

The Strategy

Imagine you just finished viewing ID 12500.

SELECT * FROM transactions WHERE id < 12500 ORDER BY id DESC LIMIT 20;

Why this is the "Golden Path":

- Speed: The

WHERE id < 12500allows the engine to use the B-Tree index to jump directly to the starting point. It only reads exactly 20 rows. - Consistency: If 5 new transactions are added while the user is reading, they are added at the top ($id > 12500$), so the user's "Next" page stays perfectly stable.

- Efficiency: The performance of Page 1 is identical to the performance of Page 1,000,000.

5. Case Study: The "Reports" Crash

A SaaS company had a dashboard that allowed users to sort their $5,000,000$ invoices by "Total Amount."

- When a user clicked "Sort by Amount," the database had to perform an External Merge Sort because $5,000,000$ rows didn't fit in the

work_mem. - Because $10$ users clicked this at the same time, the server ran out of disk space in the

tmpfolder and crashed the entire production environment.

The Fix

- Composite Index: They added an index on

amount. - Top-N Optimization: They restricted the UI so users could only see the "Top $1,000$" results. By adding a

LIMIT, the SQL engine used a Heap Sort algorithm that only keeps the best 1,000 items in memory, avoiding the disk sort entirely.

6. Summary: The Presentation Checklist

- Index Your Sorts: If you

ORDER BYa column, it must have an index for production-scale tables. - Explicit NULLs: Use

NULLS LASTto ensure UI consistency across different database types (Postgres vs MySQL). - Kill the OFFSET: Switch to Keyset Pagination for any table larger than $50,000$ rows.

- Deterministic Sorts: Always add the

Primary Keyas the final tie-breaker in yourORDER BYclause. - Audit Temp Files: Monitor your database metrics for "Temp File Writes." If you see them, your

work_memis too low or your indexes are missing.

Mastering sorting and pagination is the transition from "Retrieving data" to "Architecting User Velocity." By understanding the hardware limits of the Sort Buffer and the algorithmic trap of OFFSET, you build applications that feel "Snappy" even when managing datasets that move across continents.

7. The Keyset Mirror: Advanced Paging Logic

For the ultimate performance, we use Tuple Comparison for keyset pagination.

The Physics of (A, B) > (X, Y)

If you are sorting by created_at and id, your cursor must handle the tie-breaker correctly.

WHERE (created_at, id) < ('2026-04-18 12:00:00', 5050)

- The Engine Reality: Most modern engines optimize this into a range scan that covers both conditions in a single index seek.

- Result: You achieve perfect $O(\log N)$ performance regardless of where the user is in the data mirror.

Masterclass Presentation Checklist

- Audit Sort Determinism: Ensure every query has a unique tie-breaker (Primary Key).

- Implement Keyset Pagination: Replace

OFFSETwith cursor-basedWHERElogic for large tables. - Configure work_mem: Set the sort buffer large enough for 99% of your common queries to avoid disk swaps.

- Monitor log_temp_files: Configure your database to log any sort that spills to the storage mirror.

Read next: SQL Data Types: The Storage Mirror ->

Frequently Asked Questions

Q: What is the problem with OFFSET-based pagination for large datasets?

OFFSET pagination (LIMIT 20 OFFSET 1000) requires the database to scan and discard all rows before the offset on every page request. Page 1,000 of 20 rows requires processing 20,000 rows internally - performance degrades linearly as pages go deeper. For large tables, deep offsets become unacceptably slow. The solution is keyset pagination (cursor-based): record the last seen primary key value and use WHERE id > last_id ORDER BY id LIMIT 20. This is O(1) regardless of page depth because the index goes directly to the starting point.

Q: When should I use NULLS FIRST or NULLS LAST in ORDER BY?

By default, PostgreSQL sorts NULLs last in ascending order and first in descending order. MySQL and SQL Server treat NULLs as smaller than any value. When NULLs in sorted output would mislead users - for example, if NULL means "not yet rated" and you want those rows at the bottom regardless of sort direction - use ORDER BY rating DESC NULLS LAST to control their position explicitly. Always test sort behaviour with NULL-containing data when output ordering is user-facing.

Q: What is the difference between LIMIT and FETCH FIRST in SQL?

LIMIT n is widely supported (PostgreSQL, MySQL, SQLite) but is not standard SQL. FETCH FIRST n ROWS ONLY is the SQL standard equivalent supported by PostgreSQL, SQL Server, and DB2. They are functionally identical in most contexts. When writing SQL intended to be portable across databases (or when working in a corporate environment with SQL Server or DB2), prefer the standard FETCH FIRST syntax. When writing PostgreSQL or MySQL specifically, LIMIT is fine and more concise.