Caching Strategy: CDNs, Sidecars, and Memory Buffers

As an architect, your primary enemy is Latency. Latency is not just a software property; it is a physical reality governed by the speed of light and the access time of storage hardware. Every microservice call that reaches a disk-backed database, whether "Mechanical" or "Solid State," is a potential bottleneck.

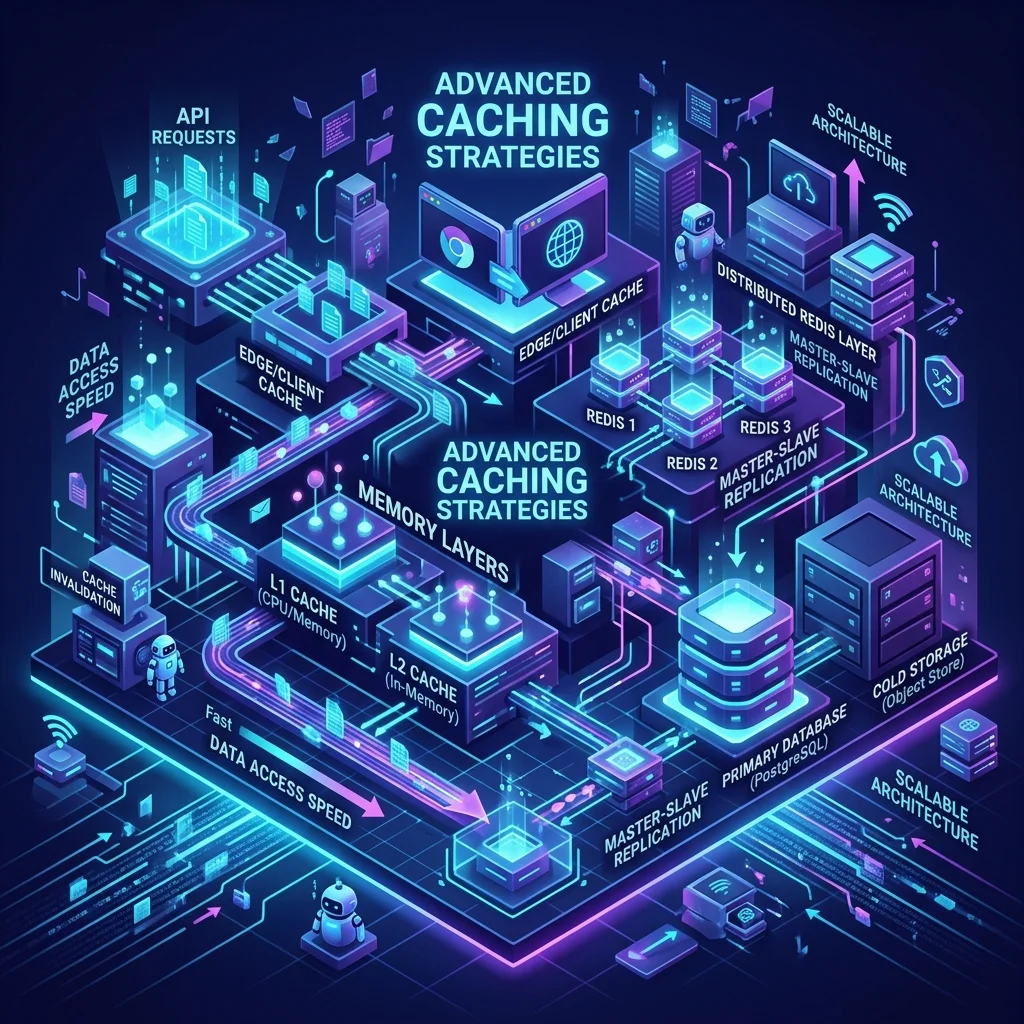

This 1,500+ word deep dive investigates the Caching Pyramid. We will move beyond simple "Key-Value" storage and explore how to orchestrate global content delivery networks (CDNs), sidecar proxies, and in-pod memory buffers to build systems that feel "Instant" to every human on Earth.

1. Hardware-Mirror: The CPU Cache Line Physics

Most developers treat memory as a single block. High-performance architects treat it as a series of Cache Lines.

The physics of the 64-Byte Line

- How it works: When a CPU fetches a single 4-byte integer from RAM, it doesn't just get that integer. It fetches the entire block (a Cache Line) that the integer belongs to, which is 64 bytes on most x86-64 processors.

- Why this matters: If you store related items (e.g., an object's properties) contiguously in memory, the CPU fetches them all in one "Memory Hop." If your data is scattered, the CPU must perform a slow "RAM Fetch" for every field.

- False Sharing: This is a performance killer where two different threads update two separate variables that accidentally live on the same cache line. The cores are forced to "Sync" the line between their caches on every write, which can cut throughput severalfold even though the threads never touch each other's data.
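
The locality argument above can be made concrete with a little arithmetic. The sketch below is illustrative only: it counts how many distinct cache lines a set of field addresses would occupy, assuming a 64-byte line. The function name `cache_lines_touched` and the example addresses are hypothetical, and real hardware adds effects (prefetching, associativity) that this ignores.

```python
def cache_lines_touched(addresses, field_size=4, line_size=64):
    """Count the distinct cache lines covered by fields at the given byte addresses."""
    lines = set()
    for addr in addresses:
        first = addr // line_size
        last = (addr + field_size - 1) // line_size
        # A field that straddles a boundary occupies more than one line.
        lines.update(range(first, last + 1))
    return len(lines)


# Sixteen 4-byte fields packed back to back, starting on a line boundary.
contiguous = [i * 4 for i in range(16)]
# The same sixteen fields, each 4 KiB apart (e.g. separate heap allocations).
scattered = [i * 4096 for i in range(16)]

contiguous_lines = cache_lines_touched(contiguous)
scattered_lines = cache_lines_touched(scattered)
print(contiguous_lines, scattered_lines)  # 1 16
```

Under these assumptions the packed layout needs one line fetch where the scattered layout needs sixteen.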

The Latency Pyramid (Review)

| Tier | Location | Latency (Approx) | Scale Comparison (if 1 ns were 1 second) |
|---|---|---|---|
| L1 Cache | On-CPU | 1.0 ns | 1 second |
| L3 Cache | On-CPU | 20 ns | 20 seconds |
| RAM | Motherboard | 100 ns | About 1.7 minutes |
| Redis | Internal Network | 500 µs | About 5.8 days |

Architecture Rule: Your goal is to keep the "Hot Data" in the CPU's L1/L2 cache as much as possible. This is the foundation of Data-Oriented Design.

2. Distributed Caching Physics: The "Revolving Door"

When your Redis or Memcached server is full, it must decide what to delete. This is Eviction Geometry.

LRU (Least Recently Used)

- The Concept: Discard the item that hasn't been accessed for the longest time.

- The Weakness: It is vulnerable to a "Scan." If a background job reads every item once, it will flush the entire "Truly Hot" cache and replace it with "One-time" junk.
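
The scan weakness is easy to reproduce. The following is a minimal LRU sketch built on `collections.OrderedDict`; the class name `LRUCache` and the key names are made up for illustration, and it is not how Redis or Memcached implement eviction internally.

```python
from collections import OrderedDict


class LRUCache:
    """Minimal LRU cache: the least recently used key is evicted first."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.items = OrderedDict()  # oldest entry first, newest last

    def get(self, key):
        if key not in self.items:
            return None
        self.items.move_to_end(key)  # mark as most recently used
        return self.items[key]

    def put(self, key, value):
        self.items[key] = value
        self.items.move_to_end(key)
        if len(self.items) > self.capacity:
            self.items.popitem(last=False)  # evict the least recently used key


cache = LRUCache(capacity=3)
for key in ("home", "cart", "user"):
    cache.put(key, f"hot:{key}")
cache.get("home")  # the hot keys are in active use

# A background job now reads three items exactly once each.
for i in range(3):
    cache.put(f"scan:{i}", "one-time")

survivors = list(cache.items)
print(survivors)  # ['scan:0', 'scan:1', 'scan:2']
```

After the scan, every hot key has been replaced by an entry that will never be read again.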

ARC (Adaptive Replacement Cache)

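Used in high-performance systems like the ZFS filesystem. (PostgreSQL shipped ARC briefly in version 8.0 but replaced it; its buffer manager now uses a clock-sweep algorithm.)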

- The Logic: It tracks both "Frequency" and "Recency."

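- The Physics: It maintains two lists of cached entries, one for "Recency" and one for "Frequency," plus two "ghost" lists that remember recently evicted keys. If a piece of data is accessed a second time, it moves to the "Frequency" list, and ARC uses hits on the ghost lists to rebalance how much space each list gets. A one-time scan only churns the "Recency" list, so it cannot destroy your cache hit rate.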
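
To show why a second list gives scan resistance, here is a deliberately simplified sketch. It is a fixed-size segmented LRU, not full ARC: real ARC also keeps ghost lists of evicted keys and resizes the two lists adaptively. The class name `TwoListCache` and its capacities are assumptions made for the example.

```python
from collections import OrderedDict


class TwoListCache:
    """Simplified recency/frequency cache (segmented LRU), NOT full ARC."""

    def __init__(self, recent_capacity, frequent_capacity):
        self.recent_capacity = recent_capacity
        self.frequent_capacity = frequent_capacity
        self.recent = OrderedDict()    # keys touched once
        self.frequent = OrderedDict()  # keys touched at least twice

    def get(self, key):
        if key in self.frequent:
            self.frequent.move_to_end(key)
            return self.frequent[key]
        if key in self.recent:
            value = self.recent.pop(key)
            self._promote(key, value)  # second touch: move to the frequent list
            return value
        return None

    def put(self, key, value):
        if key in self.frequent:
            self.frequent[key] = value
            self.frequent.move_to_end(key)
        elif key in self.recent:
            del self.recent[key]
            self._promote(key, value)  # a rewrite also counts as reuse here
        else:
            self.recent[key] = value
            if len(self.recent) > self.recent_capacity:
                self.recent.popitem(last=False)  # evict from the recent list only

    def _promote(self, key, value):
        self.frequent[key] = value
        if len(self.frequent) > self.frequent_capacity:
            self.frequent.popitem(last=False)


cache = TwoListCache(recent_capacity=3, frequent_capacity=3)
for key in ("home", "cart", "user"):
    cache.put(key, f"hot:{key}")
    cache.get(key)  # second access promotes the key

# A scan of 100 one-time keys only churns the recent list.
for i in range(100):
    cache.put(f"scan:{i}", "one-time")

hot_survivors = sorted(cache.frequent)
print(hot_survivors)  # ['cart', 'home', 'user']
```

The same scan that emptied a plain LRU leaves the reused keys untouched, because one-time entries never reach the frequent list.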

3. Global Edge Caching: The Speed of Light Tax

A CDN (Content Delivery Network) is essentially a Geographic Cache.

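- The Physics: If your server is in Virginia and your user is in Tokyo, the signal must cover roughly 11,000 km each way. Light in optical fiber travels at about two-thirds of its vacuum speed, so the round trip cannot beat roughly 110 ms, and real routes typically land around 150 ms or more.

- The Solution: Edge Caching. By storing assets in a Tokyo Point-of-Presence (PoP), you can cut the trip to around 10 ms for nearby users.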

- Advanced Pattern: Stale-While-Revalidate.

  - The CDN serves the "Stale" (old) version of the page instantly.

  - In the background, it fetches the "Fresh" version and updates its cache for the next user.

  - The Benefit: No user waits on the origin fetch when content changes. The trade-off is that a slightly outdated version can be served until the background refresh completes.
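
In HTTP, this pattern is usually requested with a response header such as `Cache-Control: max-age=60, stale-while-revalidate=300` (defined in RFC 5861; support varies by CDN). The sketch below imitates the behaviour in-process so the three states are visible. The class `SWRCache`, its method names, and the explicit `now` argument are all hypothetical, and the "background" refresh is modelled as a queue that the caller drains, purely to keep the example deterministic.

```python
class SWRCache:
    """Toy stale-while-revalidate cache driven by an explicit clock."""

    def __init__(self, fetch, max_age, stale_window):
        self.fetch = fetch                # function that loads from the origin
        self.max_age = max_age            # seconds an entry counts as fresh
        self.stale_window = stale_window  # extra seconds it may be served stale
        self.entries = {}                 # key -> (value, stored_at)
        self.pending = set()              # keys queued for background refresh

    def get(self, key, now):
        entry = self.entries.get(key)
        if entry is not None:
            value, stored_at = entry
            age = now - stored_at
            if age <= self.max_age:
                return value, "fresh"
            if age <= self.max_age + self.stale_window:
                self.pending.add(key)     # refresh later, answer immediately
                return value, "stale"
        # Miss, or too old even for stale serving: the caller waits on the origin.
        value = self.fetch(key)
        self.entries[key] = (value, now)
        self.pending.discard(key)
        return value, "miss"

    def run_background_refresh(self, now):
        for key in list(self.pending):
            self.entries[key] = (self.fetch(key), now)
        self.pending.clear()


origin = {"/home": "v1"}
cache = SWRCache(fetch=lambda key: origin[key], max_age=60, stale_window=300)

first = cache.get("/home", now=0)     # ('v1', 'miss')
origin["/home"] = "v2"                # the page is updated at the origin
second = cache.get("/home", now=30)   # ('v1', 'fresh')
third = cache.get("/home", now=90)    # ('v1', 'stale'), refresh queued
cache.run_background_refresh(now=90)
fourth = cache.get("/home", now=91)   # ('v2', 'fresh')
print(first, second, third, fourth)
```

Only the very first request paid the origin round trip; the update to `v2` reached users without anyone waiting for it.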

3. Layer 2: The Sidecar Proxy (Pod-Local Speed)

In a microservice Mesh (Review Module 69), you can implement caching inside the service's Sidecar (e.g., Envoy).

- The Logic: Instead of your code calling the database, it calls `localhost`. The sidecar intercepts the request and checks its local memory first.

- Hardware-Mirror: This uses the Loopback Interface, which never leaves the host. A call still passes through the kernel's network stack, so it is slower than a direct RAM read, but it avoids the physical "Network Hop" entirely.

- Use Case: Frequently read, rarely changed configuration data or "Product Metadata" for an e-commerce catalog.

4. Layer 3: Shared Memory (The Redis/Memcached Core)

When you need a "Single Source of Truth" that is faster than a database, you reach for an In-Memory store.

- Redis (Single-Threaded Physics): Because Redis executes commands on a single thread, it avoids "Lock Contention," making it very fast for simple O(1) operations. However, a single slow O(N) command (like `KEYS *` on a large keyspace) blocks every other client until it finishes; prefer the incremental `SCAN` command in production.

- Memcached (Multi-Threaded Throughput): Better for "Dead-Simple" caching of large objects where persistence is not required.

5. Defense: The "Thundering Herd" & Stability Patterns

One of the most dangerous moments for a system is when a "Hot" cache key (e.g., "HOMEPAGE_DATA") expires.

- The Problem: 10,000 concurrent requests see a "Cache Miss" and all hit the database in the same instant. The database is overwhelmed and may crash. This is a Thundering Herd (also called a cache stampede).

Stability Strategy 1: Probabilistic Jitter

Never set your TTLs to exact numbers (e.g., 3,600s). Add a random "Jitter" of 1-30 seconds. This spreads expirations out, so that 1,000 keys cached at the same moment don't all expire at once.
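
A minimal sketch of the jitter rule, using the 3,600-second base and 1-30 second range from the text. The helper name `ttl_with_jitter` is hypothetical, and the seeded generator is there only to make the demonstration repeatable.

```python
import random


def ttl_with_jitter(base_ttl=3600, max_jitter=30, rng=random):
    """Return the base TTL plus a random 1..max_jitter second offset."""
    return base_ttl + rng.randint(1, max_jitter)


# Simulate 1,000 keys that are all written at the same moment.
rng = random.Random(42)  # seeded only so the demo is repeatable
ttls = [ttl_with_jitter(rng=rng) for _ in range(1000)]

print(min(ttls), max(ttls), len(set(ttls)))
```

The resulting value is what you would pass as the expiry when writing the key, for example the `ex=` argument of `set` in the redis-py client.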

Stability Strategy 2: Bloom Filters

Before checking the cache or the DB, check a Bloom Filter (Review Module 15).

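- The Logic: A Bloom Filter is a space-efficient bit-array that tells you if a key "Definitely Doesn't Exist." It can return false positives (a "maybe" for an absent key) but never false negatives, so a "no" can be trusted.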

- Benefit: It prevents "Cache Penetration Attacks" where a hacker tries to query millions of non-existent IDs to bypass your cache and crash your database.
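
The guard described above might look like the following sketch. It is a small hand-rolled filter for illustration, not a production library: the class `BloomFilter`, the sizing (16,384 bits, 4 hashes), the `product:` key format, and the `lookup` helper are all assumptions.

```python
import hashlib


class BloomFilter:
    """Tiny Bloom filter: no false negatives, occasional false positives."""

    def __init__(self, num_bits=16384, num_hashes=4):
        self.num_bits = num_bits
        self.num_hashes = num_hashes
        self.bits = bytearray((num_bits + 7) // 8)

    def _positions(self, key):
        # Derive several bit positions by salting one hash function with a seed.
        for seed in range(self.num_hashes):
            digest = hashlib.sha256(f"{seed}:{key}".encode("utf-8")).digest()
            yield int.from_bytes(digest[:8], "big") % self.num_bits

    def add(self, key):
        for pos in self._positions(key):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def might_contain(self, key):
        return all(
            self.bits[pos // 8] & (1 << (pos % 8)) for pos in self._positions(key)
        )


# Register every ID that really exists (hypothetical catalog of 1,000 products).
known_ids = BloomFilter()
for product_id in range(1000):
    known_ids.add(f"product:{product_id}")


def lookup(key):
    if not known_ids.might_contain(key):
        return "rejected"  # definitely absent: skip both cache and database
    return "check cache, then database"  # present, or a rare false positive


print(lookup("product:5"))
```

A request for an ID that was never registered is almost always rejected before it touches the cache or the database; the few false positives simply fall through to the normal lookup path.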

6. Summary: The Caching Architect's Checklist

- Analyze the Hit-Rate: A cache with a hit rate of less than 50% is often a "Net Negative" because you are paying the latency tax of checking the cache AND then the database.

- Invalidation Strategy: Choose your weapon. Write-Through for high consistency; Cache-Aside for simplicity; Write-Back for extreme performance (at the risk of data loss).

- Monitoring: Always track "Cache Serialization Latency." Sometimes, the time it takes to "JSON Stringify" a huge object is slower than just querying a small row from the database.

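- Hardware Awareness: Monitor your eviction rate as well as your Eviction Policy. If your Redis is "Evicting" keys constantly (for example, a steadily climbing evicted_keys counter in its INFO stats), your RAM is too small for your working set.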

- Reverence for TTL: Treat every TTL as a contract. Do not "Hard-Code" them; make them configurable via your IDP (Review Module 57).
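
The hit-rate item in the checklist can be checked with simple arithmetic. The sketch below uses a deliberately naive model in which every request pays the cache lookup and every miss also pays the database query. The latencies (0.5 ms cache, 5 ms database) are assumed figures, and the model ignores memory cost, serialization, and operational overhead, which is part of why a higher rule-of-thumb threshold is common in practice.

```python
def expected_latency_ms(hit_rate, cache_ms, db_ms):
    """Every request pays the cache check; misses also pay the database."""
    return cache_ms + (1.0 - hit_rate) * db_ms


def break_even_hit_rate(cache_ms, db_ms):
    """Hit rate at which the cached path costs the same as going straight to the DB."""
    return cache_ms / db_ms


CACHE_MS = 0.5  # assumed cache round trip
DB_MS = 5.0     # assumed database query time

at_half = expected_latency_ms(0.50, CACHE_MS, DB_MS)
at_low = expected_latency_ms(0.05, CACHE_MS, DB_MS)
threshold = break_even_hit_rate(CACHE_MS, DB_MS)

print(at_half, at_low, threshold)
```

With these assumed numbers, a 5% hit rate is slower on average than skipping the cache, while a 50% hit rate is clearly faster. Measure your own latencies before settling on a threshold.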

Caching is the ultimate act of Orchestrating Throughput. By using CDNs for global reach, Sidecars for pod-local speed, and Redis for shared state, you reduce the physical load on your core database and maximize the value of every CPU cycle. You graduate from "Storing data" to "Architecting the Instantaneous Application."

Phase 65: Performance Actions

- Measure the "Cache Hit Ratio" on your primary production cluster.

- Implement "Jitter" on all cache TTLs.

- Profile your data structures for Cache Locality: Use a tool like `valgrind --tool=cachegrind` to measure L1 cache misses in your core logic.

- Evaluate the ARC vs LRU performance for your specific workload.

Part of the Software Architecture Hub — engineering the instant.