C Linked Lists: Singly, Doubly & Circular - A Complete Implementation Guide

C Linked Lists: Singly, Doubly & Circular - A Complete Implementation Guide

Table of Contents

- Why Linked Lists? Arrays vs Linked Lists

- The Anatomy of a Linked List Node

- Singly Linked List: Full Implementation

- Doubly Linked List: Two-Way Traversal

- Circular Linked List: Round-Robin Scheduling

- Memory Management: Freeing the Entire List

- The LRU Cache Pattern

- Intrusive Linked Lists: The Linux Kernel Approach

- Performance Analysis: Cache Misses and Fragmentation

- Frequently Asked Questions

- Key Takeaway

Why Linked Lists?

To choose the right data structure, you must understand the trade-offs at the memory level:

| Operation | Array | Linked List |

|---|---|---|

Index access O(1) | ✅ Instant | ❌ Must traverse |

| Search | O(n) | O(n) |

| Insert at head | O(n) (shift all) | ✅ O(1) |

| Insert at tail | O(1) amortized | O(1) with tail pointer |

| Delete at known position | O(n) (shift) | ✅ O(1) |

| Memory overhead | None | 8-byte next pointer per node |

| Cache performance | ✅ Excellent | ❌ Poor (scattered heap) |

| Dynamic sizing | Requires realloc | ✅ Natural |

Use a linked list when: You need frequent insertions/deletions at arbitrary positions, memory fragmentation is not a concern, and sequential traversal is acceptable.

Use an array when: You need random access by index, cache performance is critical, or the dataset is mostly read and rarely modified.

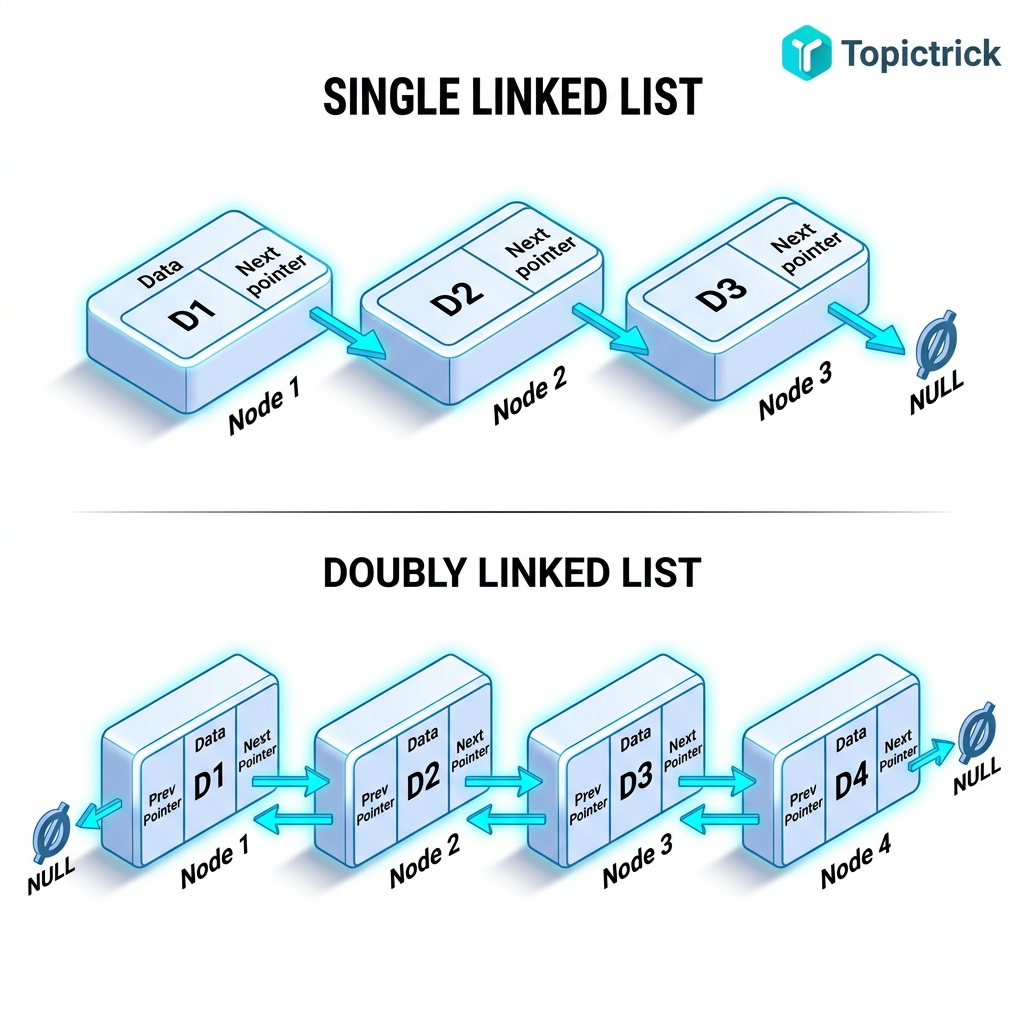

The Anatomy of a Linked List Node

Each node in a linked list is a self-referential struct: it holds data and a pointer to the next node of the same type:

#include <stdlib.h>

#include <stdio.h>

#include <stdint.h>

// Singly linked node

struct Node {

int32_t data; // The payload

struct Node *next; // Pointer to next node, or NULL if last

};

// Convenience typedef

typedef struct Node Node;

// Factory function: allocate and initialize a node

Node *node_create(int32_t value) {

Node *n = malloc(sizeof(Node));

if (!n) return NULL; // Always check allocation

n->data = value;

n->next = NULL;

return n;

}The key insight: struct Node *next is a pointer to the same struct type. This self-reference is what creates the chain. Each node can be anywhere in heap memory - they do not need to be contiguous.

Singly Linked List: Full Implementation

A complete, production-quality singly linked list with all fundamental operations:

#include <stdlib.h>

#include <stdio.h>

#include <stdint.h>

#include <stdbool.h>

typedef struct Node {

int32_t data;

struct Node *next;

} Node;

typedef struct {

Node *head;

Node *tail; // Tail pointer for O(1) append

size_t length;

} LinkedList;

// Initialize an empty list

void list_init(LinkedList *list) {

list->head = NULL;

list->tail = NULL;

list->length = 0;

}

// Prepend: O(1) - insert at head

bool list_prepend(LinkedList *list, int32_t value) {

Node *n = malloc(sizeof(Node));

if (!n) return false;

n->data = value;

n->next = list->head;

list->head = n;

if (!list->tail) list->tail = n; // First node is also the tail

list->length++;

return true;

}

// Append: O(1) thanks to tail pointer

bool list_append(LinkedList *list, int32_t value) {

Node *n = malloc(sizeof(Node));

if (!n) return false;

n->data = value;

n->next = NULL;

if (list->tail) {

list->tail->next = n;

} else {

list->head = n; // Empty list: new node is both head and tail

}

list->tail = n;

list->length++;

return true;

}

// Remove first node: O(1)

bool list_pop_front(LinkedList *list, int32_t *out_value) {

if (!list->head) return false;

Node *removed = list->head;

*out_value = removed->data;

list->head = removed->next;

if (!list->head) list->tail = NULL; // List is now empty

free(removed);

list->length--;

return true;

}

// Find a value: O(n)

Node *list_find(const LinkedList *list, int32_t value) {

for (Node *n = list->head; n != NULL; n = n->next) {

if (n->data == value) return n;

}

return NULL;

}

// Delete first occurrence of a value: O(n)

bool list_delete(LinkedList *list, int32_t value) {

Node **current = &list->head; // Pointer to pointer trick - elegant deletion

while (*current) {

Node *node = *current;

if (node->data == value) {

*current = node->next; // Bypass the node

if (node == list->tail) list->tail = NULL; // Update tail if needed

free(node);

list->length--;

return true;

}

current = &node->next; // Move to the next node's next pointer

}

return false;

}

// Print the entire list

void list_print(const LinkedList *list) {

printf("List [%zu]: ", list->length);

for (Node *n = list->head; n != NULL; n = n->next) {

printf("%d", n->data);

if (n->next) printf(" -> ");

}

printf(" -> NULL\n");

}

// Free all nodes - MUST call when done

void list_destroy(LinkedList *list) {

Node *current = list->head;

while (current) {

Node *next = current->next; // Save next BEFORE freeing

free(current);

current = next;

}

list->head = NULL;

list->tail = NULL;

list->length = 0;

}

int main(void) {

LinkedList list;

list_init(&list);

list_append(&list, 10);

list_append(&list, 20);

list_append(&list, 30);

list_prepend(&list, 5);

list_print(&list); // List [4]: 5 -> 10 -> 20 -> 30 -> NULL

list_delete(&list, 20);

list_print(&list); // List [3]: 5 -> 10 -> 30 -> NULL

list_destroy(&list);

return 0;

}Doubly Linked List: Two-Way Traversal

Adding a prev pointer enables O(1) backward traversal and more efficient deletion (no need to track the previous node separately):

typedef struct DNode {

int32_t data;

struct DNode *next;

struct DNode *prev;

} DNode;

typedef struct {

DNode *head;

DNode *tail;

size_t length;

} DLinkedList;

// Insert after a known node: O(1) - the power of doubly linked lists

void dll_insert_after(DLinkedList *list, DNode *pos, int32_t value) {

DNode *new_node = malloc(sizeof(DNode));

if (!new_node) return;

new_node->data = value;

new_node->next = pos->next;

new_node->prev = pos;

if (pos->next) {

pos->next->prev = new_node;

} else {

list->tail = new_node; // Was last node

}

pos->next = new_node;

list->length++;

}

// Delete a known node: O(1) - no traversal needed

void dll_delete_node(DLinkedList *list, DNode *node) {

if (node->prev) node->prev->next = node->next;

else list->head = node->next;

if (node->next) node->next->prev = node->prev;

else list->tail = node->prev;

free(node);

list->length--;

}Circular Linked List: Round-Robin Scheduling

In a circular linked list, the last node's next pointer points back to the head. This creates an endless cycle - perfect for round-robin scheduling and game loops:

#include <stdio.h>

#include <stdlib.h>

typedef struct CNode {

int32_t process_id;

struct CNode *next;

} CNode;

// Round-robin scheduler: give each process one time slice

void round_robin(CNode *head, int total_ticks) {

CNode *current = head;

for (int tick = 0; tick < total_ticks; tick++) {

printf("Tick %2d: Running Process %d\n", tick, current->process_id);

current = current->next; // Advance to next process (wraps around)

}

}

int main(void) {

// Create circular list: PID 1 -> PID 2 -> PID 3 -> (back to PID 1)

CNode *p1 = malloc(sizeof(CNode)); p1->process_id = 1;

CNode *p2 = malloc(sizeof(CNode)); p2->process_id = 2;

CNode *p3 = malloc(sizeof(CNode)); p3->process_id = 3;

p1->next = p2;

p2->next = p3;

p3->next = p1; // Circular!

round_robin(p1, 9); // Runs 3 full cycles

free(p1); free(p2); free(p3);

return 0;

}Memory Management: Freeing the Entire List

The most critical rule: every node was individually malloc'd, so every node must be individually free'd:

void list_destroy(LinkedList *list) {

Node *current = list->head;

while (current != NULL) {

Node *next_node = current->next; // CRITICAL: save next BEFORE freeing

free(current); // Now safe to free

current = next_node; // Advance to saved pointer

}

list->head = NULL;

list->tail = NULL;

list->length = 0;

}The most common mistake is using current->next after free(current) - accessing a freed node's data is undefined behavior.

The LRU Cache Pattern

An LRU (Least Recently Used) cache is one of the most common uses of a doubly linked list in production systems. It maintains a list ordered by recency: most recently accessed at the head, least recently accessed at the tail:

// Combined hash table + doubly linked list for O(1) LRU operations

// - Hash table: O(1) lookup by key

// - Doubly linked list: O(1) move-to-front (update recency)

// - Tail deletion: O(1) eviction of least recently used

// (Full implementation is the Module 27 project - LRU Cache Engine)

// Key pattern:

// On cache hit: move node to head (O(1) with doubly linked list)

// On cache miss: insert at head, delete tail if capacity exceededRedis, memcached, and virtually every operating system page replacement policy use this exact pattern.

Intrusive Linked Lists: The Linux Kernel Approach

The Linux kernel's list implementation is different from the textbook approach. Instead of a node containing data, the data struct contains the list node. This is the "intrusive" linked list pattern:

// Linux kernel style (simplified)

struct list_head {

struct list_head *next;

struct list_head *prev;

};

// Data struct embeds the list node intrustively

struct Task {

int pid;

char name[16];

struct list_head list; // Embedded node - not a pointer to a separate node

};

// container_of recovers the Task from a list_head pointer

#define container_of(ptr, type, member) \

((type*)((char*)(ptr) - offsetof(type, member)))Advantages: A single task can be on multiple lists simultaneously (run queue, wait queue, timer list) by embedding multiple list_head members. No extra allocation per node - the node is embedded directly in the data struct.

Performance Analysis: Cache Misses and Fragmentation

The biggest practical disadvantage of linked lists is cache performance. Nodes are allocated individually with malloc, which scatters them throughout the heap:

- Array sequential iteration: CPU prefetcher loads the next 64 bytes (up to 16 ints) into L1 cache automatically.

- Linked list sequential iteration: Each

->nextpointer follows an address that may be anywhere in virtual memory - potentially a cache miss on every node.

On modern CPUs, an L1 cache miss costs ~4 cycles; an L2 miss costs ~12 cycles; an L3 miss costs ~40 cycles; a RAM fetch costs ~200 cycles. For large linked lists in performance-critical code, this is a severe bottleneck.

Solutions:

- Memory pool allocator: Pre-allocate a pool of nodes from a single large block. Adjacent nodes are then near each other in memory.

- Indexed linked lists: Use array indices instead of pointers - nodes live in an array, and

nextis an integer index. - Slab allocator: Used in the Linux kernel - pre-allocates fixed-size objects in contiguous slabs.

Frequently Asked Questions

Why is insert-at-head O(1) but insert-at-middle O(n)?

Inserting at the head requires only updating one pointer (new->next = head; head = new). Inserting at an arbitrary position requires first finding that position, which requires traversing from the head - an O(n) operation. If you already have a pointer to the node, insertion is O(1) (which is why doubly linked lists are preferred - deletion of a known node is O(1) without the traversal).

How does the Linux kernel implement its linked list?

The kernel uses struct list_head - a doubly linked circular list embedded as a field inside the data structure (struct task_struct, struct inode, etc.). This intrusive design allows a single kernel object to participate in multiple lists simultaneously and avoids the extra heap allocation of storing a node pointer.

When should I use a hash table instead of a linked list for lookups? Always. For lookup by key, a hash table gives O(1) average vs O(n) for a linked list. Linked lists are primarily valuable for ordered collections that need frequent insertion and deletion. The classic combined structure is a hash table (for O(1) lookup) + doubly linked list (for O(1) ordered operations) - the foundation of LRU caches.

What is the "pointer to pointer" trick in list deletion?

Node **current = &list->head is a pointer to the head pointer itself. By working through *current (the pointer it points to), you can uniformly handle deletion of the head node and middle nodes without a special case. When *current = node->next, you're updating whichever pointer (head, or a previous node's next) currently points to the deleted node.

Key Takeaway

Linked lists are the gateway to complex, self-referential data structures. Their power lies not in raw traversal speed (arrays win there) but in their ability to insert and delete O(1) at any known position, dynamically grow without reallocation, and participate in sophisticated patterns like LRU caches and kernel scheduler queues.

In systems programming, you will use linked lists everywhere - from OS process scheduling to interpreter object reference tracking to network buffer queues. Mastering the implementation at the pointer level gives you a tool belt that serves across every large-scale C system.

Read next: Hash Tables & Collision Resolution: O(1) Lookup in C ->

Part of the C Mastery Course - 30 modules covering everything from C basics to kernel-level systems engineering.