POSIX Threads & Synchronization in C: Mutexes, Condition Variables & Atomic Operations

Table of Contents

- Why Threads? The Concurrency Model

- Creating and Joining Threads

- Race Conditions: The Core Problem

- Mutexes: Protecting Critical Sections

- Deadlock: The Concurrency Trap

- Condition Variables: Thread Signaling

- Read-Write Locks: Concurrent Reads

- C11 Atomic Operations: Lock-Free Programming

- Building a Thread Pool

- Frequently Asked Questions

- Key Takeaway

Why Threads? The Concurrency Model

Threads share:

- Heap: All malloc'd memory is visible to all threads.

- Global variables: Any static or global-scope variable.

- File descriptors: Open files, sockets.

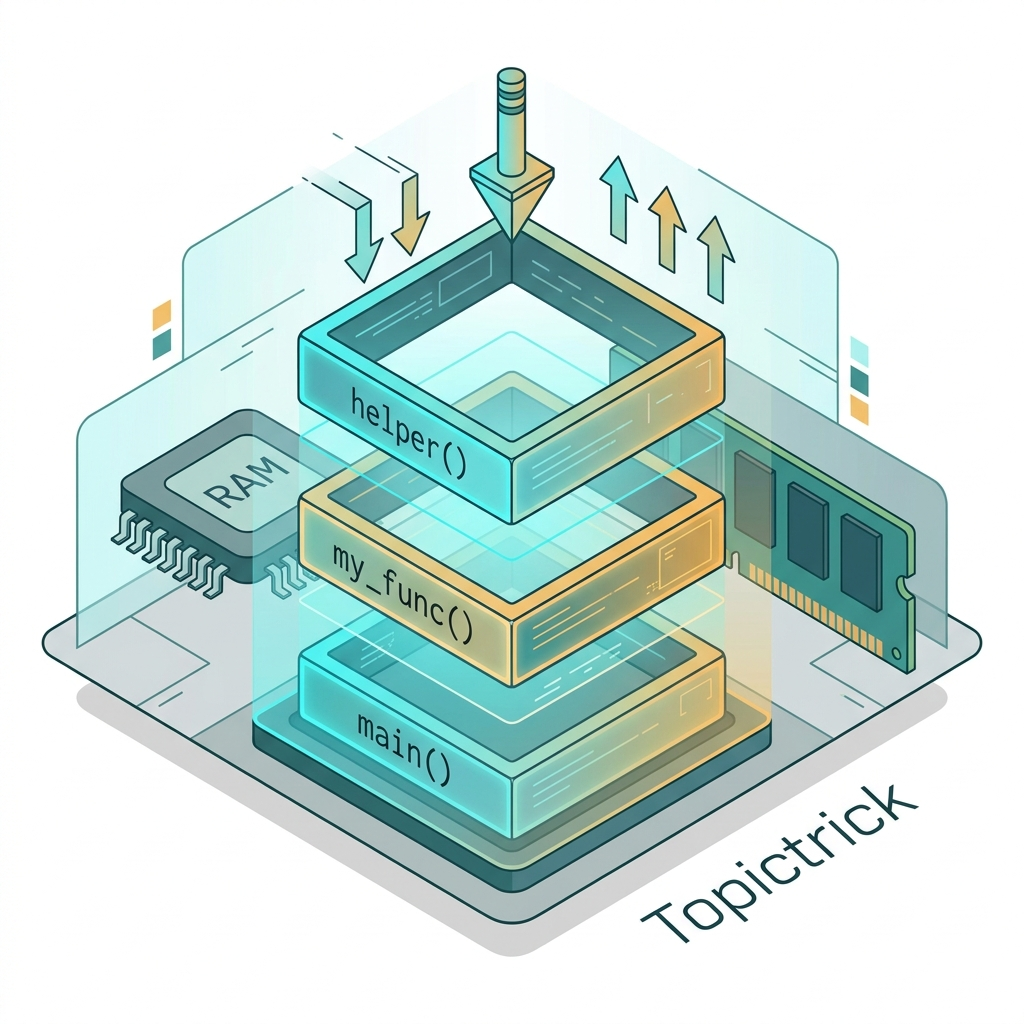

Threads have their own:

- Stack: Local variables in each function, function call chain.

- Registers: Current instruction pointer, register file.

- Signal mask.

The shared heap is both the power and the danger. Multiple threads can cooperate on the same data - but without explicit coordination, they corrupt each other's work.
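To make the shared/private split concrete, here is a minimal sketch. The names bump_heap_value and shared_heap_demo are hypothetical and not part of the original text. One thread writes through a pointer into the shared heap, while its local variable stays on its own stack.

```c
#include <pthread.h>
#include <stdlib.h>

/* Hypothetical helper: runs in the new thread. */
static void *bump_heap_value(void *arg) {
    int local = 41;           /* lives on this thread's private stack */
    int *heap_value = arg;    /* points into the heap shared by all threads */
    *heap_value = local + 1;  /* the creator can read this after joining */
    return NULL;
}

/* Returns the value written by the thread, or -1 on failure. */
int shared_heap_demo(void) {
    int *heap_value = malloc(sizeof *heap_value);
    if (heap_value == NULL) return -1;
    *heap_value = 0;

    pthread_t t;
    if (pthread_create(&t, NULL, bump_heap_value, heap_value) != 0) {
        free(heap_value);
        return -1;
    }
    pthread_join(t, NULL);    /* joining makes the thread's write visible here */

    int result = *heap_value;
    free(heap_value);
    return result;
}
```

Calling shared_heap_demo() from main returns the value the worker stored. No lock is needed here only because the creator reads the value after pthread_join, which orders the two accesses.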

Creating and Joining Threads

#include <pthread.h>

#include <stdio.h>

#include <stdlib.h>

#include <stdint.h>

typedef struct {

int thread_id;

int start;

int end;

long result;

} WorkerArgs;

// Thread function: sum integers from start to end

void *sum_range(void *arg) {

WorkerArgs *args = (WorkerArgs*)arg;

args->result = 0;

for (int i = args->start; i <= args->end; i++) {

args->result += i;

}

printf("Thread %d: sum [%d..%d] = %ld\n",

args->thread_id, args->start, args->end, args->result);

return NULL; // Or: return (void *)(intptr_t)args->result; for a value that fits in a pointer

}

int main(void) {

enum { NUM_THREADS = 4 }; // A true constant: with const int, args[] would be a VLA, which cannot take an initializer

pthread_t threads[NUM_THREADS];

WorkerArgs args[NUM_THREADS] = {

{0, 1, 250, 0},

{1, 251, 500, 0},

{2, 501, 750, 0},

{3, 751, 1000, 0},

};

// Create all threads

for (int i = 0; i < NUM_THREADS; i++) {

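int rc = pthread_create(&threads[i], NULL, sum_range, &args[i]);

if (rc != 0) { // pthread functions return the error code; they do not set errno

fprintf(stderr, "pthread_create failed: error %d\n", rc);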

return 1;

}

}

// Wait for all threads to finish

long total = 0;

for (int i = 0; i < NUM_THREADS; i++) {

pthread_join(threads[i], NULL);

total += args[i].result;

}

printf("Total sum 1..1000 = %ld (expected 500500)\n", total);

return 0;

}

Compile with: gcc -pthread main.c -o main
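The thread function's return value can also carry the result back through the second argument of pthread_join. The following is a minimal sketch under the assumption that the result fits in an intptr_t; sum_to_n and sum_in_thread are hypothetical names.

```c
#include <pthread.h>
#include <stdint.h>

/* Hypothetical thread function: sums 1..n and returns the sum as a pointer-sized value. */
static void *sum_to_n(void *arg) {
    long n = (long)(intptr_t)arg;
    long sum = 0;
    for (long i = 1; i <= n; i++) sum += i;
    return (void *)(intptr_t)sum;   /* only safe when the value fits in intptr_t */
}

/* Runs sum_to_n in a separate thread; returns -1 if the thread cannot be created or joined. */
long sum_in_thread(long n) {
    pthread_t t;
    void *retval = NULL;
    if (pthread_create(&t, NULL, sum_to_n, (void *)(intptr_t)n) != 0) return -1;
    if (pthread_join(t, &retval) != 0) return -1;  /* retval receives the thread's return value */
    return (long)(intptr_t)retval;
}
```

For larger results, returning through a struct passed in as the argument (as WorkerArgs does above) avoids the pointer-size limit.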

Race Conditions: The Core Problem

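A race condition occurs when a program's result depends on the relative timing of its threads. The most common form is a data race: two threads access the same shared data concurrently, at least one access is a write, and nothing synchronizes them. In C11 a data race is undefined behavior; in practice it shows up as non-deterministic output: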

#include <pthread.h>

#include <stdio.h>

int counter = 0; // Shared variable - DANGEROUS without protection!

void *unsafe_increment(void *arg) {

for (int i = 0; i < 1000000; i++) {

counter++; // NOT atomic! Compiles to: LOAD, ADD, STORE - 3 operations

// Thread switch can happen between any two of these 3 steps

}

return NULL;

}

int main(void) {

pthread_t t1, t2;

pthread_create(&t1, NULL, unsafe_increment, NULL);

pthread_create(&t2, NULL, unsafe_increment, NULL);

pthread_join(t1, NULL);

pthread_join(t2, NULL);

printf("Counter: %d (expected 2000000)\n", counter);

// OUTPUT IS UNPREDICTABLE: might be 1,732,891 or 1,999,114...

return 0;

}

The bug: counter++ is a read-modify-write sequence (load, increment, store), not one indivisible step. Another thread can load the old value before your store lands, so both threads write back the same result and one increment is lost.

Mutexes: Protecting Critical Sections

A mutex (mutual exclusion lock) ensures that only one thread executes the critical section at a time:

#include <pthread.h>

#include <stdio.h>

int counter = 0;

pthread_mutex_t lock = PTHREAD_MUTEX_INITIALIZER; // Static initialization

void *safe_increment(void *arg) {

for (int i = 0; i < 1000000; i++) {

pthread_mutex_lock(&lock); // Acquire lock - blocks if another thread holds it

counter++; // Critical section: only one thread here at a time

pthread_mutex_unlock(&lock); // Release lock - allows waiting threads to proceed

}

return NULL;

}

int main(void) {

pthread_t t1, t2;

pthread_create(&t1, NULL, safe_increment, NULL);

pthread_create(&t2, NULL, safe_increment, NULL);

pthread_join(t1, NULL);

pthread_join(t2, NULL);

pthread_mutex_destroy(&lock);

printf("Counter: %d (always 2000000)\n", counter);

return 0;

}

Best practices:

- Hold the lock for the shortest possible time (minimize the critical section).

- Avoid blocking calls such as I/O or sleep while holding a lock; every thread waiting on that lock stalls with you.

- Use PTHREAD_MUTEX_INITIALIZER for static mutexes and pthread_mutex_init for dynamic (heap-allocated) mutexes.

- Always check the return value of pthread_mutex_lock in production code.
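The last two practices can be sketched together: a heap-allocated object whose mutex is set up with pthread_mutex_init, and a lock call whose return value is checked. The Counter type and the counter_* functions are hypothetical names used for illustration only.

```c
#include <pthread.h>
#include <stdlib.h>

/* Hypothetical heap-allocated counter that owns its own mutex. */
typedef struct {
    pthread_mutex_t lock;
    long value;
} Counter;

Counter *counter_create(void) {
    Counter *c = malloc(sizeof *c);
    if (c == NULL) return NULL;
    if (pthread_mutex_init(&c->lock, NULL) != 0) {  /* dynamic initialization */
        free(c);
        return NULL;
    }
    c->value = 0;
    return c;
}

/* Returns 0 on success, or the pthread error code if locking or unlocking failed. */
int counter_add(Counter *c, long delta) {
    int rc = pthread_mutex_lock(&c->lock);
    if (rc != 0) return rc;           /* do not touch value without the lock */
    c->value += delta;                /* short critical section */
    return pthread_mutex_unlock(&c->lock);
}

void counter_destroy(Counter *c) {
    pthread_mutex_destroy(&c->lock);  /* only once no thread can still use it */
    free(c);
}
```

A caller would pair counter_create with counter_destroy and treat any non-zero result from counter_add as a failed update.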

Deadlock: The Concurrency Trap

Deadlock occurs when two threads each hold a lock the other needs:

// DEADLOCK SCENARIO:

// Thread 1: lock(mutex_A), then tries to lock(mutex_B) - blocked

// Thread 2: lock(mutex_B), then tries to lock(mutex_A) - blocked

// Neither can proceed - program hangs forever

// PREVENTION: Always acquire locks in the same ORDER

pthread_mutex_t mutex_A = PTHREAD_MUTEX_INITIALIZER;

pthread_mutex_t mutex_B = PTHREAD_MUTEX_INITIALIZER;

void thread1_safe(void) {

pthread_mutex_lock(&mutex_A); // Always A first

pthread_mutex_lock(&mutex_B); // Then B

// ... critical work ...

pthread_mutex_unlock(&mutex_B);

pthread_mutex_unlock(&mutex_A);

}

void thread2_safe(void) {

pthread_mutex_lock(&mutex_A); // Same order: A first

pthread_mutex_lock(&mutex_B); // Then B

// ... critical work ...

pthread_mutex_unlock(&mutex_B);

pthread_mutex_unlock(&mutex_A);

}

Deadlock prevention rules:

- Lock ordering: Always acquire multiple locks in the same global order.

- Lock timeout: Use pthread_mutex_timedlock with a timeout so a stuck acquisition fails instead of hanging forever.

- Try-lock: Use pthread_mutex_trylock to back off (release what you hold and retry) when the second lock is busy.

- Minimize lock nesting: Avoid holding one lock while acquiring another.
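The try-lock rule can be sketched as a back-off loop. In this illustration (lock_both, worker, and backoff_demo are hypothetical names) two threads deliberately request the locks in opposite orders, which would deadlock with plain pthread_mutex_lock, yet neither ever blocks while holding a lock.

```c
#include <pthread.h>
#include <sched.h>

static pthread_mutex_t lock_a = PTHREAD_MUTEX_INITIALIZER;
static pthread_mutex_t lock_b = PTHREAD_MUTEX_INITIALIZER;
static int shared_total = 0;   /* only modified while holding BOTH locks */

/* Acquire two locks without ever blocking on the second while holding the first. */
static void lock_both(pthread_mutex_t *first, pthread_mutex_t *second) {
    for (;;) {
        pthread_mutex_lock(first);
        if (pthread_mutex_trylock(second) == 0)
            return;                    /* got both */
        pthread_mutex_unlock(first);   /* back off so the other thread can finish */
        sched_yield();
    }
}

/* A non-NULL arg means "take A first"; NULL means "take B first". */
static void *worker(void *arg) {
    for (int i = 0; i < 10000; i++) {
        if (arg != NULL) lock_both(&lock_a, &lock_b);
        else             lock_both(&lock_b, &lock_a);
        shared_total++;
        pthread_mutex_unlock(&lock_a);
        pthread_mutex_unlock(&lock_b);
    }
    return NULL;
}

/* Returns the final total, or -1 if a thread could not be created. */
int backoff_demo(void) {
    pthread_t t1, t2;
    int a_first = 1;
    shared_total = 0;
    if (pthread_create(&t1, NULL, worker, &a_first) != 0) return -1;
    if (pthread_create(&t2, NULL, worker, NULL) != 0) {
        pthread_join(t1, NULL);
        return -1;
    }
    pthread_join(t1, NULL);
    pthread_join(t2, NULL);
    return shared_total;
}
```

Back-off trades deadlock for possible retries under contention, so a fixed global lock order remains the simpler choice whenever the order is known in advance.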

Condition Variables: Thread Signaling

Mutexes are for mutual exclusion. Condition variables allow threads to wait until a condition is true without busy-polling:

#include <pthread.h>

#include <stdio.h>

#include <stdlib.h>

#include <stdbool.h>

// Producer-Consumer queue

#define QUEUE_SIZE 16

typedef struct {

int items[QUEUE_SIZE];

int head, tail, count;

pthread_mutex_t lock;

pthread_cond_t not_empty; // Signal when item is available

pthread_cond_t not_full; // Signal when space is available

} PCQueue;

void pcq_init(PCQueue *q) {

q->head = q->tail = q->count = 0;

pthread_mutex_init(&q->lock, NULL);

pthread_cond_init(&q->not_empty, NULL);

pthread_cond_init(&q->not_full, NULL);

}

void pcq_push(PCQueue *q, int item) {

pthread_mutex_lock(&q->lock);

while (q->count == QUEUE_SIZE) { // Wait while FULL

pthread_cond_wait(&q->not_full, &q->lock); // Releases lock, sleeps

}

q->items[q->tail] = item;

q->tail = (q->tail + 1) % QUEUE_SIZE;

q->count++;

pthread_cond_signal(&q->not_empty); // Wake up waiting consumer

pthread_mutex_unlock(&q->lock);

}

int pcq_pop(PCQueue *q) {

pthread_mutex_lock(&q->lock);

while (q->count == 0) { // Wait while EMPTY

pthread_cond_wait(&q->not_empty, &q->lock); // Releases lock, sleeps

}

int item = q->items[q->head];

q->head = (q->head + 1) % QUEUE_SIZE;

q->count--;

pthread_cond_signal(&q->not_full); // Wake up waiting producer

pthread_mutex_unlock(&q->lock);

return item;

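}

pthread_cond_wait atomically releases the mutex and puts the thread to sleep, so no CPU is consumed while waiting. When pthread_cond_signal wakes it, the thread re-acquires the mutex before returning. The wait sits in a while loop rather than an if because POSIX permits spurious wakeups, and another thread may change the queue between the signal and the re-acquisition; the condition must be re-checked every time.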
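The same wait pattern works for any predicate, not only queue counts. Below is a minimal one-shot event sketch; the Event type and both function names are hypothetical. The waiter loops on a boolean flag that the producer sets while holding the mutex.

```c
#include <pthread.h>
#include <stdbool.h>

/* Hypothetical one-shot event carrying an int payload. */
typedef struct {
    pthread_mutex_t lock;
    pthread_cond_t  cond;
    bool ready;      /* the predicate the waiter checks */
    int  payload;
} Event;

static void *event_producer(void *arg) {
    Event *e = arg;
    pthread_mutex_lock(&e->lock);
    e->payload = 42;
    e->ready = true;                  /* change the predicate under the lock */
    pthread_cond_signal(&e->cond);
    pthread_mutex_unlock(&e->lock);
    return NULL;
}

/* Starts a producer thread, waits for its payload, and returns it (-1 on failure). */
int wait_for_payload(void) {
    Event e;
    e.ready = false;
    e.payload = 0;
    if (pthread_mutex_init(&e.lock, NULL) != 0) return -1;
    if (pthread_cond_init(&e.cond, NULL) != 0) {
        pthread_mutex_destroy(&e.lock);
        return -1;
    }

    int value = -1;
    pthread_t t;
    if (pthread_create(&t, NULL, event_producer, &e) == 0) {
        pthread_mutex_lock(&e.lock);
        while (!e.ready)              /* loop: a wakeup alone proves nothing */
            pthread_cond_wait(&e.cond, &e.lock);
        value = e.payload;
        pthread_mutex_unlock(&e.lock);
        pthread_join(t, NULL);
    }

    pthread_cond_destroy(&e.cond);
    pthread_mutex_destroy(&e.lock);
    return value;
}
```

Because the flag is checked before the first wait, the code is correct even if the producer signals before the waiter ever reaches pthread_cond_wait.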

Read-Write Locks: Concurrent Reads

Many data structures are read frequently but written rarely. Using a mutex blocks all concurrent reads unnecessarily. A read-write lock allows multiple concurrent readers but exclusive write access:

#include <pthread.h>

#include <stdint.h>

typedef struct {

int32_t data[1024];

pthread_rwlock_t rwlock;

} SharedData;

void *reader(void *arg) {

SharedData *sd = (SharedData*)arg;

pthread_rwlock_rdlock(&sd->rwlock); // Multiple readers can hold this simultaneously

int sum = 0;

for (int i = 0; i < 1024; i++) sum += sd->data[i];

pthread_rwlock_unlock(&sd->rwlock);

return NULL;

}

void *writer(void *arg) {

SharedData *sd = (SharedData*)arg;

pthread_rwlock_wrlock(&sd->rwlock); // Exclusive - no readers allowed

for (int i = 0; i < 1024; i++) sd->data[i] = i;

pthread_rwlock_unlock(&sd->rwlock);

return NULL;

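}

Use rwlocks when readers far outnumber writers (in-memory caches, configuration databases, routing tables). The lock must be initialized with pthread_rwlock_init (or PTHREAD_RWLOCK_INITIALIZER) before the first reader or writer touches it.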

C11 Atomic Operations: Lock-Free Programming

C11 introduced <stdatomic.h> for hardware-level atomic operations, which are usually cheaper than a mutex for simple shared counters and flags:

#include <stdatomic.h>

#include <pthread.h>

#include <stdio.h>

#include <stdbool.h> // bool, true, false

atomic_int atomic_counter = 0; // Atomic type - guaranteed to be safe

atomic_bool should_stop = false;

void *atomic_worker(void *arg) {

for (int i = 0; i < 1000000; i++) {

atomic_fetch_add(&atomic_counter, 1); // Hardware atomic ADD - no mutex needed

}

return NULL;

}

// Lock-free flag: thread-safe without a mutex

void stop_workers(void) {

atomic_store(&should_stop, true); // Sequentially consistent store: subsequent atomic_load calls in other threads observe it

}

bool check_stopped(void) {

return atomic_load(&should_stop);

}

int main(void) {

pthread_t t1, t2;

pthread_create(&t1, NULL, atomic_worker, NULL);

pthread_create(&t2, NULL, atomic_worker, NULL);

pthread_join(t1, NULL);

pthread_join(t2, NULL);

printf("Counter: %d (always 2000000)\n", atomic_load(&atomic_counter));

return 0;

}

When to use atomics vs mutexes:

- Atomics: Simple flags, counters, single-value updates.

- Mutexes: Complex multi-step operations on multiple variables, condition waiting.

- Never use regular variables for inter-thread communication without synchronization.
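A "single-value update" that goes beyond add or store can usually be written as a compare-and-swap loop. Here is a minimal sketch of an atomic running maximum; high_water, record_max, and current_max are hypothetical names.

```c
#include <stdatomic.h>

static atomic_int high_water = 0;   /* largest sample seen so far */

/* Raise high_water to sample if sample is larger; safe to call from many threads. */
void record_max(int sample) {
    int current = atomic_load(&high_water);
    while (sample > current &&
           !atomic_compare_exchange_weak(&high_water, &current, sample)) {
        /* On failure, current has been refreshed with the latest value;
           the loop condition re-checks whether sample is still larger. */
    }
}

int current_max(void) {
    return atomic_load(&high_water);
}
```

The weak variant may fail spuriously, which is harmless inside a retry loop. Once an update involves two or more variables that must change together, a mutex is the safer tool.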

Building a Thread Pool

A thread pool pre-creates a fixed number of threads that persist and pick up work from a shared queue - avoiding the overhead of creating/destroying threads for each task:

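// Thread pool conceptual structure (a sketch: assumes PCQueue is adapted to store Task items instead of int):

typedef struct {

void (*function)(void *); // Work to run

void *arg; // Argument passed to function

} Task;

typedef struct {

pthread_t *threads; // Worker thread handles

int count; // Number of worker threads

PCQueue work_queue; // Shared task queue

atomic_bool shutdown; // Termination flag (atomic: written by the owner, read by workers)

} ThreadPool;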

// Each worker:

void *pool_worker(void *arg) {

ThreadPool *pool = (ThreadPool*)arg;

while (true) {

Task task = pcq_pop(&pool->work_queue); // Blocks until work arrives

if (pool->shutdown) break;

task.function(task.arg); // Execute the task

}

return NULL;

}

// Submit work:

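// pcq_push(&pool->work_queue, (Task){.function = do_something, .arg = data});

In this sketch a worker only sees the shutdown flag after pcq_pop returns, so stopping the pool means waking every blocked worker, for example by pushing one sentinel task per worker.

Thread pools of this kind appear in Java's ExecutorService, in Nginx's optional thread pools for offloading blocking file I/O (its main worker model is process-based), and, at the OS level, in the Linux kernel's kworker threads.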
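For comparison, here is one way the conceptual structure could be turned into compilable code. It is a sketch under stated assumptions, not a production pool: the sizes are fixed at compile time, the queue is embedded in the pool instead of reusing PCQueue, the shutdown flag is protected by the queue mutex, and every name apart from Task and ThreadPool (pool_init, pool_submit, pool_shutdown, pool_demo, count_task) is hypothetical.

```c
#include <pthread.h>
#include <stdatomic.h>
#include <stdbool.h>

#define POOL_THREADS 4    /* illustrative sizes */
#define POOL_QUEUE   64

typedef struct { void (*function)(void *); void *arg; } Task;

typedef struct {
    pthread_t threads[POOL_THREADS];
    int started;                  /* threads actually created */
    Task queue[POOL_QUEUE];       /* ring buffer of pending tasks */
    int head, tail, count;
    bool shutdown;                /* protected by lock, like the queue */
    pthread_mutex_t lock;
    pthread_cond_t not_empty;
} ThreadPool;

static void *pool_worker(void *arg) {
    ThreadPool *pool = arg;
    for (;;) {
        pthread_mutex_lock(&pool->lock);
        while (pool->count == 0 && !pool->shutdown)
            pthread_cond_wait(&pool->not_empty, &pool->lock);
        if (pool->count == 0) {               /* shutdown and queue drained */
            pthread_mutex_unlock(&pool->lock);
            return NULL;
        }
        Task task = pool->queue[pool->head];
        pool->head = (pool->head + 1) % POOL_QUEUE;
        pool->count--;
        pthread_mutex_unlock(&pool->lock);
        task.function(task.arg);              /* run the task outside the lock */
    }
}

void pool_shutdown(ThreadPool *pool) {
    pthread_mutex_lock(&pool->lock);
    pool->shutdown = true;
    pthread_cond_broadcast(&pool->not_empty); /* wake every idle worker */
    pthread_mutex_unlock(&pool->lock);
    for (int i = 0; i < pool->started; i++)
        pthread_join(pool->threads[i], NULL);
    pthread_cond_destroy(&pool->not_empty);
    pthread_mutex_destroy(&pool->lock);
}

int pool_init(ThreadPool *pool) {
    pool->head = pool->tail = pool->count = pool->started = 0;
    pool->shutdown = false;
    if (pthread_mutex_init(&pool->lock, NULL) != 0) return -1;
    if (pthread_cond_init(&pool->not_empty, NULL) != 0) {
        pthread_mutex_destroy(&pool->lock);
        return -1;
    }
    for (int i = 0; i < POOL_THREADS; i++) {
        if (pthread_create(&pool->threads[i], NULL, pool_worker, pool) != 0) {
            pool_shutdown(pool);              /* stop the workers already running */
            return -1;
        }
        pool->started++;
    }
    return 0;
}

/* Returns false when the queue is full or the pool is shutting down. */
bool pool_submit(ThreadPool *pool, void (*function)(void *), void *arg) {
    bool accepted = false;
    pthread_mutex_lock(&pool->lock);
    if (!pool->shutdown && pool->count < POOL_QUEUE) {
        pool->queue[pool->tail] = (Task){ function, arg };
        pool->tail = (pool->tail + 1) % POOL_QUEUE;
        pool->count++;
        pthread_cond_signal(&pool->not_empty);
        accepted = true;
    }
    pthread_mutex_unlock(&pool->lock);
    return accepted;
}

static atomic_int tasks_done = 0;
static void count_task(void *arg) { (void)arg; atomic_fetch_add(&tasks_done, 1); }

/* Submits up to n counting tasks and returns how many ran (-1 if the pool failed to start). */
int pool_demo(int n) {
    ThreadPool pool;
    atomic_store(&tasks_done, 0);
    if (pool_init(&pool) != 0) return -1;
    for (int i = 0; i < n; i++)
        pool_submit(&pool, count_task, NULL);
    pool_shutdown(&pool);                     /* drains the queue, then joins */
    return atomic_load(&tasks_done);
}
```

Workers exit only once shutdown is set and the queue is empty, so tasks accepted before pool_shutdown still run. Rejecting work when the queue is full is one design choice; blocking the submitter on a not_full condition, as pcq_push does, is the other.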

Frequently Asked Questions

What is the difference between a mutex and a semaphore?

A mutex is owned by one thread - only the locking thread can unlock it. A semaphore (from <semaphore.h>) is a counter - any thread can increment or decrement it, making it suitable for signaling between threads and limiting concurrent access to a resource (e.g., a connection pool of size 10).
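As an illustration of the "limit concurrent access" use, here is a sketch in which eight threads compete for three slots. The names slots, client, and semaphore_demo are hypothetical, and the code assumes a platform with unnamed POSIX semaphores (Linux supports them; macOS does not implement sem_init).

```c
#include <errno.h>
#include <pthread.h>
#include <semaphore.h>
#include <stdatomic.h>

#define MAX_CONCURRENT 3   /* illustrative pool size */
#define NUM_CLIENTS    8

static sem_t slots;               /* counts free slots */
static atomic_int in_use = 0;     /* slots currently taken */
static atomic_int peak = 0;       /* highest in_use value observed */

static void *client(void *arg) {
    (void)arg;
    while (sem_wait(&slots) == -1 && errno == EINTR)
        continue;                 /* retry if interrupted by a signal */

    int now = atomic_fetch_add(&in_use, 1) + 1;
    int seen = atomic_load(&peak);
    while (now > seen && !atomic_compare_exchange_weak(&peak, &seen, now)) {
        /* seen was refreshed; retry while now is still larger */
    }

    /* ... use the limited resource here ... */

    atomic_fetch_sub(&in_use, 1);
    sem_post(&slots);             /* give the slot back */
    return NULL;
}

/* Returns the peak number of simultaneous holders, or -1 if sem_init failed. */
int semaphore_demo(void) {
    pthread_t t[NUM_CLIENTS];
    int started = 0;
    atomic_store(&in_use, 0);
    atomic_store(&peak, 0);
    if (sem_init(&slots, 0, MAX_CONCURRENT) != 0) return -1;
    while (started < NUM_CLIENTS &&
           pthread_create(&t[started], NULL, client, NULL) == 0)
        started++;
    for (int i = 0; i < started; i++)
        pthread_join(t[i], NULL);
    sem_destroy(&slots);
    return atomic_load(&peak);
}
```

The returned peak never exceeds MAX_CONCURRENT, no matter how the threads are scheduled, because a thread only increments in_use after sem_wait has granted it a slot.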

How do I detect race conditions?

Use ThreadSanitizer (TSan): gcc -fsanitize=thread -g main.c. TSan instruments memory accesses and reports data races on the code paths that actually execute during a run, so races in code your tests never reach go unnoticed. It typically slows the program by roughly 5-15x, but it is one of the most effective ways to find race conditions.

Is volatile sufficient for thread safety?

No. volatile prevents the compiler from caching a variable in a register and forces re-reading from memory on each access - but it does NOT create atomic read-modify-write operations and provides NO synchronization. Use _Atomic types from <stdatomic.h> for safe inter-thread variable access.

When should I use pthread_cond_broadcast vs pthread_cond_signal?

pthread_cond_signal wakes at least one waiting thread (usually exactly one). pthread_cond_broadcast wakes all waiting threads. Use broadcast when the condition is true for all waiters (e.g., a "shutdown" event) or when you can't determine which specific waiter should proceed.
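A start gate is a small example of the "true for all waiters" case. In this sketch (gate_open, waiter, and open_gate_demo are hypothetical names) several threads wait on one flag and a single broadcast releases them all.

```c
#include <pthread.h>
#include <stdbool.h>

#define WAITERS 4   /* illustrative thread count */

static pthread_mutex_t gate_lock = PTHREAD_MUTEX_INITIALIZER;
static pthread_cond_t  gate_cond = PTHREAD_COND_INITIALIZER;
static bool gate_open = false;   /* the condition every waiter checks */
static int  passed = 0;          /* threads that got through the gate */

static void *waiter(void *arg) {
    (void)arg;
    pthread_mutex_lock(&gate_lock);
    while (!gate_open)
        pthread_cond_wait(&gate_cond, &gate_lock);
    passed++;                    /* still holding the lock here */
    pthread_mutex_unlock(&gate_lock);
    return NULL;
}

/* Returns how many threads passed the gate. */
int open_gate_demo(void) {
    pthread_t t[WAITERS];
    int started = 0;

    pthread_mutex_lock(&gate_lock);
    gate_open = false;
    passed = 0;
    pthread_mutex_unlock(&gate_lock);

    while (started < WAITERS &&
           pthread_create(&t[started], NULL, waiter, NULL) == 0)
        started++;

    pthread_mutex_lock(&gate_lock);
    gate_open = true;
    pthread_cond_broadcast(&gate_cond);  /* the condition now holds for everyone */
    pthread_mutex_unlock(&gate_lock);

    for (int i = 0; i < started; i++)
        pthread_join(t[i], NULL);
    return passed;
}
```

With pthread_cond_signal in place of the broadcast, only one of the already-waiting threads would be guaranteed to wake, and the rest could sleep forever.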

Key Takeaway

POSIX threads let a C program use every core of a multi-core machine. With the right synchronization - mutexes for exclusion, condition variables for signaling, atomics for simple shared state - you can safely parallelize workloads and, when threads share little state, approach linear scaling with the number of CPU cores.

The fundamental discipline: never access shared data without synchronization. The type of synchronization (mutex, rwlock, atomic) depends on the access pattern and performance requirements. ThreadSanitizer makes race conditions visible - run it on every concurrent codebase.

Part of the C Mastery Course - 30 modules from C basics to expert-level systems engineering.