The CAP Theorem Explained: Consistency, Availability, and Partition Tolerance

The CAP Theorem Explained: Consistency, Availability, and Partition Tolerance

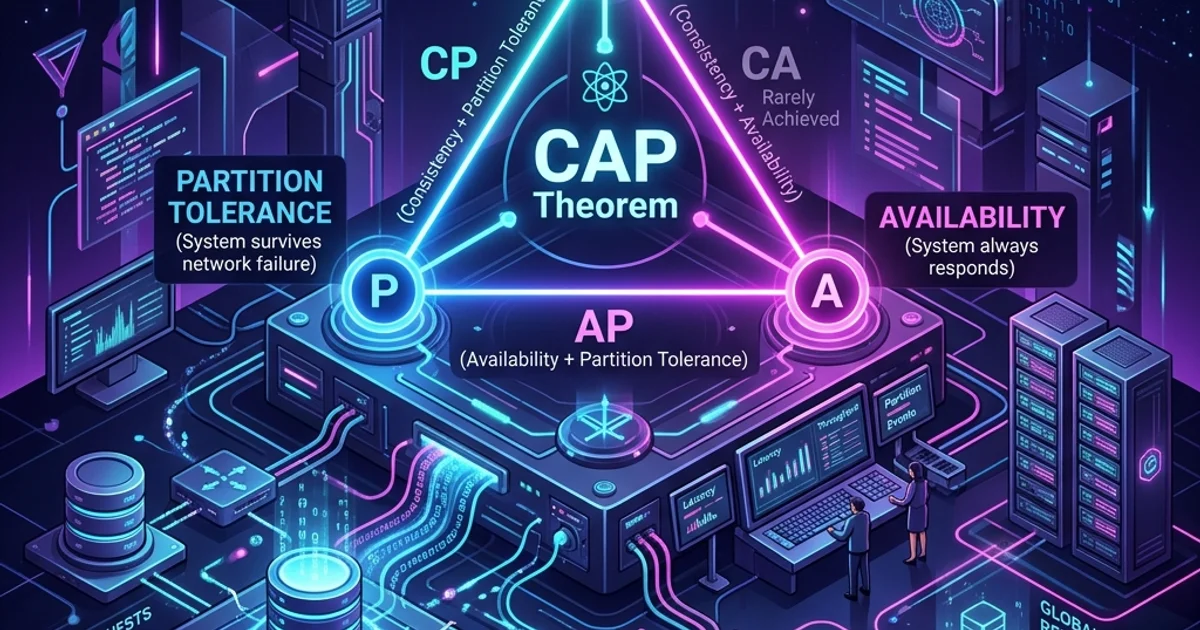

The CAP theorem is one of the most important constraints in distributed systems design. Stated simply: a distributed system can only guarantee two of three properties - Consistency, Availability, and Partition Tolerance - and since Partition Tolerance is unavoidable in any real network, you are always choosing between Consistency and Availability.

Understanding this trade-off determines which database you choose, how you architect your services, and what failure modes your users will experience. This guide explains all three properties from first principles, shows real database examples, and introduces the PACELC extension for latency-aware design.

Why Distributed Systems Are Hard

A single-server database is simple: one process reads and writes to one disk. Consistency is guaranteed by the hardware - two reads of the same row always return the same data.

Once you add a second server (for high availability, geographic distribution, or scale), complexity explodes. The two servers must communicate over a network. Networks are unreliable. At any moment, the link between Server 1 and Server 2 can be severed by a hardware failure, a misconfigured firewall, or a backhoe cutting a fiber cable.

What should your system do when that link is severed? The CAP theorem formalizes the choice you must make.

The Three Properties Defined

C - Consistency (Strong Consistency)

Every read returns the most recent write, or an error. If a user updates their profile, every subsequent read of that profile - from any node in the system - returns the updated value.

More formally: the system behaves as if there is only one copy of the data. There is no "stale read" - you will never read data that was valid two seconds ago but has since been superseded.

This is sometimes called "linearizability" or "atomic consistency" in academic literature.

Everyday analogy: You withdraw £500 from an ATM. When you walk to a second ATM, your balance reflects the withdrawal. If the second ATM showed the pre-withdrawal balance, that would be an inconsistency.

A - Availability

Every request receives a response (not an error). The system may not return the most recent data, but it will always return something.

Formally: every non-failing node must return a response to every request within a reasonable time.

Important nuance: Availability in CAP does not mean "fast" or "highly available" in the SLA sense. It means the system does not return errors or refuse to answer. Returning stale data is allowed; returning a 503 error is not.

P - Partition Tolerance

The system continues to operate when the network between nodes is severed or delayed.

A network partition is when nodes cannot communicate with each other. In any real distributed system spanning more than one machine (or especially any system spread across multiple data centers), network partitions are inevitable. They happen regularly due to:

- Physical hardware failures

- Software bugs in network equipment

- Datacenter maintenance

- BGP misconfigurations

- Temporary packet loss during high network load

The mathematical consequence: Since partitions happen in any real distributed system, P is not optional. You must design for partitions. Therefore the real choice is between C and A when a partition occurs.

The Two Viable Combinations

CP: Consistency + Partition Tolerance

"When the network is split, I would rather refuse requests than return incorrect data."

During a partition, a CP system stops answering requests (or returns errors) until the partition heals and the nodes can sync. Once the partition heals, all reads return consistent data.

The user experience during a partition:

User: GET /account/balance

System: 503 Service Unavailable (partition in progress)Real-world examples:

- PostgreSQL (with synchronous replication)

- HBase

- Zookeeper

- etcd

- Google Spanner (approximately - it uses atomic clocks to minimize partition duration)

When to choose CP:

- Financial transactions (double-spend prevention)

- Inventory management (cannot oversell)

- Distributed locks and leader election

- Legal record systems (audit trails must be accurate)

- Any domain where stale data causes real harm

AP: Availability + Partition Tolerance

"When the network is split, I would rather return possibly-stale data than return an error."

During a partition, an AP system continues serving requests from whichever node receives them. Different nodes may return different (inconsistent) data. When the partition heals, the nodes reconcile their differences through a process called eventual consistency.

The user experience during a partition:

User (connected to Node A): GET /post/123/likes -> 1,547 likes

User (connected to Node B): GET /post/123/likes -> 1,543 likes (stale by 4 likes)Real-world examples:

- Cassandra

- DynamoDB (default mode)

- CouchDB

- Riak

- DNS (the internet's most important AP system)

When to choose AP:

- Social media feeds (a few seconds of staleness is acceptable)

- Product catalogs (showing last-known price is better than showing an error)

- User preference storage (dark mode toggle can be eventually consistent)

- Analytics event collection (some late events are fine)

- Any domain where availability matters more than instantaneous accuracy

Eventual Consistency Explained

Eventual consistency is the trade-off you accept in AP systems. It guarantees that if no new updates are made, all replicas will eventually converge to the same value.

The key word is "eventually" - the system provides no timing guarantees. In practice, replication lag in well-designed AP systems is milliseconds to seconds under normal conditions. During a partition, it can be minutes or hours.

Conflict Resolution in AP Systems

When a partition heals, nodes may have conflicting writes. How to resolve them?

Last-Write-Wins (LWW): Each write is timestamped. The write with the latest timestamp wins. Simple but lossy - concurrent writes from two nodes mean one write is permanently discarded.

Vector clocks: Each write carries a version vector tracking which nodes have seen it. Conflicts are detected and passed to the application to resolve. Amazon DynamoDB uses vector clocks for its conflict detection.

CRDTs (Conflict-free Replicated Data Types): Mathematical data structures that can always be merged without conflicts. Examples:

- G-Counter: A counter that only increments. Merge = max(counter_A, counter_B). Useful for view counts and like counts.

- LWW-Element-Set: A set where additions with newer timestamps always win. Useful for shopping carts.

- Observed-Remove Set: Deletion is tracked explicitly, so "add on Node A + delete on Node B" resolves correctly.

Real Database Comparison

| Database | CAP Position | Consistency Model | Use Case |

|---|---|---|---|

| PostgreSQL | CP | Strong (serializable) | Financial, inventory, user accounts |

| MySQL + sync replication | CP | Strong | E-commerce transactions |

| MongoDB (default) | CP | Causal (adjustable) | General-purpose document store |

| Cassandra | AP | Eventual | High-write, globally distributed |

| DynamoDB (default) | AP | Eventually consistent | Serverless, high traffic |

| DynamoDB (strong reads) | CP | Strong | Inventory, sessions |

| Redis (single node) | CP | Strong | Cache, sessions |

| Redis Cluster | AP | Eventual (across slots) | Distributed cache |

| Zookeeper | CP | Linearizable | Distributed coordination |

| CouchDB | AP | Eventual + MVCC | Offline-first applications |

Tunable Consistency

Some databases allow you to choose your consistency level per operation rather than committing to one model:

Cassandra's Consistency Levels

from cassandra.cluster import Cluster

from cassandra import ConsistencyLevel

from cassandra.query import SimpleStatement

cluster = Cluster(['10.0.0.1', '10.0.0.2', '10.0.0.3'])

session = cluster.connect('my_keyspace')

# Strong consistency: write must be acknowledged by a quorum of nodes

strong_write = SimpleStatement(

"UPDATE users SET balance = %s WHERE user_id = %s",

consistency_level=ConsistencyLevel.QUORUM

)

session.execute(strong_write, [new_balance, user_id])

# Eventual consistency: write acknowledged by just one node (fastest)

eventual_write = SimpleStatement(

"UPDATE user_events SET last_seen = %s WHERE user_id = %s",

consistency_level=ConsistencyLevel.ONE

)

session.execute(eventual_write, [timestamp, user_id])QUORUM = majority of replicas must respond. With 3 replicas: 2 must acknowledge. This gives strong consistency (if any node fails, the majority still holds the latest write).

ONE = only one replica must respond. Fastest but stale reads are possible.

The formula for strong consistency: write consistency + read consistency > replication factor

With RF=3: QUORUM write (2) + QUORUM read (2) = 4 > 3 -> strongly consistent.

DynamoDB Consistency

import { DynamoDBClient, GetItemCommand } from '@aws-sdk/client-dynamodb';

const client = new DynamoDBClient({});

// Eventually consistent read (default) - cheaper, faster

const eventualRead = await client.send(new GetItemCommand({

TableName: 'Users',

Key: { userId: { S: user_id } },

ConsistentRead: false, // default

}));

// Strongly consistent read - guaranteed to reflect all prior writes

const strongRead = await client.send(new GetItemCommand({

TableName: 'Users',

Key: { userId: { S: user_id } },

ConsistentRead: true, // 2x the read capacity cost

}));The PACELC Theorem: Adding Latency to the Model

The CAP theorem only addresses behavior during a partition (P). Daniel Abadi extended it with PACELC in 2012:

If there is a Partition (P): choose Availability (A) or Consistency (C). Else (E) during normal operation: choose Latency (L) or Consistency (C).

The second part is the insight CAP misses: even without a network partition, achieving strong consistency requires coordination between nodes, and coordination takes time.

| System | During Partition | During Normal Operation |

|---|---|---|

| Cassandra | A (available) | L (low latency, eventual) |

| DynamoDB (eventual) | A | L |

| PostgreSQL sync replication | C (consistent) | C (strong, higher latency) |

| MongoDB default | C | L |

| Google Spanner | C | C (uses atomic clocks to minimize latency penalty) |

Why Latency vs. Consistency Matters

A strong consistency write to PostgreSQL with synchronous replication:

- Write committed to primary

- Primary sends write to secondary

- Secondary writes and acknowledges

- Primary responds to client

This round-trip adds 1-20ms depending on network distance. At 10,000 writes/second, this overhead is significant.

Cassandra with eventual consistency:

- Write sent to one node

- Node responds immediately

- Replication happens asynchronously in the background

Near-zero write latency, but reads may return stale data.

The PACELC choice is about your application's read/write latency budget versus its tolerance for stale data during normal operation.

Practical Decision Framework

Use this flowchart to choose your consistency model:

Does wrong data cause financial, legal, or safety harm?

YES -> CP/strong consistency (PostgreSQL, DynamoDB ConsistentRead)

NO ←"

Can you tolerate users seeing data that is seconds old?

NO -> CP/strong consistency

YES ←"

Do you need global low-latency writes?

YES -> AP/eventual consistency (Cassandra, DynamoDB default)

NO ←"

Can you afford higher write latency for accuracy guarantees?

YES -> CP (PostgreSQL with sync replication)

NO -> AP with tunable consistency (Cassandra QUORUM where needed)Common Misconceptions

"NoSQL = AP, SQL = CP"

This is a simplification. MongoDB is CP by default. DynamoDB has both eventually consistent and strongly consistent read modes. PostgreSQL with asynchronous replication becomes AP - replicas may return stale data if the primary fails. The consistency model depends on how you configure the system, not just whether it is SQL or NoSQL.

"I should always choose consistency"

Strong consistency has real costs: higher write latency, reduced availability during partitions, and harder global distribution. DNS - the foundation of the internet - is intentionally AP. Your social feed does not need banking-grade consistency. Choose based on the domain, not on a general preference.

"CAP makes distributed systems impossible to reason about"

CAP is a constraint, not a curse. It tells you exactly what trade-off you are making. Many successful systems are built on eventual consistency precisely because the domain tolerates it. Understanding CAP prevents you from accidentally building a banking system on a Cassandra cluster.

Frequently Asked Questions

Q: Is any database 100% strongly consistent?

PostgreSQL with synchronous replication provides linearizable consistency within a single region. Google Spanner provides external consistency globally using TrueTime (atomic clock + GPS). Both sacrifice some write latency for this guarantee.

Q: Can a distributed system provide all three CAP properties?

No - this is mathematically proven. During a network partition, you can guarantee either consistent responses or available responses, but not both. You can minimize partition duration and latency through engineering, but you cannot eliminate the trade-off.

Q: What does "eventual consistency" look like to users?

On social media, you might like a post and immediately see the like count jump by one on your screen, but a friend refreshing the page a second later sees the pre-like count. One second later, both see the same count. This is eventually consistent behavior - temporarily divergent, converging to the same state.

Q: How does Google Spanner achieve strong consistency globally?

Spanner uses TrueTime, a globally synchronized clock with known uncertainty bounds (typically ±4ms). Before committing a write, Spanner waits for the uncertainty window to pass (commit wait). This guarantees that any subsequent read anywhere in the world sees the write. The cost: 4-10ms of additional write latency. For global strong consistency, that is remarkably fast.

Key Takeaway

The CAP theorem is not a theoretical curiosity - it is the framework for every database choice in a distributed system. Since network partitions are unavoidable, you are always choosing between consistency and availability under failure. CP systems return errors rather than wrong data; AP systems return possibly-stale data rather than errors. Extend the analysis with PACELC to also consider the latency vs. consistency trade-off during normal operation. Match your consistency model to your domain: use CP for money, inventory, and legal records; use AP for social feeds, analytics, and user preferences.

Read next: Microservices Communication: gRPC vs. REST vs. GraphQL ->

Part of the Software Architecture Hub - engineering the truth.