HashiCorp Consul Tutorial: Service Discovery for Spring Boot Microservices

Module 36: Service Discovery with Consul

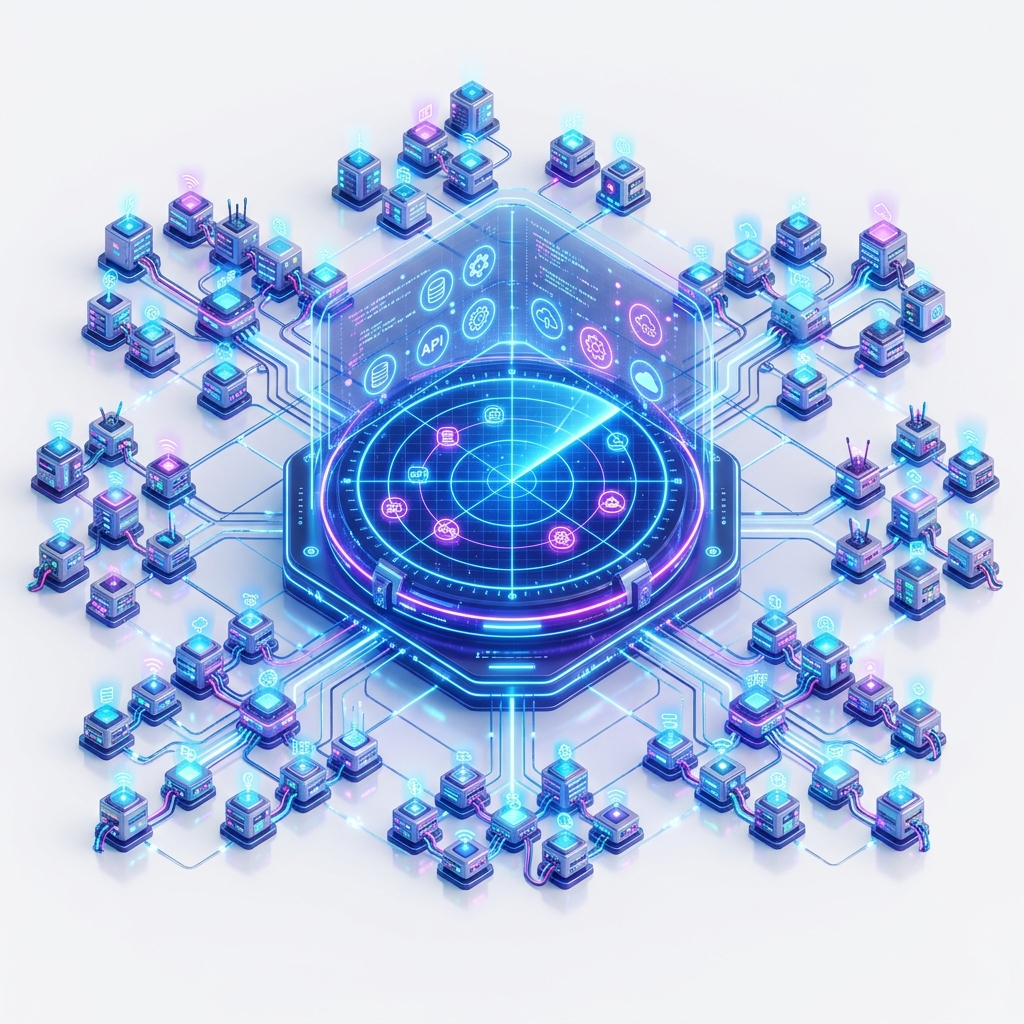

In a cloud-native environment, hardware is fluid. Instances are created, moved, and destroyed by schedulers like Kubernetes or AWS Auto Scaling Groups. You cannot rely on static IP addresses. You need a Service Registry that acts as the "Phonebook" of your infrastructure.

While Netflix Eureka (Module 31) is popular, HashiCorp Consul is a multi-datacenter-ready alternative that combines health-aware service discovery, key-value storage, and a service mesh.

1. The Consul Architecture: Agents and Servers

Consul operates using a distributed cluster model. Unlike Eureka, which passively waits for clients to send heartbeats, Consul agents actively run health checks against the services registered with them.

- Consul Agent: A lightweight process that runs on every node (physical or virtual) in your cluster. It is responsible for health checking the services running on that specific host.

- Consul Server: The "Brain" of the cluster. A small group (typically 3 or 5) of servers that maintain the state using the Raft Consensus Algorithm.

- Quorum: For a write to be successful, a majority of servers must agree. This ensures consistency even if a hardware node fails.
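The majority rule above is simple arithmetic, and a minimal sketch makes the sizing advice concrete. The class and method names here are illustrative helpers, not part of any Consul library.

```java
/** Raft majority arithmetic for a group of Consul servers (illustrative helper, not a Consul API). */
final class QuorumMath {

    /** Smallest number of servers that forms a majority of {@code servers}. */
    static int quorumSize(int servers) {
        if (servers < 1) {
            throw new IllegalArgumentException("servers must be >= 1");
        }
        return servers / 2 + 1;
    }

    /** Number of servers that can fail while a majority is still reachable. */
    static int failureTolerance(int servers) {
        return servers - quorumSize(servers);
    }

    public static void main(String[] args) {
        // Print the trade-off for the cluster sizes people usually consider.
        for (int n = 1; n <= 5; n++) {
            System.out.printf("servers=%d quorum=%d tolerated failures=%d%n",
                    n, quorumSize(n), failureTolerance(n));
        }
    }
}
```

A group of 3 tolerates 1 failure and a group of 5 tolerates 2. A group of 4 still tolerates only 1, which is why odd sizes are the usual recommendation.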

2. Hardware-Mirror: The Gossip Protocol (LAN & WAN)

Consul uses a Gossip Protocol (based on Serf) to manage cluster membership and broadcast events. This is where the hardware meets the software.

The Physics of Discovery:

- UDP Background Hum: Agents talk to each other using small UDP packets. Even when your application is idle, your NIC (Network Interface Card) is constantly processing "Member Joined" or "Member Alive" packets.

- CPU Interrupts: Incoming gossip packets are delivered through NIC interrupts (modern NICs coalesce these, but the work is never free). In a cluster of 1,000 nodes, the volume of "Gossip Noise" can consume around 1-2% of your system CPU just managing the registry.

- Convergence Time: When a node fails (e.g., a physical RAM error), the gossip protocol propagates this news. The time it takes for the whole cluster to "Know" depends on the Network Latency between your hardware racks.

Hardware-Mirror Rule: Ensure your network switch fabric supports high-volume UDP traffic. In multi-datacenter setups, place Consul Servers on high-IOPS hardware with NVMe SSDs to ensure the Raft log can be written to disk with sub-millisecond latency.
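To get a feel for convergence time, here is a rough best-case model of gossip spread. It assumes every informed node reaches `fanout` previously uninformed nodes in each round, with no packet loss and no duplicate deliveries, so real clusters will be slower. The fanout of 3 and the 200 ms interval used in `main` are assumed values for illustration; check your agent's gossip settings for the real ones.

```java
import java.time.Duration;

/** Best-case epidemic model of gossip spread (a simplification, not Consul's actual algorithm). */
final class GossipEstimate {

    /** Rounds until all nodes are informed, if each informed node reaches {@code fanout} new nodes per round. */
    static int roundsToInform(int nodes, int fanout) {
        if (nodes < 1 || fanout < 1) {
            throw new IllegalArgumentException("nodes and fanout must be >= 1");
        }
        long informed = 1; // the node that first notices the event
        int rounds = 0;
        while (informed < nodes) {
            informed += informed * fanout; // best case: every message hits an uninformed node
            rounds++;
        }
        return rounds;
    }

    /** Lower bound on convergence time: rounds multiplied by the gossip interval. */
    static Duration bestCaseConvergence(int nodes, int fanout, Duration gossipInterval) {
        return gossipInterval.multipliedBy(roundsToInform(nodes, fanout));
    }

    public static void main(String[] args) {
        // Assumed values: 1,000 nodes, fanout 3, one gossip round every 200 ms.
        System.out.println(bestCaseConvergence(1000, 3, Duration.ofMillis(200)));
    }
}
```

Because the informed set grows geometrically, the number of rounds grows only logarithmically with cluster size. Under this model, the per-round interval and the latency of each hop matter more than the raw node count.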

3. Implementation: Service Registration

In the Spring ecosystem, Consul integration is seamless. You simply add the starter, and the application will self-register on startup.

Maven Dependencies

    <dependency>
        <groupId>org.springframework.cloud</groupId>
        <artifactId>spring-cloud-starter-consul-discovery</artifactId>
    </dependency>

Configuration (application.yml)

    spring:
      application:
        name: order-service
      cloud:
        consul:
          host: localhost
          port: 8500
          discovery:
            instance-id: ${spring.application.name}:${random.value}
            health-check-path: /actuator/health
            health-check-interval: 10s

4. Health Checks: The Hardware-Software Heartbeat

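Consul's discovery is "Health-Aware." If a service instance is running but its health endpoint reports a failure (for example, because its database connection is broken), Consul marks the instance as critical and stops returning it to callers that ask for healthy instances.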
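One way to see this filtering directly is to ask Consul's HTTP API for only the passing instances of a service. The sketch below uses the JDK's HTTP client rather than Spring's discovery abstractions so that it stays self-contained. The class and method names are illustrative, and the base URL and service name are taken from the configuration above.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

/** Queries Consul's health endpoint for instances whose checks are all passing (illustrative sketch). */
final class HealthyInstancesQuery {

    /** Builds the URI for GET /v1/health/service/{name}?passing=true. */
    static URI passingInstancesUri(String consulBase, String serviceName) {
        return URI.create(consulBase + "/v1/health/service/" + serviceName + "?passing=true");
    }

    /** Returns the raw JSON body; needs a reachable Consul agent at {@code consulBase}. */
    static String fetchPassingInstances(String consulBase, String serviceName)
            throws IOException, InterruptedException {
        HttpRequest request = HttpRequest.newBuilder(passingInstancesUri(consulBase, serviceName))
                .GET()
                .build();
        HttpResponse<String> response = HttpClient.newHttpClient()
                .send(request, HttpResponse.BodyHandlers.ofString());
        return response.body();
    }

    public static void main(String[] args) {
        // Only prints the URI so the example runs without a Consul agent.
        System.out.println(passingInstancesUri("http://localhost:8500", "order-service"));
    }
}
```

Without the `passing` parameter the same endpoint also returns instances with failing checks, so the filtering is something the caller (or the client library) opts into.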

Common Check Types:

- HTTP: The Consul agent calls a URL (e.g., /actuator/health). If it returns a 2xx status, the instance is "Healthy."

- TCP: Consul attempts to open a socket on a specific port.

- TTL (Time To Live): The application must "Check-in" with Consul periodically. If it fails to do so (e.g., due to a JVM Freeze/Long GC pause), Consul marks it as failed.
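For the TTL variant, the application itself reports in through the agent's check endpoint. The sketch below shows what such a check-in could look like with the JDK's HTTP client. The check ID `service:order-service-1` is a hypothetical value (use whatever ID your check was registered with), and the class and method names are illustrative.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.util.concurrent.Executors;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;

/** Periodic TTL check-in against the local Consul agent (illustrative sketch). */
final class TtlCheckIn {

    /** Builds PUT /v1/agent/check/pass/{checkId}, which marks a TTL check as passing. */
    static HttpRequest passRequest(String agentBase, String checkId) {
        return HttpRequest.newBuilder(URI.create(agentBase + "/v1/agent/check/pass/" + checkId))
                .PUT(HttpRequest.BodyPublishers.noBody())
                .build();
    }

    /** Sends the check-in every {@code periodSeconds}; the caller shuts the scheduler down on exit. */
    static ScheduledExecutorService startHeartbeat(String agentBase, String checkId, long periodSeconds) {
        HttpClient client = HttpClient.newHttpClient();
        HttpRequest request = passRequest(agentBase, checkId);
        ScheduledExecutorService scheduler = Executors.newSingleThreadScheduledExecutor();
        scheduler.scheduleAtFixedRate(
                () -> client.sendAsync(request, HttpResponse.BodyHandlers.discarding()),
                0, periodSeconds, TimeUnit.SECONDS);
        return scheduler;
    }

    public static void main(String[] args) {
        // Only prints the request so the example runs without a Consul agent.
        HttpRequest request = passRequest("http://localhost:8500", "service:order-service-1");
        System.out.println(request.method() + " " + request.uri());
    }
}
```

The period should be comfortably shorter than the TTL configured on the check. If the JVM stalls for longer than the TTL, the scheduler cannot fire, the TTL expires, and Consul marks the check as critical without any probe from the agent.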

Hardware-Mirror Insight: Frequent health checks (e.g., every 1 second) provide fast failover, but every check is one more request your application has to serve and one more round trip on the network. Pick the shortest interval your hosts can absorb without taking CPU time away from real traffic.

5. Consul as a Config Source (KV Store)

Beyond discovery, Consul includes a distributed Key-Value (KV) Store. This can be an alternative to the Spring Cloud Config Server (Module 35).

Why use Consul for Config?

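- Real-time Updates: Consul uses "Long Polling" (blocking queries) to watch for changes. When you update a key in the Consul UI, watching applications are notified as soon as the write commits, typically within about a second, instead of waiting for a fixed refresh schedule.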

- Consistency: Values are stored via Raft, ensuring that all nodes see the same configuration.
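Under the hood, long polling is a normal KV read with two extra query parameters, and the stored value comes back Base64-encoded inside a JSON document. The sketch below builds such a blocking read and decodes a value. The key `config/order-service/log.level` and the class and method names are hypothetical, chosen only for illustration.

```java
import java.net.URI;
import java.nio.charset.StandardCharsets;
import java.util.Base64;

/** Helpers for reading Consul's KV store over HTTP (illustrative sketch). */
final class ConsulKvSketch {

    /**
     * Builds a blocking read: the request returns once the key changes past
     * {@code lastIndex}, or after {@code wait} (e.g. "30s") elapses.
     */
    static URI blockingReadUri(String consulBase, String key, long lastIndex, String wait) {
        return URI.create(consulBase + "/v1/kv/" + key + "?index=" + lastIndex + "&wait=" + wait);
    }

    /** Decodes the Base64 "Value" field of a KV response entry into text. */
    static String decodeValue(String base64Value) {
        return new String(Base64.getDecoder().decode(base64Value), StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        // Hypothetical key; 42 stands in for the index returned by a previous read.
        System.out.println(blockingReadUri(
                "http://localhost:8500", "config/order-service/log.level", 42, "30s"));
        System.out.println(decodeValue("ZGVidWc="));
    }
}
```

Each response carries the current index in the `X-Consul-Index` header. A watcher feeds that value into the next request's `index` parameter, so it only wakes up when something has actually changed.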

    spring:
      cloud:
        consul:
          config:
            enabled: true
            prefix: config
            default-context: application

6. Consul vs. Eureka: The Hardware Tradeoff

| Feature | Netflix Eureka | HashiCorp Consul |
|---|---|---|
| Consistency Model | AP (Availability + Partition tolerance), eventually consistent | CP (Consistency + Partition tolerance), strongly consistent |
| Server Hardware Needs | Passive; servers can be small. | Active; servers need high IOPS/CPU for Raft. |
| Failover Speed | Slower (Heartbeats + Cache Expiry). | Faster (Gossip + Active Probing). |
| Multi-Datacenter | Difficult to coordinate. | Native WAN Gossip support. |

Hardware Selection: Use Eureka if you have unreliable hardware or a "Scale-at-all-costs" mentality where staying available matters more than always-correct registry data. Use Consul if you have a stable infrastructure and require strong guarantees that a service is actually healthy before sending traffic.

7. Service Mesh: The Future with Consul Connect

In advanced "Hardware-Mirror" setups, Consul provides Connect, which handles service-to-service security via mTLS (Mutual TLS).

- Consul's built-in certificate authority issues and rotates certificates for every service in the mesh.

- Encryption happens in a "Sidecar Proxy" (like Envoy) that runs next to each service instance.

- Your Java application thinks it's talking over plain HTTP, but the proxy is encrypting the traffic on the wire, typically using the CPU's AES-NI instructions.

8. Summary

Consul is the "Nervous System" of your microservice cluster. By leveraging the Gossip Protocol for discovery and Raft for consistency, it provides a rock-solid foundation for high-availability systems. Understanding the hardware tax of gossip noise and the importance of health-check intervals allows you to tune Consul for maximum reliability.

In the next module, Module 37: Fault Tolerance with Resilience4j, we'll see how to handle the inevitable failures that occur when Service Discovery reports a node is "Healthy" but it's actually slow.

Next Steps:

- Run Consul in a Docker container: `docker run -d -p 8500:8500 consul` (recent releases are published as `hashicorp/consul`).

- Enable `spring-cloud-starter-consul-discovery` in an existing Spring Boot app.

- Open the Consul UI at http://localhost:8500 and watch your service register.

- Kill the application process and see how quickly Consul detects the "Critical" state.

Frequently Asked Questions

Q: What is Consul and how does it differ from Eureka for service discovery?

Consul is a multi-purpose infrastructure tool from HashiCorp that provides service discovery, health checking, key-value configuration storage, and service mesh capabilities. Eureka is Netflix's service registry focused solely on discovery. Consul uses a consensus protocol (Raft) for strong consistency, supports DNS-based discovery natively, and runs as an agent on each node. Use Consul when you need a unified tool for discovery, configuration, and potentially service mesh; Eureka when you want a simpler, pure-Java registry.

Q: How does Consul's health checking work with Spring Boot?

When your Spring Boot application registers with Consul (via spring-cloud-starter-consul-discovery), it provides a health check endpoint (by default /actuator/health). Consul's agent periodically calls this endpoint. When the check fails, Consul marks the instance as critical and leaves it out of the healthy instances returned to callers. A dead instance can still be returned for up to one check interval before the failure is noticed, so keep the interval short enough for your failover needs.

Q: Can I use Consul as a configuration store instead of Spring Cloud Config Server?

Yes. The spring-cloud-starter-consul-config dependency enables Consul's key-value store as a configuration backend. Properties are stored under configurable prefixes in Consul's KV store and are loaded at startup (via bootstrap.properties in older Spring Cloud releases, or via spring.config.import=optional:consul: in newer ones). Changes can be detected via long-polling and automatically refreshed in running instances - similar to Config Server with Spring Cloud Bus, but without needing a separate message broker.