Resilience Patterns: Circuit Breakers, Retries, Timeouts, and Bulkheads

Resilience Patterns: Circuit Breakers, Retries, Timeouts, and Bulkheads

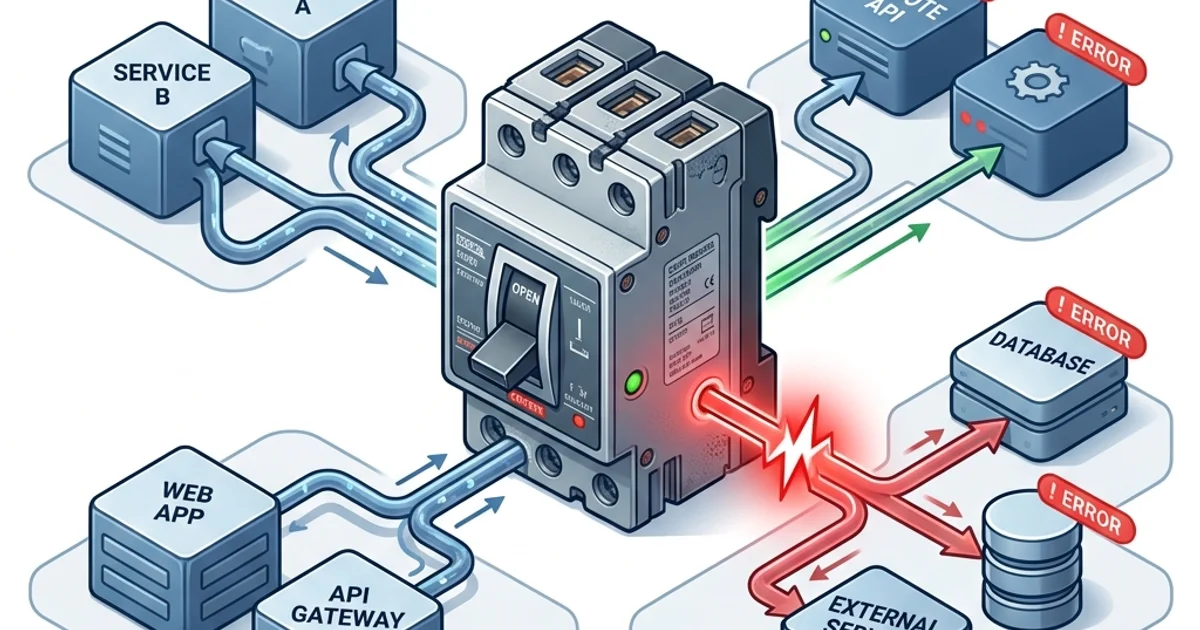

In a microservices architecture, your application depends on external services - payment processors, notification services, recommendation engines, third-party APIs. Any of these can fail or slow down at any time. Without resilience patterns, one slow or failing dependency can bring down your entire application through cascading failures.

Resilience patterns are design techniques that allow your system to continue operating, possibly at reduced capacity, when dependencies fail. This guide covers the four essential patterns: Circuit Breaker, Retry with Exponential Backoff, Timeout, and Bulkhead.

The Cascading Failure Problem

Consider a checkout flow that calls three services:

User -> Checkout Service -> Payment Service (slow)

-> Inventory Service (waiting for Checkout to respond)

-> Notification Service (waiting for Checkout)If Payment Service starts responding in 30 seconds instead of 200ms:

- Checkout's threads are blocked, waiting for Payment

- New requests pile up, all waiting

- Checkout's thread pool exhausts - it stops responding to Inventory and Notification

- Inventory and Notification's threads block, waiting for Checkout

- Their thread pools exhaust too

- The entire system is down because of one slow service

This is a cascading failure. Resilience patterns break the chain.

Circuit Breaker Pattern

The Circuit Breaker monitors calls to a dependency. When the failure rate exceeds a threshold, it "opens" - subsequent calls fail immediately without attempting the actual call. After a timeout, it allows a test call through ("half-open"). If the test succeeds, the breaker closes; if not, it opens again.

CLOSED (normal) -> failure threshold exceeded -> OPEN (failing fast)

OPEN -> timeout expires -> HALF-OPEN (testing) -> success -> CLOSED

-> failure -> OPENCircuit Breaker States Explained

Closed state: All calls go through normally. The breaker counts failures. If the failure rate exceeds the threshold within a time window, the circuit opens.

Open state: All calls fail immediately with a CircuitBreakerOpenError (no network call made). This gives the failing service time to recover without being bombarded by retry storms.

Half-open state: After the open timeout, a single test call is allowed. If it succeeds, the circuit closes and traffic resumes normally. If it fails, the circuit opens again.

Node.js Implementation

// lib/circuit-breaker.js

class CircuitBreaker {

constructor(options = {}) {

this.failureThreshold = options.failureThreshold ?? 5;

this.successThreshold = options.successThreshold ?? 2;

this.timeout = options.timeout ?? 60000; // 60 seconds in open state

this.state = 'CLOSED';

this.failureCount = 0;

this.successCount = 0;

this.lastFailureTime = null;

}

async call(fn) {

if (this.state === 'OPEN') {

if (Date.now() - this.lastFailureTime > this.timeout) {

this.state = 'HALF_OPEN';

} else {

throw new Error('Circuit breaker is OPEN - service unavailable');

}

}

try {

const result = await fn();

this.onSuccess();

return result;

} catch (error) {

this.onFailure();

throw error;

}

}

onSuccess() {

this.failureCount = 0;

if (this.state === 'HALF_OPEN') {

this.successCount++;

if (this.successCount >= this.successThreshold) {

this.state = 'CLOSED';

this.successCount = 0;

}

}

}

onFailure() {

this.failureCount++;

this.lastFailureTime = Date.now();

if (this.state === 'HALF_OPEN' || this.failureCount >= this.failureThreshold) {

this.state = 'OPEN';

this.successCount = 0;

}

}

}

// Usage

const paymentBreaker = new CircuitBreaker({ failureThreshold: 5, timeout: 30000 });

async function processPayment(order) {

try {

return await paymentBreaker.call(async () => {

return await paymentService.charge(order.userId, order.total);

});

} catch (err) {

if (err.message.includes('Circuit breaker is OPEN')) {

// Return a graceful fallback

return { success: false, reason: 'payment_service_unavailable', canRetry: true };

}

throw err;

}

}Using Resilience4j (Java/Spring)

// application.yml

resilience4j:

circuitbreaker:

instances:

paymentService:

registerHealthIndicator: true

slidingWindowSize: 10 # Last 10 calls

failureRateThreshold: 50 # Open if >50% fail

waitDurationInOpenState: 30s # Stay open for 30s

permittedNumberOfCallsInHalfOpenState: 3@CircuitBreaker(name = "paymentService", fallbackMethod = "paymentFallback")

public PaymentResult processPayment(Order order) {

return paymentClient.charge(order.getUserId(), order.getTotal());

}

public PaymentResult paymentFallback(Order order, Exception ex) {

log.warn("Payment service unavailable, using fallback: {}", ex.getMessage());

return PaymentResult.pending("Payment queued for processing");

}Retry with Exponential Backoff and Jitter

Not all failures are permanent. Network blips, temporary service overloads, and transient errors resolve within seconds. Retrying is appropriate for these cases.

Naive retry (dangerous):

// WRONG - immediate retry under load makes things worse

for (let i = 0; i < 3; i++) {

try {

return await paymentService.charge(amount);

} catch (err) {

if (i === 2) throw err;

// Retries immediately - contributes to DDoS on failing service

}

}Exponential backoff: Increase the wait time between retries exponentially. Each failure doubles the wait.

Jitter: Add random variation to the wait time. Without jitter, 1,000 clients all retry at exactly the same time (all waited 1s, then 2s, then 4s) - creating synchronized retry storms that overwhelm the recovering service.

// lib/retry.js - retry with exponential backoff and jitter

async function withRetry(fn, options = {}) {

const {

maxAttempts = 3,

baseDelayMs = 100,

maxDelayMs = 10000,

retryableErrors = [], // List of error codes/names that should be retried

} = options;

for (let attempt = 1; attempt <= maxAttempts; attempt++) {

try {

return await fn();

} catch (error) {

const isLastAttempt = attempt === maxAttempts;

const isRetryable = isRetryableError(error, retryableErrors);

if (isLastAttempt || !isRetryable) {

throw error;

}

// Exponential backoff: 100ms, 200ms, 400ms, 800ms...

const exponentialDelay = baseDelayMs * Math.pow(2, attempt - 1);

// Add jitter: random value between 0 and the exponential delay

const jitter = Math.random() * exponentialDelay;

// Full jitter: delay = random between 0 and exponential_delay

const delay = Math.min(exponentialDelay + jitter, maxDelayMs);

console.log(`Attempt ${attempt} failed, retrying in ${delay.toFixed(0)}ms`);

await sleep(delay);

}

}

}

function isRetryableError(error, retryableErrors) {

// Always retry on network errors and 5xx (server errors)

// Never retry on 4xx (client errors - wrong input won't succeed on retry)

if (error.code === 'ECONNRESET' || error.code === 'ETIMEDOUT') return true;

if (error.status >= 500) return true;

if (error.status >= 400 && error.status < 500) return false;

return retryableErrors.some(e => error.code === e || error.name === e);

}

const sleep = (ms) => new Promise(resolve => setTimeout(resolve, ms));

// Usage

const result = await withRetry(

() => paymentService.charge(order.userId, order.total),

{ maxAttempts: 3, baseDelayMs: 200, retryableErrors: ['ServiceUnavailable'] }

);When NOT to Retry

- HTTP 400-499 (client errors): The request is malformed. Retrying will always fail.

- Idempotency unknown: If you don't know whether the operation was applied, retrying may double-charge a customer. Use idempotency keys.

- Circuit is open: Don't retry against an open circuit breaker. Fail fast.

Timeout Pattern

A timeout is the simplest and most important resilience pattern. Never wait indefinitely for a response.

Without timeouts:

- Service A calls Service B

- Service B is slow (disk I/O issue, GC pause, database lock)

- Service A's thread blocks indefinitely

- All of Service A's threads eventually block

- Service A stops responding - cascading failure

// timeout.js - wrap any async call with a timeout

function withTimeout(promise, timeoutMs, operationName = 'operation') {

const timeoutPromise = new Promise((_, reject) => {

setTimeout(() => {

reject(new Error(`${operationName} timed out after ${timeoutMs}ms`));

}, timeoutMs);

});

return Promise.race([promise, timeoutPromise]);

}

// Usage

async function getRecommendations(userId) {

try {

return await withTimeout(

recommendationService.getFor(userId),

500, // Never wait more than 500ms for recommendations

'recommendation-service'

);

} catch (err) {

if (err.message.includes('timed out')) {

console.warn('Recommendation service timeout, returning empty recommendations');

return []; // Graceful fallback

}

throw err;

}

}Recommended Timeout Values

| Service Type | Recommended Timeout |

|---|---|

| Synchronous API (user-facing) | 200-500ms |

| Database query (simple) | 1-2s |

| Database query (complex report) | 5-30s |

| External payment processor | 10-30s |

| ML inference (non-real-time) | 5-10s |

| Background job step | 60-300s |

The timeout should be set based on what the user experience requires, not what the service might theoretically achieve.

Bulkhead Pattern

Named after the watertight compartments in a ship - if one compartment floods, the others stay dry.

In software, a bulkhead isolates resources (thread pools, connection pools, semaphores) for different operations. If one operation consumes all resources, others remain available.

// lib/bulkhead.js - semaphore-based bulkhead

class Bulkhead {

constructor(maxConcurrent, queueSize = 0) {

this.maxConcurrent = maxConcurrent;

this.queueSize = queueSize;

this.currentCount = 0;

this.queue = [];

}

async execute(fn) {

if (this.currentCount >= this.maxConcurrent) {

if (this.queue.length >= this.queueSize) {

throw new Error('Bulkhead queue full - request rejected');

}

// Queue the request

await new Promise((resolve, reject) => {

this.queue.push({ resolve, reject });

});

}

this.currentCount++;

try {

return await fn();

} finally {

this.currentCount--;

if (this.queue.length > 0) {

const next = this.queue.shift();

next.resolve();

}

}

}

}

// Separate bulkheads for different downstream services

const paymentBulkhead = new Bulkhead(10, 5); // Max 10 concurrent payment calls

const recommendationBulkhead = new Bulkhead(30, 20); // More generous for recommendations

async function checkout(order) {

// Payment is critical - strict bulkhead

const paymentResult = await paymentBulkhead.execute(

() => paymentService.charge(order.userId, order.total)

);

// Recommendations are optional - separate pool, won't affect payment

let recommendations = [];

try {

recommendations = await recommendationBulkhead.execute(

() => recommendationService.getFor(order.userId)

);

} catch {

// Bulkhead rejection - just proceed without recommendations

}

return { paymentResult, recommendations };

}Combining All Four Patterns

In production, you apply all four patterns together on each service call:

// lib/resilient-call.js - all patterns combined

import CircuitBreaker from './circuit-breaker.js';

import { withRetry, withTimeout } from './resilience.js';

function createResilientClient(serviceName, options = {}) {

const breaker = new CircuitBreaker({

failureThreshold: options.failureThreshold ?? 5,

timeout: options.circuitTimeout ?? 30000,

});

const bulkhead = new Bulkhead(

options.maxConcurrent ?? 20,

options.queueSize ?? 10,

);

return async function call(fn, callOptions = {}) {

// 1. Bulkhead - reject if too many concurrent calls

return bulkhead.execute(async () => {

// 2. Circuit breaker - fail fast if service is known to be down

return breaker.call(async () => {

// 3. Retry - retry on transient failures

return withRetry(async () => {

// 4. Timeout - don't wait forever

return withTimeout(

fn(),

callOptions.timeoutMs ?? options.defaultTimeoutMs ?? 2000,

serviceName,

);

}, {

maxAttempts: callOptions.maxAttempts ?? 3,

baseDelayMs: callOptions.baseDelayMs ?? 100,

});

});

});

};

}

// Usage

const paymentClient = createResilientClient('payment-service', {

maxConcurrent: 10,

failureThreshold: 5,

defaultTimeoutMs: 5000,

});

const result = await paymentClient(

() => fetch(`${PAYMENT_SERVICE_URL}/charge`, { method: 'POST', body: JSON.stringify(order) }),

{ maxAttempts: 2, timeoutMs: 10000 }

);Service Mesh: Infrastructure-Level Resilience

In 2026, many teams implement resilience patterns at the infrastructure level using a service mesh (Istio, Linkerd) rather than in application code.

The service mesh runs a sidecar proxy (Envoy) alongside each service. The proxy handles:

- Circuit breaking

- Retries with exponential backoff

- Timeouts

- Rate limiting

- mTLS (mutual TLS authentication)

# Istio VirtualService - circuit breaker in the mesh

apiVersion: networking.istio.io/v1alpha3

kind: DestinationRule

metadata:

name: payment-service

spec:

host: payment-service

trafficPolicy:

connectionPool:

http:

http1MaxPendingRequests: 100

http2MaxRequests: 1000

outlierDetection:

consecutive5xxErrors: 5 # Open circuit after 5 consecutive 5xx errors

interval: 30s

baseEjectionTime: 30s # Eject for 30s

maxEjectionPercent: 50 # Never eject more than 50% of endpointsThe advantage: consistent resilience behavior without every team implementing it in their service code.

Frequently Asked Questions

Q: Is Netflix Hystrix still used in 2026?

Netflix Hystrix is in maintenance mode since 2018. The Java ecosystem has migrated to Resilience4j, which is actively maintained, reactive-friendly, and modular. For service-mesh-based circuit breaking, Istio and Linkerd are the modern choice.

Q: What is the difference between a timeout and a circuit breaker?

A timeout sets a maximum wait time for a single call - if the call doesn't return within N milliseconds, fail. A circuit breaker tracks the failure rate across multiple calls over time - if too many fail, it stops making calls at all for a period. They address different scenarios: timeouts handle slow calls, circuit breakers handle repeated failures across many calls.

Q: Should I always retry on 503 Service Unavailable?

Not always. A 503 during a circuit-open period is intentional - retrying immediately just re-hits the circuit breaker and gets another immediate failure. Check if the error is from your circuit breaker before retrying. Retry on 503 only after the circuit-timeout has passed and the breaker is in half-open state.

Q: How do I choose bulkhead sizes?

Base bulkhead sizes on your service's critical paths. Payment processing should have a small, dedicated pool (ensuring it always has capacity). Non-critical services like recommendations can share a larger pool. Start with sizes based on your expected peak concurrency and adjust based on production metrics.

Key Takeaway

Resilience is not optional in distributed systems - every external call will eventually fail. The circuit breaker prevents cascading failures by failing fast when a service is down. Retries with exponential backoff and jitter handle transient errors without creating retry storms. Timeouts ensure one slow service cannot consume all your resources. Bulkheads ensure a problem in one area cannot affect another. Apply all four patterns to every external service call, and your system degrades gracefully rather than collapsing catastrophically.

Read next: Observability: Logging, Monitoring, and Tracing ->

Part of the Software Architecture Hub - engineering the stability.