Edge Computing: Processing at the Source

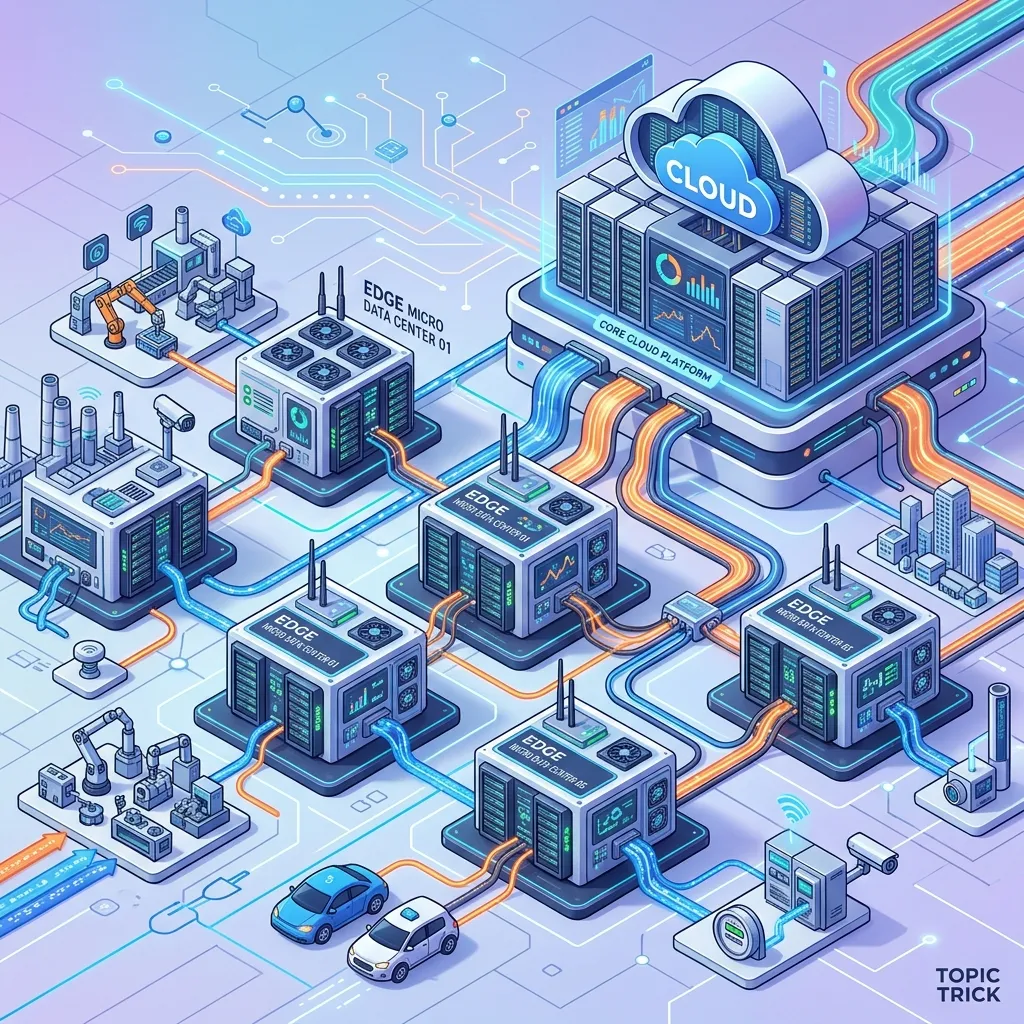

In the old world of Cloud Computing (2010-2022), we optimized for "Throughput." In the new world of Edge Computing (2026+), we optimize for Latency Physics. We are moving the "Brain" of the application away from a few massive data centers and directly into the backbone of the internet itself.

This guide investigates the Global Low-Latency Revolution. We will explore why "Serverless" alone isn't enough and how to architect systems that feel instantaneous to every human on Earth, regardless of their coordinates.

1. Hardware-Mirror: The Physics of the "Last Mile"

The ultimate bottleneck in software is not the CPU or the RAM; it is the Speed of Light.

- The Physics: Light travels through fiber-optic cables at approximately $200,000$ km/s. To cross the Atlantic (New York to London) and back, a packet takes roughly $60$ms minimum. Add in router hops, and you hit $80$ms.

- The Hardware Reality: No amount of code optimization can fix the "Propagation Delay." If a request from Sydney needs $5$ sequential round-trips to a database in Virginia (roughly $15,700$ km away), the fiber alone imposes a floor of about $0.8$ seconds, and real-world routing pushes the lag past $1$ second.

- The Edge Solution: By placing nodes in PoPs (Points of Presence) located physically inside the user's city (often at the ISP level), we reduce the trip to $10$ms.
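The propagation floor is simple arithmetic: the distance there and back, divided by the speed of light in fiber. Here is a minimal TypeScript sketch that assumes straight great-circle distances (about $5,570$ km for New York to London, $15,700$ km for Sydney to Northern Virginia, and $50$ km to a same-city PoP) rather than real cable paths. The function and constant names are illustrative.

```typescript
// Speed of light in optical fiber, roughly 2/3 of c (the figure used above).
const FIBER_KM_PER_S = 200_000;

// Theoretical minimum round-trip time over a straight fiber path, in ms.
// Real cables are longer than the great-circle distance, and every router
// hop adds queuing delay, so measured RTTs are always higher.
function minRoundTripMs(distanceKm: number, kmPerSecond: number = FIBER_KM_PER_S): number {
  return ((2 * distanceKm) / kmPerSecond) * 1000;
}

// Lower bound for a request that makes `roundTrips` sequential round trips.
function sequentialFloorMs(distanceKm: number, roundTrips: number): number {
  return roundTrips * minRoundTripMs(distanceKm);
}

// Approximate great-circle distances (assumptions, not measured cable lengths).
const NEW_YORK_TO_LONDON_KM = 5_570;
const SYDNEY_TO_VIRGINIA_KM = 15_700;
const SAME_CITY_POP_KM = 50;

console.log(`NY <-> London, 1 trip:       ${minRoundTripMs(NEW_YORK_TO_LONDON_KM).toFixed(1)} ms`);
console.log(`Sydney <-> Virginia, 5 trips: ${sequentialFloorMs(SYDNEY_TO_VIRGINIA_KM, 5).toFixed(0)} ms`);
console.log(`Same-city PoP, 1 trip:       ${minRoundTripMs(SAME_CITY_POP_KM).toFixed(1)} ms`);
```

It prints floors of about 55.7 ms, 785 ms, and 0.5 ms. The gap between the 0.5 ms same-city floor and the roughly 10 ms quoted above is taken up by last-mile access and processing, which distance math alone does not capture.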

2. Architecture: V8 Isolates vs. Containers

Traditional cloud "Serverless" functions (e.g., AWS Lambda) often use containers or micro-VMs. At the edge, this is too heavy.

The Problem: The Cold Start Tax

Starting a Docker container takes $200$ms-$800$ms. If your latency budget is $50$ms, the container itself is the enemy.

The Edge Solution: V8 Isolates

Modern Edge Workers (Cloudflare Workers, Deno Deploy) use V8 Isolates.

- The Internals: Instead of a full operating system or even a "Process," an Isolate is a tiny sandbox within a single, massive process.

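- Memory Physics: A new isolate starts in a few milliseconds and needs on the order of a few megabytes of RAM, compared with hundreds of milliseconds and tens of megabytes for a container. A single Edge server can therefore keep thousands of tenants resident in one process, and providers can hide the remaining startup cost (for example, by starting the isolate while the TLS handshake is still in progress), so users rarely pay a "Cold Start" penalty.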
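The isolate model shows up directly in how an edge worker is written: module-level code runs once when the isolate is created, and the runtime then calls a `fetch` handler for every request it routes there. The sketch below follows the shape of a Cloudflare Workers module worker (`export default { fetch }`). The counter and the JSON fields are illustrative assumptions, and a runtime may evict or duplicate isolates at any time, so the counter demonstrates reuse rather than a reliable request count.

```typescript
// Module-scope code runs once, when the runtime creates this isolate.
// Later requests routed to the same warm isolate reuse this state, so there
// is no per-request boot step. Never rely on it for correctness: the
// isolate can be evicted or run as several copies.
let requestsServedByThisIsolate = 0;
const isolateCreatedAt = Date.now();

const worker = {
  // Called once per request inside an already-running isolate.
  async fetch(request: Request): Promise<Response> {
    requestsServedByThisIsolate += 1;
    const body = {
      path: new URL(request.url).pathname,
      requestsServedByThisIsolate,
      isolateAgeMs: Date.now() - isolateCreatedAt,
    };
    // Pure logic: no filesystem, no process start, just a Response.
    return new Response(JSON.stringify(body), {
      headers: { "content-type": "application/json" },
    });
  },
};

export default worker;
```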

3. The "Data" Challenge: Edge Persistence

Code is "Stateless" and easy to move. Data has "Gravity." Moving a 10TB Postgres database to 300 cities is impossible.

Edge Strategy 1: Smart Caching (KV Stores)

Store small, frequently accessed data (session tokens, feature flags) in extremely fast Global KV Stores.

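- Trade-off: High availability, but eventual consistency. It might take $5$ seconds for a "Write" in London to be visible in LA, and some stores (Cloudflare Workers KV, for example) document propagation times of $60$ seconds or more.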
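To see what "eventual" means in practice, here is a toy in-memory model: a hypothetical `ToyGlobalKV` class, not any provider's API. A write is visible in its own region immediately and in every other region only after a fixed replication lag. Time is passed in explicitly, which keeps the behavior deterministic.

```typescript
type Region = string;

interface Write {
  value: string;
  writtenAtMs: number;
  origin: Region;
}

// Toy model of an eventually consistent global KV store (hypothetical).
// A write is visible in its own region at once and in every other region
// only after `replicationLagMs`.
class ToyGlobalKV {
  private readonly replicationLagMs: number;
  // Per-key write history; assumes put() is called with non-decreasing times.
  private readonly history = new Map<string, Write[]>();

  constructor(replicationLagMs: number) {
    this.replicationLagMs = replicationLagMs;
  }

  put(region: Region, key: string, value: string, nowMs: number): void {
    const writes = this.history.get(key) ?? [];
    writes.push({ value, writtenAtMs: nowMs, origin: region });
    this.history.set(key, writes);
  }

  // Newest value this region can see at `nowMs`, or null if none has arrived.
  get(region: Region, key: string, nowMs: number): string | null {
    const writes = this.history.get(key) ?? [];
    for (let i = writes.length - 1; i >= 0; i--) {
      const w = writes[i];
      if (w.origin === region || nowMs >= w.writtenAtMs + this.replicationLagMs) {
        return w.value;
      }
    }
    return null;
  }
}

const kv = new ToyGlobalKV(5_000); // assume a 5-second replication lag
kv.put("london", "flag:new-checkout", "on", 0);
console.log(kv.get("london", "flag:new-checkout", 10)); // on (local write)
console.log(kv.get("los-angeles", "flag:new-checkout", 1_000)); // null (not replicated yet)
console.log(kv.get("los-angeles", "flag:new-checkout", 6_000)); // on (lag has passed)
```

This is why session tokens and feature flags fit KV well: serving a slightly stale value for a few seconds is acceptable. An account balance is not.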

Edge Strategy 2: Edge-Active SQL (D1/Turso)

In 2026, we use Distributed SQLite (Turso) or D1.

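- The Architecture: Your "Main" (primary) database stays in the cloud. Edge locations serve reads from nearby "ReadOnly" replicas, so a query from Sydney does not have to cross the Pacific.

- Synchronicity: Writes are normally forwarded to the primary and then replicated back out, so a replica can briefly lag behind. If the edge must accept writes locally, CRDTs (Conflict-free Replicated Data Types) let replicas merge concurrent updates deterministically, so every node converges to the same state without coordination. The cost is that only merge-friendly operations (counters, sets, last-writer-wins fields) fit the model.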
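A grow-only counter (G-Counter) is about the smallest CRDT that shows the merge idea. This is a generic textbook sketch, not the replication mechanism D1 or Turso use; the node IDs are hypothetical edge locations.

```typescript
// G-Counter: each node increments only its own slot. Merging takes the
// per-node maximum, which is commutative, associative, and idempotent,
// so replicas converge no matter how often or in what order they merge.
class GCounter {
  private readonly nodeId: string;
  private readonly counts: Map<string, number>;

  constructor(nodeId: string) {
    this.nodeId = nodeId;
    this.counts = new Map();
  }

  increment(by = 1): void {
    if (by < 0) throw new RangeError("a G-Counter can only grow");
    this.counts.set(this.nodeId, (this.counts.get(this.nodeId) ?? 0) + by);
  }

  // Fold another replica's state into this one.
  merge(other: GCounter): void {
    for (const [node, count] of other.counts) {
      this.counts.set(node, Math.max(this.counts.get(node) ?? 0, count));
    }
  }

  value(): number {
    let total = 0;
    for (const count of this.counts.values()) total += count;
    return total;
  }
}

const sydney = new GCounter("syd");
const london = new GCounter("lhr");
sydney.increment(3); // concurrent local writes on two edge nodes
london.increment(2);
sydney.merge(london); // exchange state in either direction
london.merge(sydney);
console.log(sydney.value(), london.value()); // 5 5
```

A counter that must also decrement is usually built from two of these (a PN-Counter), one for increments and one for decrements.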

4. Edge-Native Use Cases: Zero-Flicker Apps

Dynamic A/B Testing

Instead of doing a "Client-side" redirect (which causes a flicker), the Edge Worker parses the user's cookie and serves the "B" version of the HTML directly. To the browser, it looks like a standard response.
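Here is a minimal sketch of the cookie-based split, using only standard Web APIs (`Request`, `Response`, `Headers`) so it could run in any Workers-style runtime. The cookie name `ab-variant`, the 50/50 split, the 30-day cookie lifetime, and the placeholder HTML are all assumptions; `rand` is injectable so the assignment can be tested.

```typescript
type Variant = "A" | "B";

const COOKIE_NAME = "ab-variant"; // hypothetical cookie name

// Read one cookie value from a raw Cookie header such as "a=1; b=2".
function readCookie(header: string | null, name: string): string | null {
  if (!header) return null;
  for (const part of header.split(";")) {
    const [rawKey, ...rest] = part.split("=");
    if (rawKey.trim() === name) return rest.join("=").trim();
  }
  return null;
}

// Keep an existing assignment; otherwise assign 50/50 using `rand`.
function pickVariant(
  cookieHeader: string | null,
  rand: () => number = Math.random,
): { variant: Variant; isNew: boolean } {
  const existing = readCookie(cookieHeader, COOKIE_NAME);
  if (existing === "A" || existing === "B") return { variant: existing, isNew: false };
  return { variant: rand() < 0.5 ? "A" : "B", isNew: true };
}

// Placeholder pages; in practice they might come from the origin or KV.
const PAGES: Record<Variant, string> = {
  A: "<h1>Checkout (A)</h1>",
  B: "<h1>Checkout (B)</h1>",
};

async function handleAbTest(request: Request): Promise<Response> {
  const { variant, isNew } = pickVariant(request.headers.get("cookie"));
  const headers = new Headers({
    "content-type": "text/html; charset=utf-8",
    // Per-user HTML must not be stored in shared caches.
    "cache-control": "private, no-store",
  });
  if (isNew) {
    // Pin the user to the variant so later visits see the same page.
    headers.append("set-cookie", `${COOKIE_NAME}=${variant}; Path=/; Max-Age=2592000; SameSite=Lax`);
  }
  return new Response(PAGES[variant], { headers });
}
```

Marking the response `private` matters: without it, a shared cache could serve one user's variant to everyone and silently break the experiment.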

Image/Video Optimization

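The Edge inspects the request's Accept header to learn which image formats the browser supports, instead of guessing from the device. If the browser advertises AVIF or WebP, the edge converts the image to that format on the fly. This can cut "Bytes over the wire" substantially compared with JPEG or PNG, further increasing the "Perceived Speed."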
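Choosing the output format is content negotiation on the `Accept` header. This sketch only picks the format and sets the response headers; the actual transcoding is provider-specific and omitted. It also deliberately ignores `q` weights (such as `image/webp;q=0`), which a production version should parse.

```typescript
type ImageFormat = "avif" | "webp" | "original";

// Prefer the most compact format the browser says it accepts. Support
// depends on the browser version, not the device brand, so the Accept
// header is a better signal than the User-Agent.
function chooseImageFormat(acceptHeader: string | null): ImageFormat {
  const accept = (acceptHeader ?? "").toLowerCase();
  if (accept.includes("image/avif")) return "avif";
  if (accept.includes("image/webp")) return "webp";
  return "original";
}

// Responses that differ by Accept must say so; otherwise a shared cache
// could hand an AVIF file to a browser that cannot decode it.
function imageResponseHeaders(format: ImageFormat): Headers {
  const headers = new Headers({ vary: "Accept" });
  if (format !== "original") headers.set("content-type", `image/${format}`);
  return headers;
}
```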

Security: Web Application Firewall (WAF)

Malicious bots are blocked at the Edge Node. This means the "Poison Traffic" never reaches your expensive main database, saving you money on compute costs and protecting you from Layer 7 DDoS attacks.
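Edge-side filtering can start as simply as a User-Agent deny-list plus a per-IP rate limit. The following minimal sketch assumes a hypothetical list of scanner signatures and a fixed-window limiter held in the isolate's memory. Managed WAF products use far richer rule sets.

```typescript
// Illustrative User-Agent fragments of common scanning tools (assumption).
const BLOCKED_AGENTS = ["sqlmap", "nikto", "masscan"];

// Fixed-window request counter per key (for example, a client IP).
class FixedWindowLimiter {
  private readonly limit: number;
  private readonly windowMs: number;
  // No eviction: entries for idle IPs stay in memory in this sketch.
  private readonly windows = new Map<string, { start: number; count: number }>();

  constructor(limit: number, windowMs: number) {
    this.limit = limit;
    this.windowMs = windowMs;
  }

  // Count this request; true while the key is within its limit.
  allow(key: string, nowMs: number): boolean {
    let current = this.windows.get(key);
    if (current === undefined || nowMs - current.start >= this.windowMs) {
      current = { start: nowMs, count: 0 };
      this.windows.set(key, current);
    }
    current.count += 1;
    return current.count <= this.limit;
  }
}

// Returns a status to block with, or null to let the request reach the origin.
function screenRequest(
  ip: string,
  userAgent: string | null,
  limiter: FixedWindowLimiter,
  nowMs: number,
): 403 | 429 | null {
  const ua = (userAgent ?? "").toLowerCase();
  if (BLOCKED_AGENTS.some((sig) => ua.includes(sig))) return 403;
  if (!limiter.allow(ip, nowMs)) return 429;
  return null;
}
```

Because the counters live in one isolate, each edge location enforces its own limit, and the map is never pruned. A real deployment would keep counts in a shared store and expire old windows.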

5. Summary: The Edge Architect's Checklist

- Geography Awareness: Map your user base before choosing Edge regions. If 90% of your users are in Europe, don't waste budget on Edge nodes in Brazil.

- Isolate-Ready Code: Edge workers have no "Local Filesystem." Your code must be pure logic that talks to APIs or KV stores.

- Consistency Matrix: Decide which data needs to be "Global" (Slow) and which can be "Local" (Fast).

- Vendor Neutrality: Use standard Web APIs. If you rely too heavily on one provider's "Specific" Edge logic, you are locked into their pricing forever.

- Monitoring: Use observability tooling (edge logs, traces, and synthetic probes from several regions) to track "P99 Latency at the Edge." If an edge node is slow, your architecture should automatically fail over to the core cloud region.
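The Monitoring item comes down to computing a percentile over recent latency samples and acting on it. Here is a minimal sketch using the nearest-rank definition of P99; the latency budget and the edge-versus-core policy are assumptions.

```typescript
// Nearest-rank percentile (p in 0..100): the smallest sample such that at
// least p% of all samples are less than or equal to it.
function percentile(samplesMs: readonly number[], p: number): number {
  if (samplesMs.length === 0) throw new RangeError("no latency samples");
  const sorted = [...samplesMs].sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p * sorted.length) / 100)); // 1-based
  return sorted[rank - 1];
}

// Hypothetical policy: route to the core region while the edge's P99 is
// over budget; otherwise keep serving at the edge.
function chooseTarget(edgeSamplesMs: readonly number[], p99BudgetMs: number): "edge" | "core" {
  return percentile(edgeSamplesMs, 99) > p99BudgetMs ? "core" : "edge";
}
```

In practice you would add hysteresis (for example, require several bad windows in a row before switching) so traffic does not flap between edge and core.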

Edge Computing is the "New Global Standard." By mastering the deployment of logic to the network edge and the discipline of low-latency data replication, you gain the ability to build applications that feel "Instant" to every human on Earth. You graduate from "Regional Developer" to "Global Architect."

Phase 47: Action Items

- Deploy a "Hello World" worker to $250+$ global locations.

- Measure the latency difference between a "Central" API and an "Edge" API from multiple continents.

- Implement a basic "Edge-Redirect" based on the user's geolocation header.
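For the geolocation redirect, Cloudflare attaches a `CF-IPCountry` request header containing a two-letter country code when IP geolocation is enabled; other platforms use different header names. The country list and the `/eu/` path prefix below are hypothetical.

```typescript
// Hypothetical set of countries served by an EU-specific site section.
const EU_COUNTRIES = new Set(["DE", "FR", "ES", "IT", "NL", "IE"]);

// Returns a redirect for EU visitors, or null to continue to the origin.
function geoRedirect(request: Request): Response | null {
  const url = new URL(request.url);
  const country = (request.headers.get("cf-ipcountry") ?? "").toUpperCase();
  // Skip requests already under /eu/ to avoid a redirect loop.
  if (EU_COUNTRIES.has(country) && !url.pathname.startsWith("/eu/")) {
    url.pathname = `/eu${url.pathname}`; // query string is preserved
    return Response.redirect(url.toString(), 302);
  }
  return null;
}
```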

Part of the Software Architecture Hub - engineering the speed.