Serverless Architecture in 2026: Beyond Functions - Cold Starts, AI Inference & Global Edge

Serverless Architecture in 2026: Beyond Functions - Cold Starts, AI Inference & Global Edge

Table of Contents

- The Pay-for-Value Economics

- The Serverless Spectrum: FaaS to Containers

- How Event-Driven Triggers Work

- Cold Starts: The Problem and 2026 Solutions

- Serverless AI: The Biggest Growth Driver

- Edge Serverless: Cloudflare Workers vs Lambda@Edge

- State Management in a Stateless World

- Orchestrating Complex Workflows: Step Functions & Temporal

- Cost Model: When Serverless Wins vs Loses

- Vendor Lock-in: The Real Risk

- Frequently Asked Questions

- Key Takeaway

The Pay-for-Value Economics

Traditional cloud computing requires reserving capacity upfront:

Traditional VM/Kubernetes:

+-- 10 app instances x $0.10/hr = $0.10/hr minimum (even at 0 traffic)

+-- Pay for RAM/CPU you reserve, not use

+--- Over-provision for peak -> 70% utilization average

Serverless FaaS (Lambda):

+-- Pay per 1ms of actual execution

+-- Pay per actual requests (first 1M free on AWS)

+-- $0.00 at 3am when no traffic

+--- Infinite burst capacity - no capacity planning neededMonthly cost example - API serving 100K requests/day:

- EC2 t3.medium (always on): ~$30/month

- AWS Lambda (100ms avg, 128MB): ~$2.50/month

- 12x cheaper at this traffic level

The crossover point: typically ~1-2M requests/month at 100-200ms average execution, or sustained 10-20% CPU utilization - beyond that, reserved compute is cheaper.

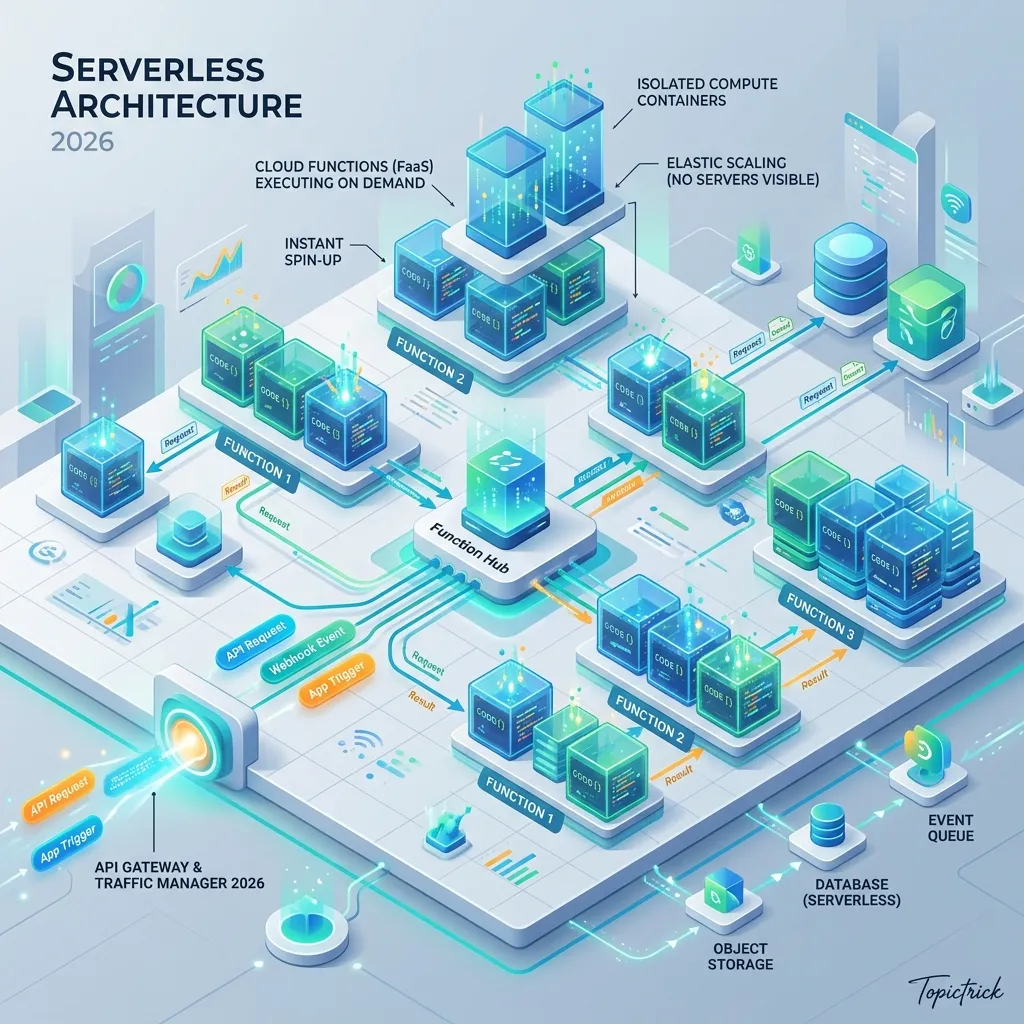

The Serverless Spectrum: FaaS to Containers

Function-as-a-Service (FaaS): AWS Lambda, Google Cloud Functions, Cloudflare Workers. Stateless, request-scoped, millisecond billing, hard limits (15min max in Lambda, 30s in Workers).

Serverless Containers: AWS Fargate, Google Cloud Run, Azure Container Apps. Your container runs on demand, scales to zero, but you control the runtime. No 15-minute limit. Better for long-running processes (video processing, ML inference batches).

How Event-Driven Triggers Work

Serverless functions are dormant until an event wakes them:

# Lambda handler - the complete "server" for this endpoint:

import json

def handler(event, context):

# event contains HTTP request details (API Gateway proxy format)

http_method = event['httpMethod']

path = event['path']

body = json.loads(event.get('body') or '{}')

if http_method == 'POST' and path == '/orders':

# Business logic here

order = create_order(body)

return {

'statusCode': 201,

'headers': {'Content-Type': 'application/json'},

'body': json.dumps({'orderId': order.id})

}

return {'statusCode': 404, 'body': 'Not Found'}Cold Starts: The Problem and 2026 Solutions

A cold start occurs when a new instance of your function is initialised from scratch - the cloud provider must:

- Allocate a container

- Load your code and dependencies

- Execute your initialisation code

- Then handle the request

Typical cold start latencies (2024 benchmarks):

| Runtime | P50 Cold Start | P99 Cold Start |

|---|---|---|

| Node.js 20 | 200ms | 800ms |

| Python 3.12 | 250ms | 900ms |

| Java 21 (without SnapStart) | 1,500ms | 4,000ms |

| Java 21 + AWS SnapStart | 90ms | 300ms |

| Go 1.22 | 80ms | 250ms |

| Cloudflare Workers (V8 Isolate) | < 5ms | < 15ms |

| Bun on Lambda | 60ms | 200ms |

2026 Solutions:

-

AWS Lambda SnapStart (Java): Takes a snapshot of the initialized JVM. Subsequent cold starts restore from snapshot instead of JVM boot - 10x improvement.

-

Cloudflare Workers (V8 Isolates): Not a container per request - each request runs in a V8 JavaScript isolate (same tech as Chrome tabs). Startup time: microseconds.

-

Provisioned Concurrency (AWS): Pre-warm N instances so they're always ready - eliminates cold starts at a cost (you pay for idle warm instances).

-

Choose Go/Bun/Rust: Compiled languages with minimal runtime startup are naturally cold-start-friendly.

Serverless AI: The Biggest Growth Driver

Serverless AI is the primary force expanding the serverless market in 2026:

# Serverless LLM inference - no GPU management required:

import anthropic

client = anthropic.Anthropic()

# This calls Anthropic's serverless compute - you pay per input/output token

# No GPU provisioning, no model loading, no scaling

message = client.messages.create(

model="claude-opus-4-5",

max_tokens=1024,

messages=[{"role": "user", "content": "Summarise this contract..."}]

)

# Same pattern for image generation, embedding models, speech-to-text

# The entire ML inference stack is abstracted awayServerless AI platforms in 2026:

| Platform | Models | Pricing Model |

|---|---|---|

| AWS Bedrock | Claude, Llama, Mistral, Titan | Per token |

| Google Vertex AI | Gemini, Claude, open-source | Per token + per second |

| Together AI | 50+ open-source models | Per token |

| Replicate | Image, video, audio models | Per second of compute |

| Modal | Custom models (bring your own) | Per second of GPU |

State Management in a Stateless World

Serverless functions are ephemeral - they have no memory between requests. State must be externalised:

| State Type | Solution | Latency |

|---|---|---|

| Session state | Redis (Upstash, ElastiCache) | < 1ms |

| User data | DynamoDB, PlanetScale, Turso | 1-10ms |

| File storage | S3, R2, Cloudflare KV | 5-50ms |

| Long-lived workflow state | Temporal, AWS Step Functions | Durable |

| Short-lived computation cache | Lambda /tmp (up to 10GB) | < 1ms |

| Global edge KV | Cloudflare KV, Deno KV | < 5ms |

# Serverless function with DynamoDB for state:

import boto3

dynamodb = boto3.resource('dynamodb')

table = dynamodb.Table('user-sessions')

def handler(event, context):

user_id = event['requestContext']['authorizer']['userId']

# Read state from DynamoDB (external, durable)

session = table.get_item(Key={'userId': user_id}).get('Item')

# Process request using session state

result = process_with_session(event, session)

# Write updated state back

table.put_item(Item={'userId': user_id, **result.updated_state})

return {'statusCode': 200, 'body': json.dumps(result.response)}Cost Model: When Serverless Wins vs Loses

Serverless WINS when:

✅ Traffic is spiky, unpredictable, or low-volume

✅ You have many small isolated functions

✅ Zero-traffic periods exist (nights, weekends)

✅ You need infinite burst capacity (flash sales, viral moments)

✅ Development speed > operational cost

Serverless LOSES when:

❌ Sustained high CPU utilization (> 50% continuously)

❌ Functions exceed 15-minute execution limit

❌ Large in-memory datasets needed across requests

❌ Sub-10ms cold start requirements for all users

❌ Regulatory requirements for dedicated infrastructureFrequently Asked Questions

Is Kubernetes dead? Should everything be serverless? No - Kubernetes and serverless serve different use cases. Kubernetes excels at long-running, stateful workloads with complex networking requirements (databases, ML training, WebSocket servers, background workers). Serverless excels at stateless, request-scoped processing with variable traffic. Most large systems use both: Kubernetes for persistent services, serverless for event-driven processing and APIs.

How do I avoid vendor lock-in with serverless? Use the Serverless Framework or AWS CDK with portable abstractions. Implement your business logic as pure functions that receive/return standard request/response objects - avoid using vendor-specific SDKs directly inside your business logic. Use OpenTelemetry for observability (not vendor-proprietary agents). The adapter pattern from Hexagonal Architecture applies here: your core code knows nothing about Lambda; a thin adapter translates Lambda events to your domain objects.

Key Takeaway

Serverless in 2026 is the dominant architecture for new API services, event-driven processing, and AI inference pipelines - not because it's always cheaper, but because it eliminates the operational tax of managing servers. Cold starts are largely solved for most runtimes. The remaining limits (15-minute execution, statelessness, vendor lock-in) are engineering constraints to design around, not fundamental blockers. For the majority of web APIs, background jobs, and AI-powered features in 2026, the answer to "Should I use serverless?" is: "Yes, unless you have specific requirements that make traditional compute objectively better."

Read next: Platform Engineering Architecture: The Internal Developer Platform ->

Part of the Software Architecture Hub - comprehensive guides from architectural foundations to advanced distributed systems patterns.