FinOps for Architects: Engineering for Cloud Economy

In the early days of Cloud (2010), the goal was "Global Scale." In the modern era (2026), the goal is Global Efficiency. We have moved beyond the "Checkwriter" phase of cloud where companies paid whatever AWS or Azure asked. We are now in the era of Cost-Aware Architecture.

This 1,500+ word deep dive investigates the Engineering of the Cloud Bill. We will move beyond "Deleting old S3 buckets" and explore how to bake financial logic into your systems from the first line of code, ensuring your platform is as profitable as it is performant.

1. Hardware-Mirror: The "CPU Idle" Physics

In a data center, a CPU draws power even when it is doing nothing.

- The Physics: A server running at 10% utilization can still draw roughly half of the power it would draw at 100% utilization.

- The Financial Waste: In the cloud, you pay for "Allocated Resources," not for what you use. If your Kubernetes pod "Requests" 2 cores but only uses 0.2, you are paying for 1.8 idle cores and burning investor capital for no result.

- The Solution: Rightsizing and Bursting. Use "Burstable" (T-series) instances for non-peak workloads and automate the HPA (Horizontal Pod Autoscaler) to kill excess capacity within minutes of traffic drops.
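The gap between requested and used CPU can be put into numbers. The following is a minimal sketch, not a definitive sizing tool: the function names and the 30% headroom default are illustrative assumptions, and real rightsizing would use peak usage observed over a representative period.

```python
def waste_percentage(requested_cores: float, used_cores: float) -> float:
    """Share of requested CPU that is paid for but never used, in percent."""
    if requested_cores <= 0:
        raise ValueError("requested_cores must be positive")
    idle = max(requested_cores - used_cores, 0.0)
    return round(100.0 * idle / requested_cores, 1)


def rightsized_request(peak_used_cores: float, headroom: float = 0.3) -> float:
    """Suggest a CPU request: observed peak plus a safety margin (assumed 30%)."""
    return round(peak_used_cores * (1.0 + headroom), 2)


# The pod from the text: requests 2 cores, uses 0.2.
print(waste_percentage(2.0, 0.2))   # 90.0
print(rightsized_request(0.2))      # 0.26
```

Under these assumptions, 90% of the pod's requested CPU is idle, and a request of about 0.26 cores would cover the observed usage with margin.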

2. The Power Lever: Arm64 (Graviton) Transition

The single largest "Architectural ROI" in 2026 is the migration from x86 (Intel/AMD) to Arm64 (AWS Graviton / Google Tau T2A).

- The Physics: Arm processors use Reduced Instruction Set Computing (RISC). They perform more work per watt and generate less heat.

- The Economics: Cloud providers pass these savings to you. Arm instances are typically 20% cheaper than x86.

- The Performance: For web-heavy workloads (Java, Go, Python), Arm is often 20% faster.

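- The Lever: By changing your build target to `linux/arm64`, you can achieve a price-performance boost of roughly 40-50% across your estate, provided your dependencies and base images ship Arm builds. A 20% lower price combined with 20% more throughput compounds to about 1.5x the work per dollar.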
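How the price and performance figures above combine is easy to get wrong, because the two effects multiply rather than add. This is a small sketch of the arithmetic; the function name is an illustrative assumption, and the 20% inputs are the figures quoted in this section, not measurements.

```python
def price_performance_gain(price_discount: float, speedup: float) -> float:
    """Relative gain in work-per-dollar versus the baseline instance."""
    relative_price = 1.0 - price_discount   # e.g. 0.80 of the x86 price
    relative_throughput = 1.0 + speedup     # e.g. 1.20x the x86 throughput
    return relative_throughput / relative_price - 1.0


gain = price_performance_gain(price_discount=0.20, speedup=0.20)
print(f"{gain:.0%} more work per dollar")   # 50% more work per dollar
```

Seen from the other side, the cost per unit of work falls to 0.8 / 1.2, or about two thirds of the x86 baseline. Your own benchmark numbers should replace the 20% placeholders before you budget on them.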

3. The Unit Economics of a Lambda Call

Serverless functions (AWS Lambda, Azure Functions) are often marketed as "Cost-free when idle." While true, they introduce a different kind of financial tax: The Cold Start Latency Tax.

The Physics of the Cold Start

When a Lambda starts, the cloud provider must physically move your code to a raw server, initialize a container, and start your runtime (JVM, Node, Go).

- The Financial Cost: You pay for the Initialization Time. If your Java Lambda takes 5 seconds to start, you are paying for 5 seconds of maximum-wattage CPU cycles before your first line of business logic even runs.

- The Architect's Lever: Use Provisioned Concurrency for high-priority routes, or switch to ahead-of-time compilation (for example, GraalVM Native Image or an LLVM-based native toolchain) to reduce cold start times from seconds to milliseconds.

Memory Allocation vs. CPU Power

In FaaS, you don't choose "CPU cores." You choose Memory.

- The Hardware Link: Providers such as AWS Lambda allocate CPU power in proportion to configured memory. If you double the memory, you roughly double the CPU power.

- The FinOps Strategy: Sometimes, allocating 1024MB to a 128MB task is cheaper because the task finishes 10x faster, resulting in lower total "Duration-based" billing. This is the Power-Tuning requirement of modern FinOps.
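The trade-off can be checked with duration-based billing arithmetic. The sketch below assumes a price of $0.0000166667 per GB-second (a commonly published x86 on-demand rate; check your region's price list) and ignores the flat per-request charge, which is the same at every memory setting. The function names are illustrative.

```python
PRICE_PER_GB_SECOND = 0.0000166667  # assumed rate in USD; verify for your region


def invocation_cost(memory_mb: int, duration_s: float) -> float:
    """Duration charge for one invocation: GB configured x seconds x rate."""
    return (memory_mb / 1024) * duration_s * PRICE_PER_GB_SECOND


def break_even_speedup(small_mb: int, large_mb: int) -> float:
    """How much faster the larger setting must run to cost the same."""
    return large_mb / small_mb


small = invocation_cost(128, 10.0)   # 1.25 GB-seconds
large = invocation_cost(1024, 1.0)   # 1.00 GB-seconds
print(f"128 MB: ${small:.8f}   1024 MB: ${large:.8f}")
print(f"saving per call: {1 - large / small:.0%}")   # saving per call: 20%
print(break_even_speedup(128, 1024))                 # 8.0
```

Moving from 128 MB to 1024 MB multiplies the rate by 8, so the larger setting only wins when the task runs more than 8x faster. The 10x speedup in the example clears that bar; a 4x speedup would double the bill.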

4. Spot Instance Survival: 90% Discounts for the Brave

Spot instances are spare capacity that the cloud provider can "reclaim" at any time. On AWS you get a 2-minute notice; other providers may give less.

The Architectural Requirement: Statelessness

To use Spot effectively, your architecture must be Interruption-Tolerant.

- The Internals: When the interruption notice appears on the instance metadata endpoint, your application has 120 seconds to:

  - Stop accepting new requests.

  - Flush logs to a persistent sink.

  - Gracefully shut down.

- The Reward: For CI/CD runners, batch processing, and non-critical microservices, Spot reduces your compute bill by 70%-90%.
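The shutdown sequence above can be sketched as follows for an AWS EC2 Spot instance. This is an outline under stated assumptions, not a production handler: it reads the `spot/instance-action` path of the instance metadata service using an IMDSv2 session token, and the `Worker` class with its in-memory log buffer is a hypothetical stand-in for your own service and log sink.

```python
import json
import urllib.error
import urllib.request
from datetime import datetime, timezone

IMDS = "http://169.254.169.254/latest"


def fetch_notice(timeout: float = 1.0):
    """Return the notice body, or None while no interruption is pending (404)."""
    token_req = urllib.request.Request(
        f"{IMDS}/api/token", method="PUT",
        headers={"X-aws-ec2-metadata-token-ttl-seconds": "60"})
    token = urllib.request.urlopen(token_req, timeout=timeout).read().decode()
    req = urllib.request.Request(
        f"{IMDS}/meta-data/spot/instance-action",
        headers={"X-aws-ec2-metadata-token": token})
    try:
        return urllib.request.urlopen(req, timeout=timeout).read().decode()
    except urllib.error.HTTPError as err:
        if err.code == 404:
            return None
        raise


def seconds_remaining(notice_body: str, now: datetime) -> float:
    """Parse a notice like {"action": "terminate", "time": "...Z"}."""
    doc = json.loads(notice_body)
    deadline = datetime.strptime(doc["time"], "%Y-%m-%dT%H:%M:%SZ")
    return (deadline.replace(tzinfo=timezone.utc) - now).total_seconds()


class Worker:
    """Hypothetical service wrapper; replace the buffers with real sinks."""

    def __init__(self):
        self.accepting = True
        self.log_buffer = []
        self.flushed = []

    def drain(self):
        self.accepting = False                 # 1. stop accepting new requests
        self.flushed.extend(self.log_buffer)   # 2. flush logs to the sink
        self.log_buffer.clear()
        # 3. the caller exits the process once drain() returns
```

A polling loop would call `fetch_notice()` every few seconds and invoke `drain()` on the first non-`None` result. Keeping the parsing and draining logic separate from the HTTP call lets you test the shutdown path without running on EC2.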

5. Storage Economics: S3 Tiering & The "Small File Tax"

Data is heavy. Moving it, storing it, and retrieving it all have different pricing models that architects must master.

The Tiering Geometry

- S3 Standard: High availability, high cost.

- S3 Intelligent Tiering: Automatically moves data based on access patterns. Recommended for 90% of use cases.

- S3 Glacier Deep Archive: The "Bit Graveyard." Costs $1 per Terabyte/month, but takes hours to retrieve.

The Small File Tax

If you store 1 million 1KB files, the "Request Cost" (PUT/GET) will be higher than the "Storage Cost."

- The Architect's Fix: Batching. Combine small files into a larger `.tar` or `.parquet` file before uploading to S3 to minimize per-request overhead.
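To see the size of the small file tax, compare request charges with storage charges. The sketch below assumes S3 Standard list prices of $0.005 per 1,000 PUT requests and $0.023 per GB-month (verify both for your region); the function names are illustrative, and `bundle` shows one simple way to batch with the standard library's `tarfile` module.

```python
import io
import tarfile

PUT_PRICE_PER_1000 = 0.005     # assumed USD per 1,000 PUT requests
STORAGE_PRICE_PER_GB = 0.023   # assumed USD per GB-month


def upload_cost(object_count: int) -> float:
    """Request charge for uploading this many objects."""
    return object_count / 1000 * PUT_PRICE_PER_1000


def monthly_storage_cost(total_bytes: int) -> float:
    """Storage charge for one month (1 GB taken as 1e9 bytes)."""
    return total_bytes / 1e9 * STORAGE_PRICE_PER_GB


def bundle(files: dict[str, bytes]) -> bytes:
    """Pack many small payloads into a single in-memory tar archive."""
    buf = io.BytesIO()
    with tarfile.open(fileobj=buf, mode="w") as tar:
        for name, data in files.items():
            info = tarfile.TarInfo(name=name)
            info.size = len(data)
            tar.addfile(info, io.BytesIO(data))
    return buf.getvalue()


print(f"1M single PUTs:  ${upload_cost(1_000_000):.2f}")
print(f"1,000 bundles:   ${upload_cost(1_000):.3f}")
print(f"storage / month: ${monthly_storage_cost(1_000_000 * 1024):.4f}")
```

With these assumed prices, uploading one million 1 KB objects individually costs far more in requests than a month of storing them, while bundling 1,000 files per archive cuts the request charge by a factor of 1,000. The tar format adds some per-file header overhead, so storage grows slightly in exchange.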

6. Data Egress: The Hidden "Extraction Tax"

Cloud providers make it "Free" to move data In, but charge a fortune to move data Out.

- The Physics: Every bit moved across an Availability Zone (AZ) or Region boundary costs money.

- The Architectural Waste: Chatty microservices talking across regions.

- The Solution: Inter-AZ Optimization.

  - Configure your Load Balancer to prioritize "Local" instances.

  - If Service A and Service B talk 1 million times a day, they should live in the same AZ to avoid the "Egress Tax."
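A rough estimate of what a chatty service pair costs across AZs can be sketched as below. The $0.01 per GB rate charged in each direction is an assumption based on typical AWS intra-region pricing, and the payload sizes in the example are invented for illustration; substitute figures from your own traffic.

```python
CROSS_AZ_PRICE_PER_GB = 0.01  # assumed USD per GB, billed on both sides


def daily_cross_az_cost(calls_per_day: int,
                        request_bytes: int,
                        response_bytes: int) -> float:
    """Estimated daily charge for traffic between two AZs."""
    total_gb = calls_per_day * (request_bytes + response_bytes) / 1e9
    # Each GB is billed once leaving one AZ and once entering the other.
    return total_gb * CROSS_AZ_PRICE_PER_GB * 2


# Hypothetical pair: 1 million calls/day, 2 KB requests, 50 KB responses.
per_day = daily_cross_az_cost(1_000_000, 2_000, 50_000)
print(f"${per_day:.2f} per day, ${per_day * 365:.0f} per year")
```

One pair of services is cheap; the charge becomes visible when dozens of services each exchange large payloads across AZs, which is why the estimate is worth running per service pair.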

7. Case Study: The "Zero-Waste" SaaS Pivot

A fintech startup was spending $2M/year on cloud.

- Action 1: Switched all staging environments to Spot Instances (spare capacity at up to a 90% discount).

- Action 2: Migrated the core Go API to AWS Graviton (Arm64).

- Action 3: Implemented S3 Lifecycle Policies to move logs to Glacier after 7 days.

- Result: They reduced their annual bill to $1.1M, saving nearly $1M without dropping a single packet or firing a single engineer.
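A lifecycle policy like the one in Action 3 can be expressed as a small configuration document. The sketch below builds the rule as a plain dictionary in the shape expected by boto3's `put_bucket_lifecycle_configuration`; the `logs/` prefix, the rule ID format, and the function names are assumptions for illustration, not details from the case study.

```python
def log_archive_rule(prefix: str = "logs/", days: int = 7) -> dict:
    """Lifecycle configuration that moves objects under `prefix` to Glacier."""
    return {
        "Rules": [{
            "ID": f"archive-{prefix.strip('/')}-after-{days}d",
            "Filter": {"Prefix": prefix},
            "Status": "Enabled",
            "Transitions": [{"Days": days, "StorageClass": "GLACIER"}],
        }]
    }


def apply_rule(bucket: str, config: dict) -> None:
    """Apply the configuration; requires boto3 and AWS credentials."""
    import boto3  # imported lazily so the rule can be built without the SDK
    boto3.client("s3").put_bucket_lifecycle_configuration(
        Bucket=bucket, LifecycleConfiguration=config)


config = log_archive_rule()
print(config["Rules"][0]["ID"])   # archive-logs-after-7d
```

Building the rule separately from applying it lets you review or version the policy before it touches a bucket. Note that applying a lifecycle configuration replaces any existing one on that bucket.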

8. Summary: The FinOps Architect's Checklist

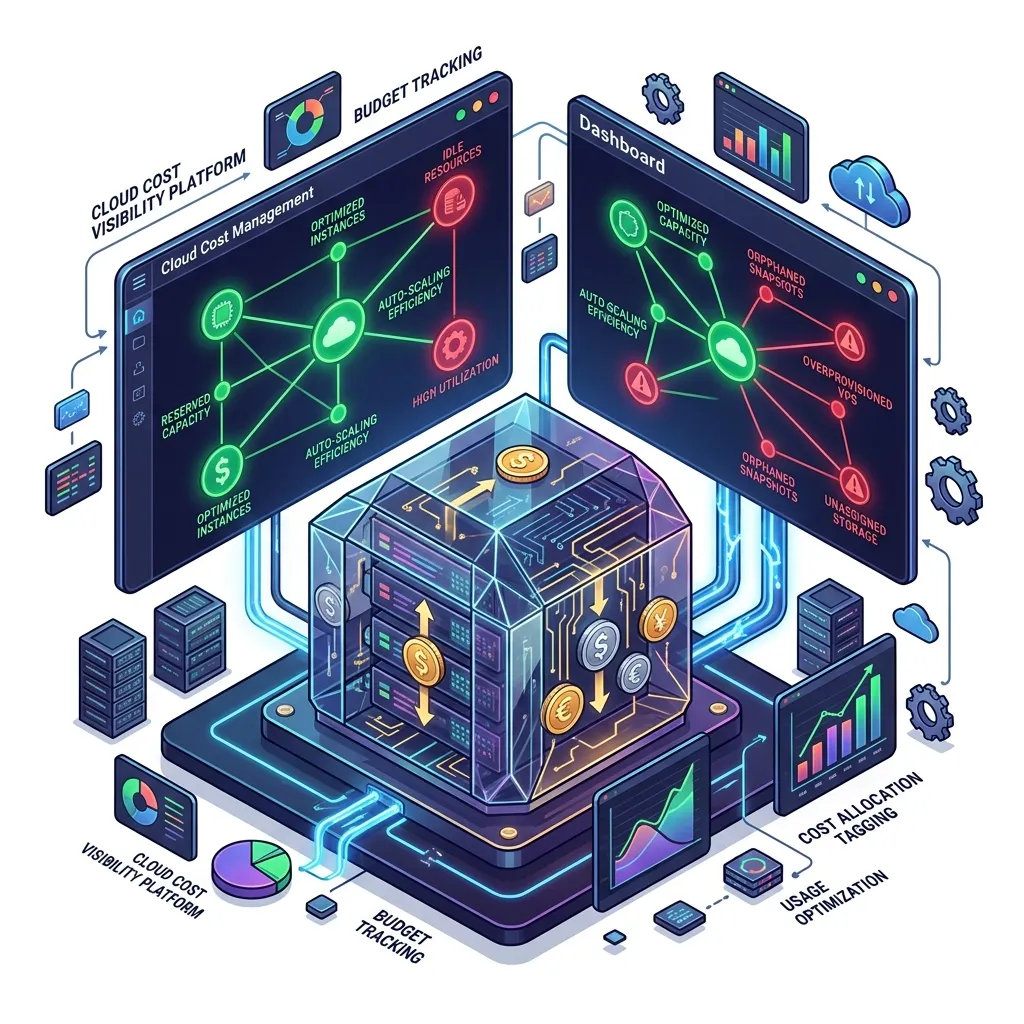

- Tagging Governance: You cannot optimize what you cannot see. Every resource must have a `cost-center` and `owner` tag.

- Unit Economics: Calculate the Cost per User. If the cost per user increases as you grow, your architecture is "Scaling Inefficiently."

- Spot-First Strategy: Use Spot instances for 100% of CI/CD and 80% of non-critical workers.

- Zombie Detection: Automate the deletion of orphaned EBS volumes and unused Load Balancers via your IDP (Review Module 57).

- Multi-Arch by Default: Ensure your Dockerfiles support `arm64` today, so you can switch hardware platforms tomorrow without a code rewrite.
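Two of the checklist items, tagging governance and unit economics, lend themselves to automated checks. This is a minimal sketch: the resource dictionaries are a hypothetical inventory format (in practice they would come from your cloud provider's inventory or billing export), and the function names are illustrative.

```python
REQUIRED_TAGS = {"cost-center", "owner"}


def untagged(resources: list[dict]) -> dict[str, list[str]]:
    """Map each non-compliant resource id to the tags it is missing."""
    report = {}
    for res in resources:
        missing = REQUIRED_TAGS - set(res.get("tags", {}))
        if missing:
            report[res["id"]] = sorted(missing)
    return report


def cost_per_user(monthly_cost: float, active_users: int) -> float:
    return monthly_cost / active_users


def scaling_inefficiently(history: list[tuple[float, int]]) -> bool:
    """history holds (monthly_cost, active_users) pairs, oldest first."""
    units = [cost_per_user(cost, users) for cost, users in history]
    return units[-1] > units[0]


inventory = [
    {"id": "vol-1", "tags": {"owner": "payments", "cost-center": "cc-42"}},
    {"id": "lb-7", "tags": {"owner": "platform"}},
    {"id": "vm-3"},
]
print(untagged(inventory))
# {'lb-7': ['cost-center'], 'vm-3': ['cost-center', 'owner']}
print(scaling_inefficiently([(10_000, 1_000), (30_000, 2_000)]))   # True
```

In the second check the bill tripled while users only doubled, so cost per user rose from $10 to $15, which is the signal the checklist warns about.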

FinOps is not about "Saving Money"; it is about Unit Profitability. By mastering the financial physics of the cloud, you gain the power to build sustainable systems that can last for decades. You graduate from "Managing compute" to "Architecting the Capital Efficiency of Modern Business."

Phase 70: FinOps Actions

- Calculate your "Waste Percentage": What is the difference between your requested (reserved) CPU and your actual CPU usage?

- Run a "Spot Instance Challenge": Try to run your staging environment for 24 hours on 100% Spot capacity.

- Plan a Graviton Pilot: Bench-test your most intensive service on an Arm64 instance.

- Identify the "Data egress geometry": Use a cost visualizer to see how much you spend moving bytes across Availability Zones.

Part of the Software Architecture Hub - making engineering sustainable.