Legacy Modernization: The Strangler Fig in Practice

In every large enterprise, there exists a "Legacy System": a 20-year-old monolith written in a forgotten language, running on hardware that is physically wearing out. The business wants to move to the cloud, but the risk of a "Rip and Replace" is too high.

This 1,500+ word guide investigates the Science of Legacy Modernization. We move beyond the theory of "Cleaning up code" and explore the infrastructure-level strategies required to excise technical rot while the patient remains walking.

1. Hardware-Mirror: The "Extraction Tax" (Mainframe vs. Cloud)

Legacy systems often live on-premise on specialized hardware (Mainframes or IBM Power systems).

- The Physics: Moving data between an on-premise mainframe and a modern AWS/Azure microservice is bounded by speed-of-light latency. Every request pays a network round trip between the data center and the cloud region.

- The Hardware Reality: A call from your modern Go microservice to a legacy COBOL-backed database can take $20$ms-$50$ms in network round-trip time alone, depending on the distance between sites, before the mainframe does any work.

- The Architecture Fix: Implement Local Proximity Caching. Every time you modernize a module, also snapshot the legacy data it reads into a modern regional DB, so that requests avoid the "Mainframe Hop".
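The caching idea can be sketched as a small read-through cache. This is a minimal illustration, not a production design: `ProximityCache`, `fake_legacy_lookup`, and the 30-second TTL are hypothetical names and values chosen for the example, and a real deployment would back the cache with a regional database rather than an in-process dictionary.

```python
import time
from typing import Callable, Dict, Tuple


class ProximityCache:
    """Read-through cache that keeps legacy records close to the modern service."""

    def __init__(
        self,
        fetch_from_legacy: Callable[[str], dict],
        ttl_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch_from_legacy  # hypothetical call that crosses to the mainframe
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[float, dict]] = {}
        self.legacy_calls = 0  # how many times we paid the "Mainframe Hop"

    def get(self, key: str) -> dict:
        now = self._clock()
        cached = self._entries.get(key)
        if cached is not None and now - cached[0] < self._ttl:
            return cached[1]  # served locally, no cross-site round trip
        self.legacy_calls += 1
        value = self._fetch(key)
        self._entries[key] = (now, value)
        return value


def fake_legacy_lookup(account_id: str) -> dict:
    # Stand-in for the slow on-premise call (assumption for this sketch)
    return {"account_id": account_id, "balance": 100}


cache = ProximityCache(fake_legacy_lookup, ttl_seconds=30.0)
first = cache.get("A-1")   # goes to the legacy system
second = cache.get("A-1")  # answered from the local copy
```

The TTL is the trade-off knob: a longer TTL saves more round trips but serves staler data, which is why the guide pairs caching with a sync mechanism later on.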

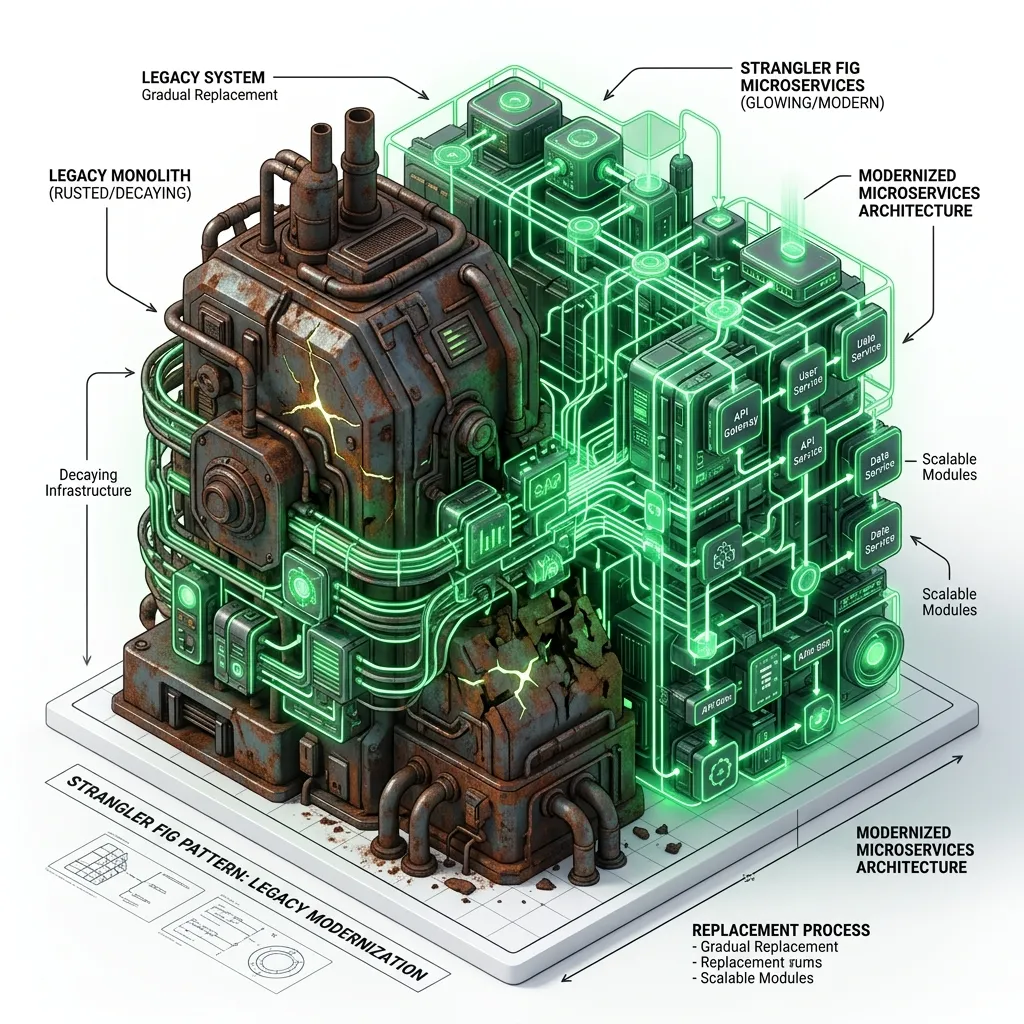

2. The Strangler Fig Pattern: The Global Evolution

Named after the Ficus aurea tree that grows around a host and eventually replaces it, the Strangler Fig is the gold standard for modernization.

Phase 1: The Facade (Intercept)

Place a "Strangler Facade" (a high-performance proxy like Nginx or Kong) in front of the monolith.

- Logic: All traffic hits the Facade. For 99% of routes, it "Proxies" the request back to the legacy system.

- Engineering: This is your "Control Hub." It allows you to flip traffic between old and new versions without a redeploy.
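The routing decision the Facade makes can be sketched in a few lines. This is an illustration of the logic only, assuming hypothetical upstream addresses and a hypothetical `StranglerFacade` class; in practice the same table would live in your Nginx or Kong configuration.

```python
from dataclasses import dataclass, field
from typing import Dict

LEGACY = "http://legacy.internal"  # hypothetical upstream addresses
MODERN = "http://modern.internal"


@dataclass
class StranglerFacade:
    # Path prefixes that have been migrated; everything else falls through to legacy.
    migrated_prefixes: Dict[str, str] = field(default_factory=dict)

    def flip(self, prefix: str, upstream: str) -> None:
        """Point a route at a new upstream at runtime, without redeploying either system."""
        self.migrated_prefixes[prefix] = upstream

    def rollback(self, prefix: str) -> None:
        """Send the route back to the legacy system."""
        self.migrated_prefixes.pop(prefix, None)

    def upstream_for(self, path: str) -> str:
        # Longest matching prefix wins, so /login/reset can differ from /login
        matches = [p for p in self.migrated_prefixes if path.startswith(p)]
        if not matches:
            return LEGACY
        return self.migrated_prefixes[max(matches, key=len)]


facade = StranglerFacade()
facade.flip("/login", MODERN)
```

Because unknown routes default to the legacy upstream, the Facade can go live before any feature has been migrated.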

Phase 2: Transform (The Micro-Migration)

Build a single feature (for example, "User Login") in your new modern stack.

- Rule: Never modernize the "Core" first. Modernize a "Leaf Node" (an edge feature) to test your pipeline and build confidence.

Phase 3: Coexist & Eliminate

Route 1% of traffic to the new "User Login" service.

- Validation: Compare the results of the new service with the old one via Shadow Writes (Module 76). Once verified, move 100% of traffic and physically delete the code from the monolith.
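One possible shape for the 1% rollout and the old-versus-new comparison is sketched below. The function names (`bucket`, `use_new_service`, `shadow_compare`) are hypothetical, and hashing the user ID is just one way to keep each user pinned to the same version across requests.

```python
import hashlib
from typing import Any, Callable, List, Tuple


def bucket(user_id: str) -> int:
    """Map a user to a stable bucket 0..99 so they always see the same version."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100


def use_new_service(user_id: str, rollout_percent: int) -> bool:
    """True when this user falls inside the rollout percentage."""
    return bucket(user_id) < rollout_percent


def shadow_compare(
    request: Any,
    legacy_handler: Callable[[Any], Any],
    new_handler: Callable[[Any], Any],
    mismatches: List[Tuple[Any, Any, Any]],
) -> Any:
    """Serve the legacy answer, but run the new service too and record any difference."""
    legacy_result = legacy_handler(request)
    try:
        new_result = new_handler(request)
        if new_result != legacy_result:
            mismatches.append((request, legacy_result, new_result))
    except Exception as exc:  # a crash in the new service must not reach the user
        mismatches.append((request, legacy_result, exc))
    return legacy_result
```

An empty `mismatches` list over a representative traffic sample is the signal that the percentage can be raised.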

3. Data Shadowing & The Double-Write Cycle

Content is easy to modernize; State is hard. When you move a feature to a new microservice, you must ensure the databases remain in sync.

The Double-Write Pattern

- Intercept: The application receives a "Write" (e.g., Update Balance).

- Primary Write: The app writes to the Legacy DB.

- Secondary Write: The app asynchronously writes to the New DB.

- Verification: A background process matches the records. If they differ, the "New" system is not yet ready for authority.
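The four steps above can be sketched with two dictionaries standing in for the databases. Everything here is a simplifying assumption: the `pending` deque stands in for a real asynchronous queue, and `find_mismatches` stands in for the background reconciliation job.

```python
from collections import deque
from typing import Deque, Dict, List, Tuple

legacy_db: Dict[str, int] = {}  # stand-in for the Legacy DB
new_db: Dict[str, int] = {}     # stand-in for the New DB
pending: Deque[Tuple[str, int]] = deque()  # stand-in for an async write queue


def write_balance(account: str, balance: int) -> None:
    legacy_db[account] = balance        # primary write: legacy stays authoritative
    pending.append((account, balance))  # secondary write is deferred, not inline


def drain_pending() -> None:
    """What the asynchronous worker would do: replay queued writes into the New DB."""
    while pending:
        account, balance = pending.popleft()
        new_db[account] = balance


def find_mismatches() -> List[str]:
    """Background verification: keys whose values differ between the two stores."""
    keys = set(legacy_db) | set(new_db)
    return sorted(k for k in keys if legacy_db.get(k) != new_db.get(k))
```

Until `find_mismatches()` stays empty under real traffic, the new store should be treated as a follower, not a source of truth.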

Change Data Capture (CDC)

In 2026, the modern standard is CDC (Change Data Capture) using tools like Debezium.

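- Internal: CDC reads the Transaction Logs of the legacy database (e.g., Oracle Redo Logs) instead of querying the application tables.

- The Physical Benefit: Because it does not poll the application tables, it adds far less load to the legacy database engine than query-based sync, which lowers the risk that the old monolith crashes while you are trying to "Strangle" it. Log reading is not free, though: Debezium's Oracle connector, for example, reads the redo logs through the database's LogMiner interface, so monitor the overhead.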
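On the consuming side, applying change events to the new store can look like the sketch below. It assumes a simplified event carrying the `op`, `before`, and `after` fields of Debezium's change-event envelope; the `apply_change` function, the `id` key field, and the dictionary replica are hypothetical.

```python
from typing import Any, Dict


def apply_change(replica: Dict[Any, dict], event: dict, key_field: str = "id") -> None:
    """Apply one simplified change event to an in-memory replica."""
    op = event["op"]
    if op in ("c", "r", "u"):  # create, snapshot read, update: upsert the new row image
        row = event["after"]
        replica[row[key_field]] = row
    elif op == "d":            # delete: the row image is only present in "before"
        row = event["before"]
        replica.pop(row[key_field], None)
    else:
        raise ValueError(f"unsupported operation: {op!r}")


replica: Dict[Any, dict] = {}
apply_change(replica, {"op": "c", "before": None, "after": {"id": 1, "balance": 100}})
apply_change(
    replica,
    {"op": "u", "before": {"id": 1, "balance": 100}, "after": {"id": 1, "balance": 250}},
)
```

Writing the handler as an upsert keeps it idempotent, which matters because change streams are typically delivered at least once.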

4. Hardware-Mirror: Mainframe vs. Cloud Data Physics

Mainframes (EBCDIC) and Cloud (UTF-8) think about data differently at the physical byte level.

The Encoding Nightmare

- Legacy: Mainframes use EBCDIC (Extended Binary Coded Decimal Interchange Code).

- Modern: Most cloud services and APIs default to UTF-8.

- The Architectural Tax: Your Anti-Corruption Layer (ACL) must translate every text field from EBCDIC to UTF-8. Each conversion is cheap on its own, but in a high-traffic gateway the translation can consume significant compute cycles if it is not optimized.
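A minimal sketch of the text translation an ACL performs, using Python's built-in `cp037` codec. The choice of code page 037 (US/Canada EBCDIC) is an assumption for the example; the actual code page of your mainframe must be confirmed before relying on this.

```python
EBCDIC_CODEC = "cp037"  # assumption: US/Canada EBCDIC; confirm the real code page


def ebcdic_to_utf8(raw: bytes) -> bytes:
    """Decode EBCDIC text bytes and re-encode them as UTF-8."""
    return raw.decode(EBCDIC_CODEC).encode("utf-8")


def utf8_to_ebcdic(raw: bytes) -> bytes:
    """Reverse direction, for writes that go back to the legacy system."""
    return raw.decode("utf-8").encode(EBCDIC_CODEC)


record = bytes([0xC8, 0xC5, 0xD3, 0xD3, 0xD6])  # the word HELLO in EBCDIC
text = ebcdic_to_utf8(record)                   # b"HELLO"
```

This only applies to character fields. Binary and numeric fields in the same record need their own handling and must not be passed through a text codec.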

Endianness: Big vs. Little

- Mainframes (Big-Endian): Store the most significant byte at the smallest address.

- Modern CPUs (Little-Endian): Store the least significant byte first.

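- The Risk: If you pass raw binary data from a mainframe to an x86 cloud server without a translation layer, values are silently misread. A 16-bit big-endian field holding $100$ (bytes 0x00 0x64) is read as $25,600$ (0x6400) on a little-endian CPU, so your $100.00$ transaction might be interpreted as $25,600.00$.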
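The byte-order risk can be reproduced in a few lines. The sketch assumes a 16-bit unsigned integer field; `read_amount` is a hypothetical helper showing the fix, which is to state the byte order explicitly when decoding.

```python
import struct

raw = (100).to_bytes(2, byteorder="big")             # bytes as the mainframe stores them: 00 64
misread = int.from_bytes(raw, byteorder="little")    # naive little-endian read gives 25600
correct = int.from_bytes(raw, byteorder="big")       # explicit big-endian read gives 100


def read_amount(field: bytes) -> int:
    """Decode a 16-bit unsigned big-endian field, regardless of the host CPU."""
    (value,) = struct.unpack(">H", field)  # ">" forces big-endian, "H" is unsigned 16-bit
    return value
```

Declaring the byte order in the format string makes the decoder behave the same on any host, which is the property the translation layer needs.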

5. Decommissioning: The "Scream Test"

The final and most dangerous phase of modernization is turning the old system off.

The Zombie Dependency

You believe the monolith is dead, but a forgotten "Monthly Cron Job" might still be calling it.

- The Scream Test: Physically disable the network connection to the legacy server for 2 hours during a low-traffic window.

- The Metric: If no one "Screams" (no critical alerts fire), the decommission is likely safe. If someone screams, you quickly re-enable the connection and document the missing dependency in your Modernization Registry.

The "Data Vaulting" Requirement

Once silenced, the legacy hardware should not be immediately shredded.

- Architecture: Keep the legacy data in a "Cold Vault" (S3 Glacier) for as long as your regulators require (7 years is a common retention period), but physically shut down the high-wattage servers to meet your FinOps Efficiency Goals (Review Module 70).

6. Branch by Abstraction: Code-Level Modernization

When you can't use a network proxy (e.g., internal library refactoring), use Branch by Abstraction.

- Define a Clean Interface: Create a new interface for the legacy functionality.

- Implementation A (Old): Wrap the legacy code in the interface.

- Implementation B (New): Write the modern version of the code under the same interface.

- Toggling: Use Feature Flags (Module 22) to switch implementation A to B at runtime.
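The four steps can be sketched as follows. The tax calculation, the class names, and the `use_modern_tax` flag are hypothetical stand-ins; a real system would read the flag from its feature-flag service rather than from a dictionary.

```python
from abc import ABC, abstractmethod
from typing import Dict


def legacy_compute_tax(amount_cents: int) -> int:
    # Stand-in for the untouched legacy routine (assumption for this sketch)
    return amount_cents // 5


class TaxCalculator(ABC):
    """The clean interface that callers depend on."""

    @abstractmethod
    def tax_cents(self, amount_cents: int) -> int: ...


class LegacyTaxCalculator(TaxCalculator):
    """Implementation A: wraps the legacy code without changing it."""

    def tax_cents(self, amount_cents: int) -> int:
        return legacy_compute_tax(amount_cents)


class ModernTaxCalculator(TaxCalculator):
    """Implementation B: the rewritten logic behind the same interface."""

    def tax_cents(self, amount_cents: int) -> int:
        return amount_cents * 20 // 100


def get_tax_calculator(flags: Dict[str, bool]) -> TaxCalculator:
    """Pick the implementation at runtime from a feature flag."""
    if flags.get("use_modern_tax", False):
        return ModernTaxCalculator()
    return LegacyTaxCalculator()


feature_flags = {"use_modern_tax": False}
before = get_tax_calculator(feature_flags).tax_cents(1000)
feature_flags["use_modern_tax"] = True
after = get_tax_calculator(feature_flags).tax_cents(1000)
```

Callers only ever see `TaxCalculator`, so once the flag has been on long enough, Implementation A and the flag itself can be deleted without touching them.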

7. Case Study: The "Wall Street" Refactor

A global bank had a Java 8 monolith controlling trillions in transactions.

- The Blocker: 2.5 million lines of code. A rewrite would take 5 years.

- The Strangler: They modernized the "Reporting" module first using the Strangler Fig.

- The Hardware Shift: They used Debezium (CDC) to stream DB changes from the legacy Oracle DB to a modern PostgreSQL cluster in the cloud.

- Result: They modernized the entire "Front Office" stack in 18 months, reducing server maintenance costs by 60% while the old system was still running "Settlements" in the background.

8. Summary: The Modernization Architect's Checklist

- Zero Downtime First: If your plan requires a "48-hour Maintenance Window," your plan is flawed. Use the Facade pattern.

- Modernize the Risk, Not the Tech: Don't use a new framework just because it's cool. Modernize to reduce Operational Risk (e.g., the server is dying) or Business Blockers (e.g., we can't add Japan support).

- Data Sync is King: Use Change Data Capture (CDC) to keep the legacy and modern databases in sync during the transition.

- Identify the "Deadwood": As a rule of thumb, roughly 30% of legacy code is unused. Use Code Coverage tools in production to find dead routes and delete them rather than migrating them.

- Soft Power: Modernization often creates friction with "Legacy Engineers." Build an Inclusive Roadmap where they are trained on the new stack as they help dismantle the old one.

Legacy Modernization is an exercise in Technical Patience. By using the Strangler Fig pattern and Anti-Corruption Layers, you move the risk from a single "Go-Live" Saturday to a series of boring, successful Tuesdays. You graduate from "Managing technical debt" to "Architecting the Rebirth of Modern Systems."

Phase 71: Modernization Actions

- Identify the "Leaf Node" feature in your system with the least dependencies.

- Set up a High-Performance Proxy (Nginx/Kong) to intercept traffic to that feature.

- Draft a Transition ADR (Review Module 53) that defines the success metrics for the migration.

- Perform a "Binary Audit": Identify if your legacy data uses Big-Endian or EBCDIC formats before starting the ACL development.


Part of the Software Architecture Hub - reclaiming the future from the past.